Key Features

- Deep-learning-based real-time noise suppression (RNS)

- Small footprint and low MIPS

- Supports all sample rates (e.g. 8 kHz, 16 kHz, 48 kHz)

- Search & Repair

- Works with a single microphone

- Optimized for high-performance end-to-end voice pipelines

Uniqueness

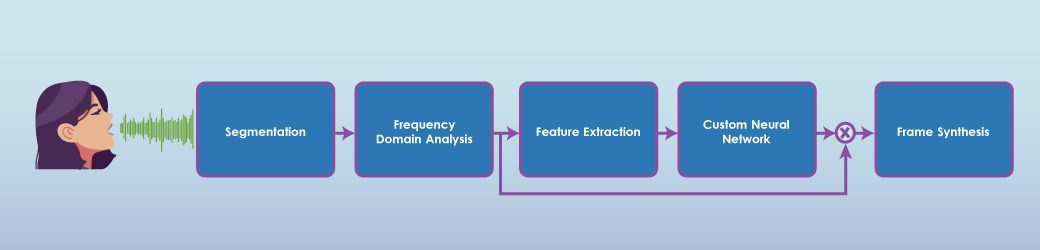

Custom Deep Neural Network

Works for stationary and non-stationary noise

Low latency (<25 ms)

Scalable from MCUs to FPGAs to SoCs
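To make the latency figure concrete, the sketch below computes how many audio samples fit inside a 25 ms window at the sample rates listed above. It is only arithmetic on the numbers quoted on this page; the function name `samples_in_window` is illustrative and says nothing about how Septra actually buffers audio.

```python
def samples_in_window(sample_rate_hz: int, window_ms: float) -> int:
    """Number of whole audio samples captured in window_ms milliseconds."""
    return int(sample_rate_hz * window_ms / 1000)


LATENCY_BUDGET_MS = 25  # upper bound quoted on this page

for rate in (8_000, 16_000, 48_000):
    n = samples_in_window(rate, LATENCY_BUDGET_MS)
    print(f"{rate} Hz: at most {n} samples per {LATENCY_BUDGET_MS} ms")
```

At 16 kHz, for example, anything the pipeline buffers and processes has to stay within 400 samples to remain under the stated bound.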

Use cases

Septra

- Communication devices (smartphones, walkie-talkies, VoIP, wearables)

- Teleconferencing

- Human-to-machine communication

Demos

Real-Time Noise Suppression over a VoIP call

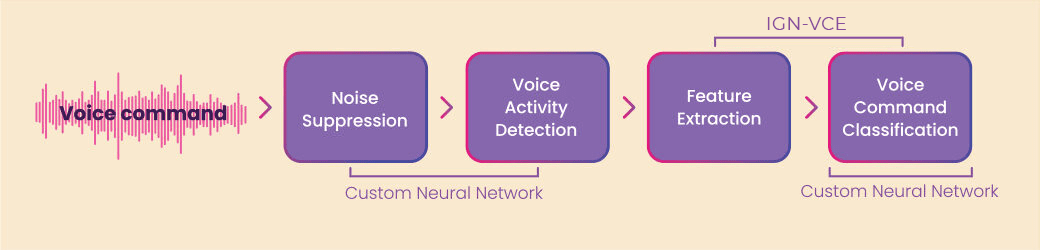

Key Features

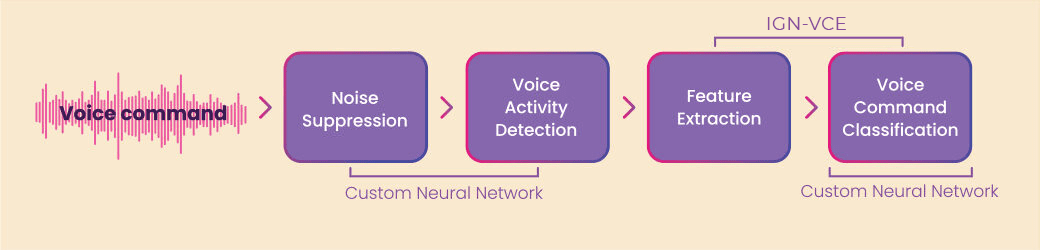

- Edge-based voice command recognition engine (no Internet connection required)

- Deep-learning-based algorithm

- Wake-word support

- High recognition rate in noisy environments

- Supports multi-word voice commands (1 to 3 words per command)

- Ultra-low memory footprint (<38 KB RAM)

- Very low MCPS (<70 MHz on a Cortex-M4 CPU)

- Support for multiple languages
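As a rough illustration of what the memory and CPU figures mean for a system budget, the sketch below estimates how much of a device is left for the application. The 70 MHz and 38 KB inputs are the upper bounds quoted above; the 120 MHz clock and 128 KB of RAM are hypothetical board values chosen only for this example.

```python
def remaining_budget(cpu_mhz, ram_kb, engine_mhz=70.0, engine_ram_kb=38.0):
    """Return (fraction of CPU used by the engine, RAM in KB left for the app).

    engine_mhz and engine_ram_kb default to the upper bounds quoted on this
    page; cpu_mhz and ram_kb describe the target device.
    """
    if engine_mhz > cpu_mhz or engine_ram_kb > ram_kb:
        raise ValueError("engine does not fit on this device")
    return engine_mhz / cpu_mhz, ram_kb - engine_ram_kb


# Hypothetical Cortex-M4 board: 120 MHz core clock, 128 KB RAM.
cpu_share, ram_left = remaining_budget(cpu_mhz=120, ram_kb=128)
print(f"CPU used: {cpu_share:.0%}, RAM left: {ram_left:.0f} KB")
```

Because both figures are upper bounds, the result is a worst-case estimate for the assumed board.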

Uniqueness

Accuracy

Works well in noisy environments. When coupled with our noise suppression engine, it consistently achieves recognition rates above 95%.

Requires Minimal Voice Samples

Our unique audio data preparation technology expands a minimal set of original voice samples into a synthetic dataset that is orders of magnitude larger. This data preparation tool is part of the user software and allows in-field training.
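The general idea of expanding a few recordings into many training examples can be sketched with common augmentation steps: random gain, time shift, and added noise at a chosen signal-to-noise ratio. This is not the vendor's data preparation tool; every function name and parameter range below is an assumption made for illustration.

```python
import math
import random


def rms(signal):
    """Root-mean-square level of a list of samples."""
    return math.sqrt(sum(x * x for x in signal) / len(signal))


def add_noise_at_snr(clean, snr_db, rng):
    """Add white Gaussian noise scaled so the result has the requested SNR in dB."""
    noise = [rng.gauss(0.0, 1.0) for _ in clean]
    # Choose scale so that 20*log10(rms(clean) / rms(scale*noise)) == snr_db.
    scale = rms(clean) / (rms(noise) * 10 ** (snr_db / 20))
    return [c + scale * n for c, n in zip(clean, noise)]


def time_shift(signal, offset):
    """Circularly shift the signal to the right by offset samples."""
    offset %= len(signal)
    return signal[-offset:] + signal[:-offset] if offset else list(signal)


def augment(sample, n_variants, seed=0):
    """Create n_variants randomized copies of one recording."""
    rng = random.Random(seed)
    variants = []
    for _ in range(n_variants):
        gain = rng.uniform(0.5, 1.5)              # assumed loudness range
        shifted = time_shift(sample, rng.randrange(len(sample)))
        scaled = [gain * x for x in shifted]
        snr_db = rng.uniform(5.0, 20.0)           # assumed noise range
        variants.append(add_noise_at_snr(scaled, snr_db, rng))
    return variants


# Toy "recording": 100 ms of a 440 Hz tone sampled at 8 kHz.
tone = [math.sin(2 * math.pi * 440 * t / 8000) for t in range(800)]
dataset = augment(tone, n_variants=100)
print(len(dataset), "synthetic variants from 1 recording")
```

A real pipeline would draw from recorded background noise and more varied transformations, but the multiplication effect is the same: each original sample yields as many variants as are requested.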

Enabling the “Tiny ML” class of applications

Our AI solutions are designed specifically for low-cost, low-power edge devices built on MCUs, DSPs and FPGAs. Because the memory footprint is ultra-low, more RAM remains available to customer applications.

Use cases

Septra

Smart Home

Septra

Automotive

Septra

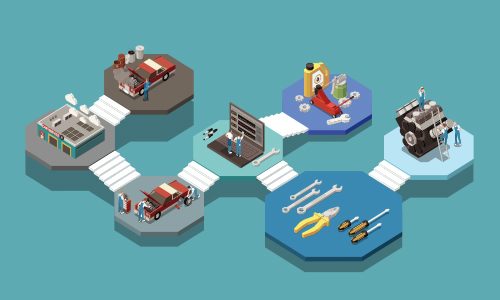

Industrial equipment

Septra

Speakers & Headphones

Septra

Audio-video devices

Case Studies

Demos

Voice Command Recognition on Renesas MCU

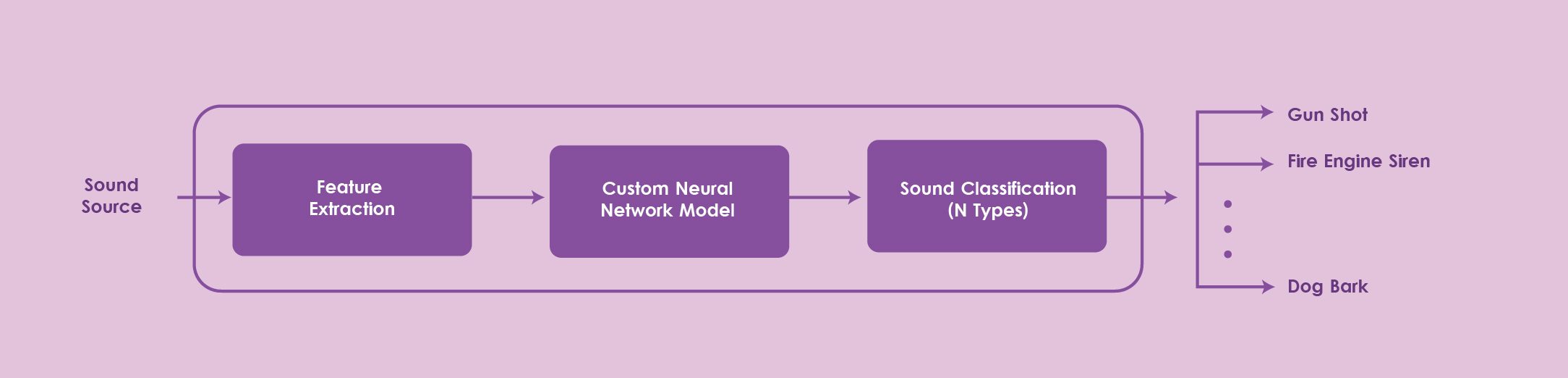

Sound Type Identification

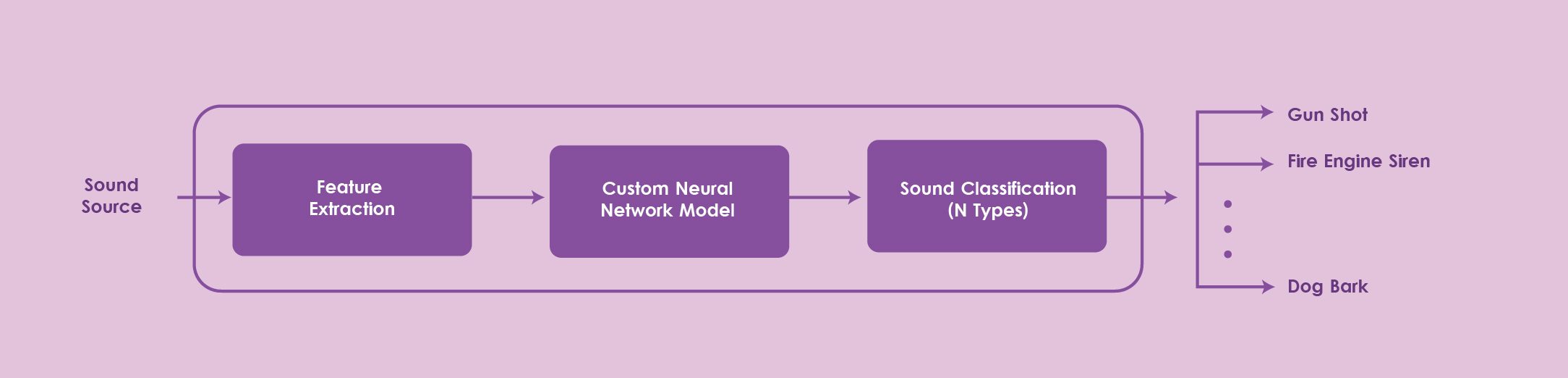

IGN-SEC classifies ambient sound, allowing precise identification of various sound types. The underlying algorithms are accurate enough to discriminate between very similar sound types (e.g. two different sirens, or the barks of two different dog breeds).

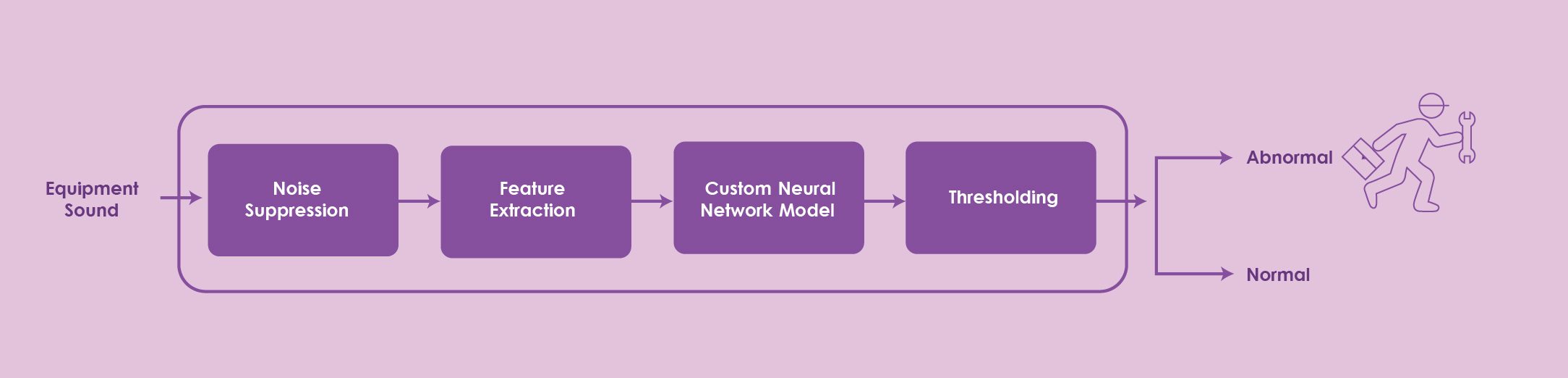

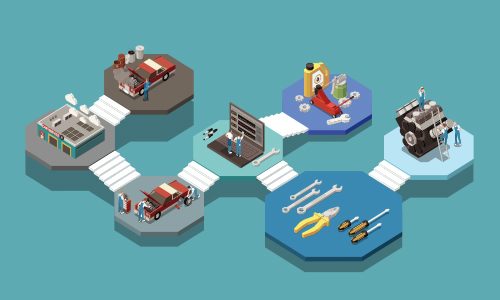

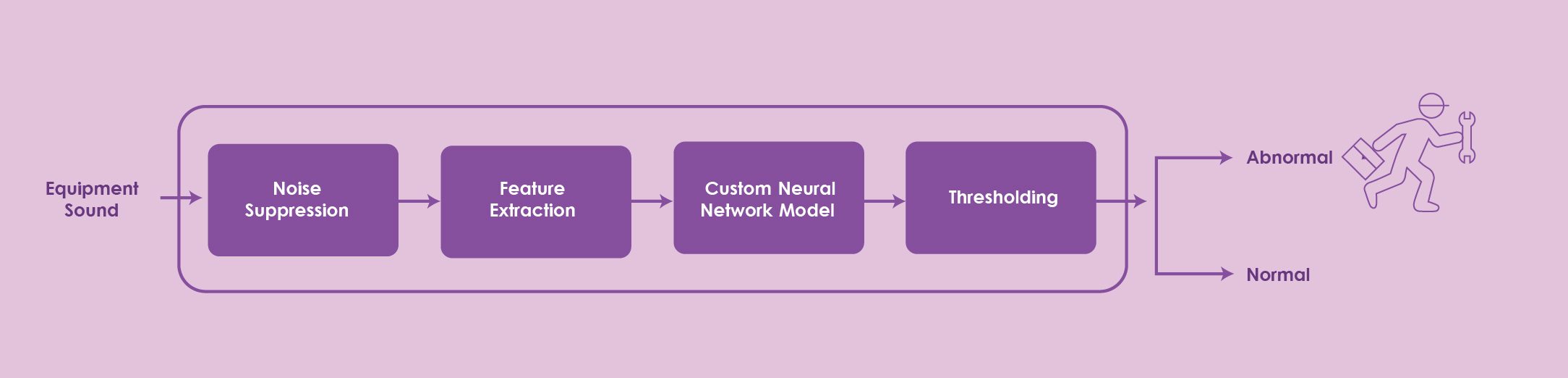

Anomalous Sound Detection:

Anomalies in the operation of equipment and infrastructure can be caught early by analysing the sounds picked up by microphones installed on or close to the equipment. IGN-SEC categorizes the captured audio as normal or abnormal, allowing early prediction of machine failures.
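The normal/abnormal decision described above can be illustrated with a deliberately simple stand-in: learn the typical loudness of a machine during normal operation, then flag frames whose level deviates strongly from it. This energy-based z-score check is an assumption made for illustration and is not IGN-SEC's algorithm; the helper names and the threshold of 3 standard deviations are hypothetical.

```python
import math
import statistics


def frame_rms(samples, frame_len):
    """Split samples into non-overlapping frames and return each frame's RMS level."""
    return [
        math.sqrt(sum(x * x for x in samples[i:i + frame_len]) / frame_len)
        for i in range(0, len(samples) - frame_len + 1, frame_len)
    ]


def fit_baseline(normal_levels):
    """Mean and standard deviation of levels recorded during normal operation."""
    return statistics.mean(normal_levels), statistics.stdev(normal_levels)


def classify(levels, baseline, z_threshold=3.0):
    """Label a frame 'abnormal' when it is more than z_threshold sigmas from the mean."""
    mean, std = baseline
    return [
        "abnormal" if abs(level - mean) > z_threshold * std else "normal"
        for level in levels
    ]


def hum(amplitude, n=160):
    """One period of a 50 Hz machine hum sampled at 8 kHz (toy signal)."""
    return [amplitude * math.sin(2 * math.pi * 50 * t / 8000) for t in range(n)]


# Baseline: five frames of slightly varying but healthy hum.
normal_audio = [x for a in (1.00, 1.02, 0.98, 1.01, 0.99) for x in hum(a)]
baseline = fit_baseline(frame_rms(normal_audio, 160))

# New audio: the middle frame is much louder than anything seen in the baseline.
new_audio = hum(1.0) + hum(1.6) + hum(0.99)
print(classify(frame_rms(new_audio, 160), baseline))
# -> ['normal', 'abnormal', 'normal']
```

A production detector would use richer spectral features and a learned model, but the structure is the same: characterize normal operation first, then score incoming audio against it.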

Markets:

- Automotive

- Consumer Electronics

- Industrial

- Surveillance

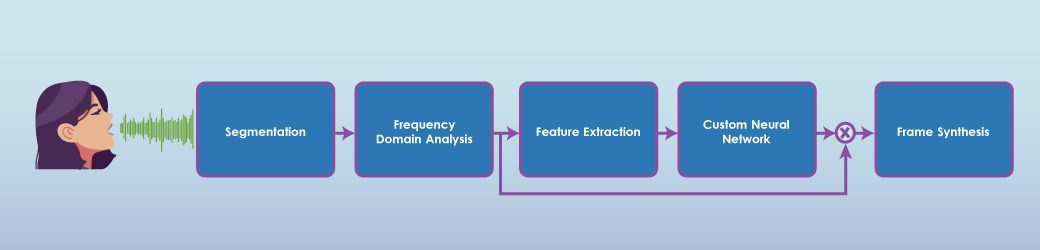
