- Harsh Vajpayee

- January 22, 2025

Vision LLMs: Bridging the Gap Between Vision and Language

Abstract

Vision Large Language Models (Vision LLMs) fuse computer vision and natural language processing, enabling machines to understand, generate, and reason across visual and textual modalities. Models such as CLIP, DALL·E, Flamingo, and Stable Diffusion power real-world applications ranging from text-to-image generation and multimodal search to healthcare diagnostics and autonomous systems. Using techniques like contrastive learning, cross-attention, and diffusion, Vision LLMs bridge the semantic gap between words and images. Challenges such as high computational costs, multimodal data alignment, and ethical concerns persist, but the potential of these models in healthcare, education, entertainment, and e-commerce makes them a cornerstone of the next era of AI-driven innovation.

Introduction

The field of Artificial Intelligence has undergone remarkable transformations, with innovations spanning natural language processing (NLP) and computer vision. Historically, these two domains evolved as distinct silos—one focused on understanding and generating text, the other on processing visual data. However, the advent of Vision-Language Models (Vision LLMs) has revolutionized AI, blending these domains to create systems that can see, read, and reason.

Vision LLMs enable machines to interpret the world in a multimodal manner, much like humans do. By understanding visual inputs (e.g., images or videos) alongside textual data, these models unlock powerful applications: from generating detailed image captions to answering questions based on visual scenes and even creating stunning art from textual descriptions.

For instance, imagine describing a scene as “a dog running in a field with mountains in the background” and having a model generate a corresponding image. Or consider an AI assistant that can analyze a diagram and provide answers to your questions about it. These are no longer futuristic dreams, but real capabilities enabled by Vision LLMs.

The integration of vision and language in AI is transforming industries like healthcare, autonomous systems, entertainment, and e-commerce, while opening new frontiers in creative expression and human-computer interaction. In this blog, we’ll explore how Vision LLMs work, what makes them special, and their potential to reshape our world.

What are Vision LLMs?

Vision LLMs (Vision-Language Models) are AI systems designed to jointly process and reason over visual and textual data. Unlike traditional models that focus on a single modality—either understanding images (e.g., object detection) or language (e.g., text summarization)—Vision LLMs combine the strengths of both.

These models are built to answer fundamental challenges:

- How do we make machines understand the connection between what they “see” and what they “read”?

- How can models generate meaningful responses by fusing visual and textual context?

Core Architecture of Vision LLMs

Vision LLMs have three main components:

- Vision Encoders:

- These are neural networks (e.g., convolutional networks such as ResNet, or Vision Transformers) designed to process visual data such as images or video frames.

- They transform the image into a semantic feature representation—a numerical encoding that captures important visual information like objects, colors, and spatial relationships.

- Language Models (LLMs):

- Pretrained on massive textual data, these models (e.g., GPT, BERT, LLaMA) excel at understanding and generating human language.

- They convert textual inputs into embeddings, a format that machines can understand for processing natural language.

- Multimodal Fusion Layer:

- The core innovation in Vision LLMs lies in the fusion mechanism, where visual and textual features are merged. This layer enables the model to align and reason over multimodal data.

For example, if the text input is “A man riding a bicycle in the rain” and the image shows such a scene, the model aligns the phrase “man riding” with the human figure, “bicycle” with the object, and “rain” with the environmental context.

Training Vision LLMs

Vision LLMs rely on large-scale multimodal datasets containing paired visual and textual data. The training typically follows these steps:

- Pretraining: Models are trained to match images with their corresponding textual descriptions using tasks like contrastive learning or masked token prediction.

- Fine-tuning: Specialized datasets are used to adapt the model to specific applications like visual question answering (VQA), captioning, or text-to-image generation.

Why Are Vision LLMs Important?

Humans interpret the world by combining multiple sensory modalities, like sight and language. Vision LLMs attempt to replicate this holistic understanding, which is crucial for solving real-world problems. They provide:

- Better Understanding: By linking images and text, Vision LLMs can reason beyond single-modal models.

- Generative Abilities: Models like DALL·E can create entirely new visuals from text.

- Improved Accessibility: These models can aid visually impaired individuals by describing images or helping with navigation.

Training Strategies for Vision LLMs

Training Vision LLMs requires large datasets and specific optimization techniques to balance multimodal learning.

1. Multimodal Dataset Preparation

Training data typically includes paired examples of images and text.

Examples:

- Common Crawl or Web Data: Large-scale web scrapes of images with their alt text (e.g., the kind of data used to train CLIP and DALL·E).

- Specialized Datasets: MS-COCO (captioning), Visual Genome (QA), and ImageNet (object recognition).

2. Pretraining Phase

- Objective: Learn general-purpose representations of text and vision.

- Tasks:

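- Contrastive learning: Pull matching image-text pairs together in embedding space and push mismatched pairs apart (e.g., the caption “a dog playing in a park” should score highest against its own image).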

- Multimodal masking: Hide parts of text or image embeddings and predict missing elements.

- Infrastructure: Requires distributed training across GPUs or TPUs due to the large-scale data.
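The multimodal masking task can be sketched in a few lines. This is a minimal illustration, assuming a combined sequence of image patch placeholders and caption words; the `[MASK]` symbol, the `mask_tokens` helper, and the `<patch_i>` names are hypothetical and not taken from any particular model.

```python
import random

MASK = "[MASK]"  # hypothetical mask symbol for this sketch

def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Replace a random subset of tokens with MASK.

    Returns the corrupted sequence and a dict {position: original_token}
    holding the targets a model would be trained to predict.
    """
    rng = random.Random(seed)
    corrupted, targets = [], {}
    for i, tok in enumerate(tokens):
        if rng.random() < mask_prob:
            corrupted.append(MASK)
            targets[i] = tok
        else:
            corrupted.append(tok)
    return corrupted, targets

# Placeholder patch tokens followed by caption words (both illustrative).
patches = [f"<patch_{i}>" for i in range(4)]
caption = "a dog playing in a park".split()
sequence = patches + caption

corrupted, targets = mask_tokens(sequence, mask_prob=0.3, seed=1)
print(corrupted)
print(targets)
```

During pretraining, the loss would be computed only at the masked positions, so the model has to use the surrounding text and image context to recover them.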

3. Fine-Tuning Phase

- Objective: Adapt the pretrained model to specific tasks, such as VQA or text-to-image generation.

- Techniques:

- Supervised Fine-Tuning: Use labeled data specific to the application.

- Few-Shot Learning: Use a handful of examples, either placed in the prompt or used as a small training set, to guide the model (common for LLMs like GPT-4).

- Instruction Tuning: Teach the model to follow multimodal instructions, enabling conversational agents.

Technical Details of Vision LLMs

Vision LLMs rely on innovative architectures and techniques to process and fuse multimodal data. Here’s a breakdown of their core components and functioning:

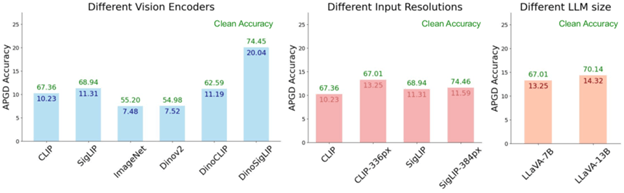

1. Vision Encoder

The vision encoder extracts meaningful features from visual data such as images or video frames. Common approaches include:

- Convolutional Neural Networks (CNNs): Legacy models like ResNet are used for feature extraction.

- Vision Transformers (ViTs): Modern vision encoders like ViTs treat images as sequences of patches and process them using self-attention mechanisms, making them more flexible for multimodal tasks.

- Pretrained Feature Extractors: Image encoders from models like CLIP (ResNet or ViT variants) are often reused to turn images into fixed-size embeddings; ALIGN uses EfficientNet in the same role.
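To make "images as sequences of patches" concrete, here is a small sketch of the patch-splitting step a ViT performs before self-attention. It assumes a single-channel image stored as a list of rows; the `patchify` helper is illustrative, and a real encoder would also apply a learned linear projection and position embeddings to each patch.

```python
def patchify(image, patch_size):
    """Split an H x W image (list of rows) into flattened square patches,
    ordered left-to-right, top-to-bottom."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [image[r][c]
                     for r in range(top, top + patch_size)
                     for c in range(left, left + patch_size)]
            patches.append(patch)
    return patches

# A 4x4 toy image whose pixel values are 0..15.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
patches = patchify(image, patch_size=2)
print(patches)  # four patches of four pixels each

# A 224x224 image with 16x16 patches yields a sequence of 14 * 14 patches.
big = [[0] * 224 for _ in range(224)]
print(len(patchify(big, 16)))
```

The sequence length grows with the square of the image side divided by the patch size, which is one reason high-resolution inputs are costly for attention-based encoders.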

2. Language Model

The language model processes text input, such as captions, questions, or prompts. Examples include:

- Transformer-Based Models: Models like GPT, BERT, or LLaMA are widely used for textual understanding.

- Tokenization: Text is broken into tokens, embedded, and processed using self-attention to understand context and meaning.
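The tokenize-then-embed step can be sketched as follows. This is a toy version that splits on whitespace and uses a random embedding table; production models use subword tokenizers (such as BPE) and learned embeddings, and the helper names here are hypothetical.

```python
import random

def build_vocab(corpus):
    """Assign an integer id to every word seen in the corpus; id 0 is reserved for unknown words."""
    vocab = {"<unk>": 0}
    for sentence in corpus:
        for word in sentence.lower().split():
            vocab.setdefault(word, len(vocab))
    return vocab

def encode(text, vocab):
    """Map text to a list of token ids, falling back to <unk> for unseen words."""
    return [vocab.get(word, vocab["<unk>"]) for word in text.lower().split()]

def make_embedding_table(vocab_size, dim, seed=0):
    """Random stand-in for a learned embedding matrix (one row per token id)."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(vocab_size)]

corpus = ["a man riding a bicycle in the rain"]
vocab = build_vocab(corpus)
ids = encode("a man riding a unicycle", vocab)   # "unicycle" is out of vocabulary
table = make_embedding_table(len(vocab), dim=4)
embeddings = [table[i] for i in ids]

print(ids)
print(len(embeddings), len(embeddings[0]))
```

The resulting list of vectors is what the self-attention layers operate on; repeated tokens (the two occurrences of "a") start from the same embedding and are only distinguished later by position and context.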

3. Multimodal Fusion

This is the heart of Vision LLMs, where features from the visual and textual modalities are combined. Fusion can happen:

- Early Fusion: Raw pixel data and textual tokens are fused in initial layers. This approach requires large computational resources.

- Late Fusion: Visual and textual embeddings are processed separately and combined in higher layers. This is computationally efficient but may lose some cross-modal context.

- Cross-Attention Mechanisms: Many models, like Flamingo, use cross-attention layers to align text and vision representations.
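A minimal sketch of cross-attention may help here: text tokens act as queries, and image patch features act as keys and values. The vectors below are made-up toy values, and the learned query/key/value projections and multiple heads used in real models (including Flamingo) are omitted for brevity.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross_attention(queries, keys, values):
    """Scaled dot-product attention.

    Each query (a text token) gets a weighted average of the values
    (image patches), weighted by query-key similarity.
    """
    scale = math.sqrt(len(keys[0]))
    outputs, all_weights = [], []
    for q in queries:
        weights = softmax([dot(q, k) / scale for k in keys])
        out = [sum(w * v[d] for w, v in zip(weights, values))
               for d in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Toy features: two text tokens and three image patches, all 2-dimensional.
text_tokens = [[1.0, 0.0], [0.0, 1.0]]
image_patches = [[4.0, 0.0], [0.0, 4.0], [0.0, 0.0]]

outputs, weights = cross_attention(text_tokens, image_patches, image_patches)
for w in weights:
    print([round(x, 3) for x in w])
```

Each row of weights shows which patches a text token attends to; here the first token focuses on the first patch and the second token on the second, which is the alignment behavior the fusion layer is meant to learn.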

4. Learning Objectives

Training Vision LLMs involves multimodal learning objectives:

- Contrastive Learning: The model is trained to maximize the similarity of matched image-text pairs and minimize the similarity of mismatched pairs (e.g., CLIP).

- Masked Token Prediction: Inspired by BERT, the model predicts missing parts of text or visual embeddings, forcing it to learn relationships between modalities.

- Generative Learning: Models like DALL·E generate new data (images or text) based on cross-modal inputs.
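The contrastive objective can be written down compactly. The sketch below is a plain-Python version of a symmetric, CLIP-style loss over a small batch, assuming the embeddings have already been produced by the two encoders; the function names and the temperature value are illustrative choices, not taken from a specific implementation.

```python
import math

def normalize(v):
    """Scale a vector to unit length so dot products are cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def cross_entropy(logits, target):
    """Negative log-probability of the target index under softmax(logits)."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_sum - logits[target]

def clip_style_loss(image_embs, text_embs, temperature=0.07):
    """Symmetric contrastive loss: image i should match text i, and vice versa."""
    imgs = [normalize(v) for v in image_embs]
    txts = [normalize(v) for v in text_embs]
    n = len(imgs)
    # logits[i][j] = similarity between image i and text j, sharpened by temperature.
    logits = [[sum(a * b for a, b in zip(i, t)) / temperature for t in txts]
              for i in imgs]
    loss_i2t = sum(cross_entropy(logits[k], k) for k in range(n)) / n
    loss_t2i = sum(cross_entropy([logits[r][k] for r in range(n)], k)
                   for k in range(n)) / n
    return (loss_i2t + loss_t2i) / 2

images = [[1.0, 0.0], [0.0, 1.0]]
matched_texts = [[1.0, 0.0], [0.0, 1.0]]
swapped_texts = [[0.0, 1.0], [1.0, 0.0]]

print(clip_style_loss(images, matched_texts))  # small: pairs line up
print(clip_style_loss(images, swapped_texts))  # large: pairs are mismatched
```

Minimizing this loss pulls each image toward its own caption and away from every other caption in the batch, which is why larger batches give the model more mismatched pairs to learn from.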

5. Architectures in Use

- CLIP: Two separate encoders for text and vision are trained with a contrastive loss.

- DALL·E: The original version uses a Transformer-based decoder for text-to-image generation; later versions use diffusion.

- Flamingo: Combines frozen LLMs with vision encoders and adds cross-attention layers for multimodal input.

Training Challenges in Vision LLMs

Training Vision LLMs is a complex task that demands careful consideration of multimodal data, computation, and alignment.

1. Dataset Challenges

- Multimodal Data Collection:

- Requires large datasets where images and text are meaningfully paired.

- Issues: Noise in web-scraped data, lack of domain-specific datasets (e.g., medical or industrial).

- Data Bias:

- Models may learn biased associations (e.g., gender stereotypes in text or racial biases in images).

2. Compute Requirements

- High Computational Cost:

- Vision LLMs require massive computational resources, often running on large clusters with hundreds or thousands of GPUs or TPUs.

- Example: Training a model like DALL·E or CLIP can take weeks, even on state-of-the-art infrastructure.

3. Cross-Modal Alignment

- Semantic Misalignment:

- Aligning visual features (e.g., object shapes) with abstract textual concepts (e.g., “love” or “beauty”) is non-trivial.

- Attention Bottlenecks:

- The model must attend to the relevant parts of the image or text without overfitting to irrelevant details.

4. Scalability

- Memory Limitations:

- Vision LLMs deal with high-dimensional data (pixels and text tokens), which can quickly exceed GPU memory.

- Training Stability:

- Multimodal training can be unstable, requiring careful tuning of learning rates, batch sizes, and loss functions.

5. Evaluation Metrics

- Subjective Quality:

- Judging the quality of generated images or textual responses is often subjective. Automatic metrics like FID (Fréchet Inception Distance) for images or BLEU for text do not always agree with human judgment.

- Task-Specific Metrics:

- Evaluating Vision LLMs for domain-specific tasks (e.g., medical diagnostics) requires specialized metrics and datasets.
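One way to see why overlap-based metrics can mislead is to compute the clipped n-gram precision that BLEU is built on. The sketch below implements only that component (no brevity penalty, no averaging over n-gram orders), and the example captions are invented for illustration.

```python
from collections import Counter

def ngrams(tokens, n):
    """All contiguous n-token windows in the sequence."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def modified_precision(candidate, reference, n):
    """Fraction of candidate n-grams found in the reference,
    with each n-gram's count clipped to its count in the reference."""
    cand = Counter(ngrams(candidate, n))
    ref = Counter(ngrams(reference, n))
    total = sum(cand.values())
    if total == 0:
        return 0.0
    clipped = sum(min(count, ref[gram]) for gram, count in cand.items())
    return clipped / total

reference = "a dog runs across the field".split()
exact = "a dog runs across the field".split()
paraphrase = "a puppy sprints through the meadow".split()

print(modified_precision(exact, reference, 1))       # identical caption
print(modified_precision(paraphrase, reference, 1))  # same meaning, few shared words
print(modified_precision(paraphrase, reference, 2))  # no shared word pairs
```

The paraphrase describes the same scene but shares only two words with the reference, so its score is low even though a human would likely accept it as a caption.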

6. Ethical and Societal Concerns

- Misuse Potential:

- Text-to-image models can generate deepfakes or misleading visuals, raising ethical concerns.

- Sustainability:

- Training Vision LLMs consumes vast energy resources, impacting carbon footprints.

Real-World Applications of Vision LLMs

Vision LLMs have unlocked a wide range of real-world use cases across multiple industries by combining visual and textual understanding. Here are some key applications:

1. Healthcare

- Medical Diagnostics:

- Vision LLMs integrate X-rays, MRIs, or CT scans with patient notes to improve diagnostic accuracy.

- Example: Identifying lung abnormalities in X-rays based on radiology reports.

- Medical Image Captioning: Automatically generate descriptions for medical images to assist radiologists.

- Example: “A 2 cm lesion detected in the left kidney.”

- Surgical Guidance: Real-time analysis of surgical video feeds and overlaying textual instructions.

2. Retail and E-Commerce

- Multimodal Search: Customers can combine text and images to search for products.

- Example: Uploading a photo of a red dress and typing “with floral prints” to find similar items.

- Virtual Try-Ons: Generate realistic product visuals for online try-ons (e.g., clothing or eyewear).

- Content Moderation: Detect inappropriate content in product listings (e.g., harmful images paired with false descriptions).

3. Autonomous Systems

- Self-Driving Cars: Fuse visual data from cameras with textual data from road signs or navigation instructions.

- Example: Interpreting “Speed Limit 50” in road scenes to guide autonomous driving.

- Drones: Drones with Vision LLMs can combine aerial imagery with textual mission instructions for surveillance or rescue operations.

4. Media and Entertainment

- Content Creation: Text-to-image generation for creating artwork, movie posters, and animations.

- Example: Using DALL·E to design fantasy landscapes for a video game.

- Automatic Captioning: Generating captions for photos, videos, or live streams for accessibility.

5. Education and Training

- Visual Explanations: Generate diagrams or illustrations to complement textual learning materials.

- Example: Creating an image to explain “the water cycle” based on text input.

- Interactive Learning: Intelligent tutors that process both diagrams and text to answer questions.

6. Security and Surveillance

- Behavior Analysis: Recognize suspicious activities by analyzing visual feeds and contextual text (e.g., CCTV footage paired with reports).

- Biometric Identification: Verify identity using multimodal inputs like facial scans and textual records.

7. Art and Design

- Generative Art: Artists can use text-to-image Vision LLMs to quickly prototype ideas.

- Example: “Generate an abstract painting in Van Gogh’s style.”

8. Customer Support

- Visual AI Assistants: Chatbots equipped with Vision LLMs can process images sent by users to answer questions.

- Example: “Why is my printer not working?” alongside an image of the printer’s error screen.

Text-to-Image Generation

Text-to-image generation is one of the most transformative applications of Vision LLMs, enabling AI to create visually realistic or imaginative content from textual descriptions. This capability bridges the gap between natural language and visual artistry.

How Text-to-Image Models Work

Text-to-image generation involves translating a textual description into a coherent, high-quality image. Models like DALL·E, Stable Diffusion, and Imagen have set benchmarks in this domain.

- Input: A textual description such as “A futuristic cityscape at sunset with flying cars.”

- Output: A generated image depicting the described scene.

Key Components

- Text Encoder:

- A pretrained text encoder (e.g., CLIP’s text encoder in Stable Diffusion or T5 in Imagen) converts text into latent embeddings that capture semantic meaning.

- Image Generator:

- Diffusion models or GANs (Generative Adversarial Networks) decode the latent representation into pixel data.

- Cross-Attention Mechanism:

- Enables alignment between text and visual features, ensuring that the generated image matches the textual input.

- Iterative Refinement:

- Diffusion models start with random noise and iteratively refine it to generate a high-quality image.
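The iterative refinement loop can be illustrated with a toy DDPM-style sampler. This is a sketch under strong assumptions: the "image" is a three-number vector, text conditioning is left out, and the trained noise-prediction network is replaced by `oracle_noise`, a hypothetical stand-in that knows the target exactly. The schedule values are illustrative.

```python
import math
import random

def make_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linear noise schedule; returns betas, alphas, and cumulative alpha products."""
    betas = [beta_start + (beta_end - beta_start) * t / (num_steps - 1)
             for t in range(num_steps)]
    alphas = [1.0 - b for b in betas]
    alpha_bars, running = [], 1.0
    for a in alphas:
        running *= a
        alpha_bars.append(running)
    return betas, alphas, alpha_bars

def oracle_noise(x_t, target, alpha_bar):
    """Stand-in for the trained network: the exact noise when the data is one known point."""
    return [(x - math.sqrt(alpha_bar) * m) / math.sqrt(1.0 - alpha_bar)
            for x, m in zip(x_t, target)]

def sample(target, num_steps=1000, seed=0):
    """Start from Gaussian noise and denoise step by step toward the target."""
    rng = random.Random(seed)
    betas, alphas, alpha_bars = make_schedule(num_steps)
    x = [rng.gauss(0.0, 1.0) for _ in target]
    for t in reversed(range(num_steps)):
        eps = oracle_noise(x, target, alpha_bars[t])
        coef = betas[t] / math.sqrt(1.0 - alpha_bars[t])
        mean = [(xi - coef * e) / math.sqrt(alphas[t]) for xi, e in zip(x, eps)]
        if t > 0:
            # Intermediate steps re-inject a small amount of noise.
            sigma = math.sqrt(betas[t])
            x = [m + sigma * rng.gauss(0.0, 1.0) for m in mean]
        else:
            # The final step is deterministic.
            x = mean
    return x

target = [0.5, -1.0, 2.0]
result = sample(target)
print([round(v, 4) for v in result])
```

In a real text-to-image model the noise predictor is a neural network that also receives the text embeddings, which is how the prompt steers each refinement step.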

Applications in Real-World Use Cases

- Marketing and Design: Generate custom visuals for advertisements or social media.

- Gaming: Create realistic assets like characters, landscapes, or props.

- Entertainment: Design concept art for movies or animation based on textual scripts.

- Education: Generate visuals to accompany learning materials (e.g., scientific illustrations).

Healthcare Applications

Vision LLMs have immense potential in healthcare, where they can integrate textual and visual data to improve diagnostics, accessibility, and patient outcomes.

Key Applications

- Medical Diagnostics:

- Vision LLMs analyze radiology scans (X-rays, MRIs, CT scans) alongside textual medical records to assist in diagnosing conditions.

- Example: Detecting lung anomalies in chest X-rays while correlating findings with patient symptoms.

- Medical Image Captioning:

- Automatically generating descriptions of medical scans to assist radiologists or enable accessibility for non-specialists.

- Example: “A CT scan shows a 5 mm nodule in the upper lobe of the right lung.”

- Surgical Assistance:

- Integrating real-time video data from surgery with contextual instructions or warnings.

- Example: Highlighting anatomical landmarks during laparoscopic procedures based on text input.

- Drug Discovery and Research:

- Analyzing biomedical images alongside research literature to identify potential drug targets.

- Patient Accessibility:

- Assisting visually impaired patients by describing medical charts, prescriptions, or visual aids.

Challenges in Healthcare

- Data Privacy: Ensuring patient data remains secure and anonymized during model training.

- Accuracy: The cost of errors in diagnosis or interpretation is high, requiring rigorous validation.

- Bias: Training data must represent diverse populations to avoid biased outcomes.

Types of Vision LLMs and How They Differ

Vision LLMs come in various forms, each tailored to specific tasks or domains. Below are key types and their differences:

1. CLIP (Contrastive Language-Image Pretraining)

- Description: CLIP uses two separate encoders for text and images and trains them with a contrastive learning objective. It learns to align text and images, ensuring that semantically related pairs are close in the embedding space.

- Strengths:

- Excellent at zero-shot learning (e.g., classifying objects without retraining).

- Fast and efficient at matching text and image pairs.

- Weaknesses:

- Cannot generate images; only works for classification or retrieval.

- Applications:

- Content moderation, image search, and zero-shot classification.
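Zero-shot classification with a CLIP-style model comes down to comparing one image embedding against the embeddings of several text prompts. The sketch below uses hand-written toy vectors in place of real encoder outputs; the `zero_shot_classify` helper and the temperature value are assumptions made for illustration.

```python
import math

def cosine(a, b):
    """Cosine similarity between two vectors."""
    num = sum(x * y for x, y in zip(a, b))
    den = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return num / den

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def zero_shot_classify(image_emb, label_embs, temperature=0.01):
    """Score an image embedding against text-prompt embeddings and return label probabilities."""
    labels = list(label_embs)
    scores = [cosine(image_emb, label_embs[label]) / temperature for label in labels]
    return dict(zip(labels, softmax(scores)))

# Toy embeddings standing in for the outputs of the image and text encoders.
image_emb = [0.9, 0.1, 0.0]
label_embs = {
    "a photo of a dog": [1.0, 0.0, 0.0],
    "a photo of a cat": [0.0, 1.0, 0.0],
    "a photo of a car": [0.0, 0.0, 1.0],
}

probs = zero_shot_classify(image_emb, label_embs)
best = max(probs, key=probs.get)
print(best)
```

No classifier is trained for the new labels: adding a class only requires writing another prompt and embedding it, which is what makes the approach zero-shot.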

2. DALL·E (Text-to-Image Generation)

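- Description: DALL·E is a generative model that creates high-quality images from textual descriptions. The original DALL·E generated image tokens with an autoregressive Transformer; DALL·E 2 and later versions combine text embeddings with diffusion-based generative techniques.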

- Strengths:

- Can generate detailed, creative images directly from text prompts.

- Handles abstract or imaginative prompts (e.g., “A panda painting a picture of a sunset”).

- Weaknesses:

- Computationally expensive.

- Lacks fine-grained control over output.

- Applications:

- Marketing, entertainment, and creative industries.

3. BLIP (Bootstrapping Language-Image Pre-training)

- Description: BLIP is a multimodal model designed for image captioning, VQA (visual question answering), and retrieval tasks. It bootstraps its training data from noisy web image-text pairs: a captioner generates synthetic captions and a filter removes noisy ones.

- Strengths:

- Strong performance on image captioning tasks.

- Adapts well to domain-specific datasets.

- Weaknesses:

- Generates text only (captions and answers); it cannot generate images.

- Applications:

- Accessibility tools, automated content tagging, and VQA systems.

4. Stable Diffusion

- Description: A text-to-image generative model based on latent diffusion. It focuses on creating high-resolution images while being relatively lightweight compared to earlier generative models.

- Strengths:

- Highly efficient for generating detailed images.

- Open-source and customizable.

- Weaknesses:

- Requires careful prompt engineering to get precisely controlled outputs.

- Applications:

- Design prototyping, creative projects, and generative art.

5. GIT (Generative Image-to-text Transformer)

- Description: GIT, from Microsoft, pairs a single image encoder with a single text decoder and is trained to generate text conditioned on an image. It handles image captioning and VQA as text generation; it does not generate images.

- Strengths:

- Unified, simple framework that treats captioning and question answering as one text-generation task.

- Weaknesses:

- Output is text only, so it cannot be used for image generation.

- Applications:

- Image captioning and content creation.

6. IDEFICS (Image-aware Decoder Enhanced à la Flamingo with Interleaved Cross-attentionS)

- Description: IDEFICS is an open multimodal model developed by Hugging Face as a reproduction of DeepMind’s Flamingo, designed for tasks like image captioning, visual question answering (VQA), and creative image-text interactions. It uses the Llama language backbone and is trained on large-scale public datasets, including LAION image-text pairs, to align vision and language.

- Strengths:

- Strong commonsense reasoning capabilities.

- Processes high-resolution images.

- Excellent generalization on diverse multimodal tasks.

- Weaknesses:

- Computationally intensive training process.

- Requires large, high-quality multimodal datasets.

- Applications:

- Image captioning for accessibility tools.

- Creative tasks like content generation and storytelling.

- Advanced VQA systems.

7. Kosmos-2

- Description: Kosmos-2, developed by Microsoft Research, is a multimodal large language model that builds on Kosmos-1 and adds grounding: it can link phrases in text to specific image regions (bounding boxes) and accept region references as input.

- Strengths:

- Handles grounded cross-modal tasks such as referring expression comprehension and grounded captioning.

- Strong multilingual and multimodal reasoning capabilities.

- Excels in chain-of-thought reasoning for complex tasks.

- Weaknesses:

- High computational requirements for fine-tuning.

- Limited availability of diverse multimodal training data in certain languages.

- Applications:

- Multimodal virtual assistants.

- Interactive systems for education and content creation.

- Multilingual multimodal customer support.

8. Flamingo

- Description: Flamingo by DeepMind specializes in few-shot multimodal learning, combining frozen vision and language models with cross-attention mechanisms. It adapts quickly to new tasks with minimal data.

- Strengths:

- Requires minimal task-specific data for adaptation.

- Flexible for diverse multimodal applications.

- Performs well on real-world datasets.

- Weaknesses:

- May struggle with highly complex reasoning tasks requiring extensive fine-tuning.

- Limited scalability for industrial applications.

- Applications:

- Healthcare diagnostics with image-text integration.

- Educational tools combining text, images, and real-time assistance.

- Interactive multimodal assistants.

9. LLaVA (Large Language and Vision Assistant)

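- Description: LLaVA connects a pretrained vision encoder (CLIP) to an open LLM (Vicuna) through a projection layer and is tuned on visual instruction data, giving it GPT-like conversational abilities for interactive multimodal tasks like VQA and image-based dialogues.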

- Strengths:

- Efficient parameter tuning.

- Excels in interactive and reasoning-based tasks.

- Simplified deployment for conversational AI.

- Weaknesses:

- Limited scope for tasks requiring high-resolution image understanding.

- Struggles with highly domain-specific visual datasets.

- Applications:

- Visual customer support.

- Educational tools for interactive learning.

- Creative tasks involving multimodal storytelling.

10. Otter

- Description: Otter is a multimodal model built on OpenFlamingo and instruction-tuned with in-context examples. It aims for efficient visual and textual reasoning with comparatively modest training resources, with a focus on accessibility.

- Strengths:

- Highly optimized for low-resource environments.

- Lightweight and deployable on AR/VR devices.

- Retains strong multimodal reasoning capabilities.

- Weaknesses:

- Limited scalability for large-scale tasks.

- Reduced performance on high-complexity multimodal datasets.

- Applications:

- Real-time visual question answering on mobile devices.

- AR/VR tools for interactive environments.

- Edge-based multimodal systems for accessibility.

11. Bard-Visual

- Description: Bard-Visual refers here to the image-understanding capability of Google’s conversational AI Bard (since rebranded as Gemini). It incorporates visual understanding into conversational tasks like answering questions about images, object identification, and suggestion generation.

- Strengths:

- Seamless integration with text-based conversational flows.

- Supports rich visual reasoning.

- Direct integration with Google’s ecosystem.

- Weaknesses:

- Limited fine-tuning for highly specific visual datasets.

- Dependency on Google’s ecosystem for full utilization.

- Applications:

- General-purpose AI assistants.

- Interactive search queries involving images.

- Visual aids in learning and training platforms.

12. M6 (Multi-Modality to Multi-Modality Multitask Mega-transformer)

- Description: M6, developed by Alibaba DAMO Academy, is a unified multimodal framework that supports text-to-image generation, VQA, and image captioning, emphasizing scalability and task generalization.

- Strengths:

- Unified framework for multiple tasks.

- Scalable for industrial applications.

- Multilingual support for diverse global use cases.

- Weaknesses:

- Requires significant computational resources for training.

- May struggle with real-time performance in resource-limited settings.

- Applications:

- E-commerce image generation and personalization.

- Content creation for marketing and media.

- Multimodal search and recommendation systems.

13. GPT-Vision (GPT-4V)

- Description: GPT-4V (GPT-4 with vision) by OpenAI extends GPT-4’s capabilities to vision tasks, enabling conversational interactions with visual inputs like images and diagrams.

- Strengths:

- Superior conversational multimodal reasoning.

- Strong integration with OpenAI’s ecosystem.

- Excellent performance in visual storytelling and complex reasoning tasks.

- Weaknesses:

- Resource-heavy for deployment at scale.

- Limited task flexibility compared to dedicated vision models.

- Applications:

- Diagram interpretation and visual explanations in education.

- Customer support involving visual input.

- Creative applications like visual storytelling.

Conclusion

Vision LLMs stand at the forefront of multimodal AI, demonstrating unprecedented capabilities in unifying vision and language tasks. From creating lifelike images from textual descriptions to enabling advanced applications in medical diagnostics, content creation, and autonomous systems, these models are reshaping industries. Each type of Vision LLM, from classification-focused CLIP to generative models like DALL·E and Stable Diffusion, addresses unique challenges, unlocking diverse use cases tailored to specific domains. However, the journey is not without obstacles, including the need for extensive computational resources, domain-specific data, and ethical safeguards. As researchers refine architectures and training strategies, Vision LLMs promise to transform how we interact with and interpret the world, setting the stage for the seamless integration of human creativity and AI’s multimodal understanding.