- Mounica Golkonda

- June 8, 2022

Cobots and Vision: Pose estimation for pick and stow operations

Introduction

Collaborative robots, or cobots, are being adopted in large fulfillment centers to streamline logistics and improve efficiency from procurement through last-mile delivery.

With the global collaborative robot market forecast to grow at a Compound Annual Growth Rate (CAGR) of 60% through 2030 [3], cobots are paving the way for collaborative, safe, and productive warehouse automation. They offer a high degree of autonomy, efficient navigation, and flexible robotic manipulation. These robots can work alongside employees to process bulk orders, aiming for zero errors in the shortest possible time.

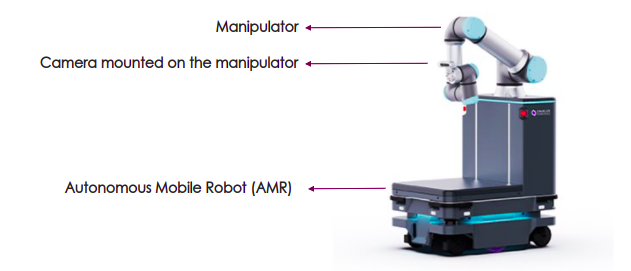

Next Gen AMRs (Autonomous Mobile Robots) – Mobile Cobots

Along with autonomy, navigation, and manipulation capabilities, it is becoming increasingly important to build computer vision capability into cobots. Vision helps cobots detect and locate objects, scan QR codes and bar codes, and recognize patterns. In a traditional system, objects and obstacles must be presented to a cobot in a structured way. With computer vision built into cobots, this is no longer necessary. The same cobot can therefore handle several types of tasks: it can be assigned one set of tasks in the morning and a different set in the afternoon. This provides a great degree of flexibility in operating cobots in warehouses and retail fulfillment centers.

Computer Vision for Cobots

Retail fulfillment centers deal with two variations of the object-picking application.

Pick – Picking an object from the shelf and placing it in a bin.

Environmental conditions play a major role in the pick task. The key factors affecting the vision module are lighting variations on the shelf and the width and depth of the shelf stack area.

Stow – Picking an object from the bin and stowing it on the desired shelf.

The bin is a highly unstructured environment: the positions of the other objects change every time an object is picked from it. This makes the vision module of the stow task considerably more complex and challenging.

Along with the above, the challenges in camera placement, object localization and type of objects (variations in size, shape & reflection) are common to both tasks.

To pick an object in either task, a robot requires the exact location of the object. This data is generated using a 2D depth map created by a stereo vision camera. Camera placement plays a critical role in the performance of both the vision module and the robotic manipulation. The general factors to consider when choosing a camera are the enterprise product depth/breadth, lighting, temperature, and other environmental conditions.
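
As a minimal sketch of how a depth map yields a 3D location, the snippet below back-projects one pixel and its depth value into the camera frame using the pinhole camera model. The function name `deproject_pixel` and the intrinsic values are illustrative assumptions, not calibrated values from the camera used in this work.

```python
import numpy as np

def deproject_pixel(u, v, depth_m, fx, fy, cx, cy):
    """Back-project pixel (u, v) with depth in metres to a 3D point
    in the camera frame, assuming an undistorted pinhole model."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return np.array([x, y, depth_m])

# Hypothetical intrinsics for a 640x480 depth stream (assumed, not calibrated).
fx, fy, cx, cy = 600.0, 600.0, 320.0, 240.0

# A pixel 60 columns right of the principal point, observed at 0.5 m.
point = deproject_pixel(380, 240, 0.5, fx, fy, cx, cy)
print(point)
```

In practice the intrinsics would come from the camera's calibration, and lens distortion would need to be handled before applying this formula.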

Object localization is more complex for the stow task than for the pick task, because stow involves cluttered scenes. The localization procedure identifies the location of the desired object in the scene so that the robotic arm can grasp it.

The object's location is expressed as its position and orientation; computing these is called pose estimation. The estimated pose is a critical input for robotic arm automation.

The following section describes the implementation of a pose estimation algorithm in detail.
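
To illustrate why the pose matters for grasping, the sketch below treats a pose as a rotation matrix `R` and a translation vector `t`, and uses it to map a grasp point defined on the object into the camera frame. The helper names and the example values are assumptions chosen for illustration.

```python
import numpy as np

def rotation_about_z(angle_rad):
    """Rotation matrix for a rotation of angle_rad about the Z axis."""
    c, s = np.cos(angle_rad), np.sin(angle_rad)
    return np.array([[c, -s, 0.0],
                     [s,  c, 0.0],
                     [0.0, 0.0, 1.0]])

def object_to_camera(R, t, points_obj):
    """Map Nx3 points from the object frame to the camera frame."""
    return points_obj @ R.T + t

def camera_to_object(R, t, points_cam):
    """Inverse mapping: camera frame back to the object frame."""
    return (points_cam - t) @ R

# Assumed pose: object rotated 90 degrees about Z, 0.5 m in front of the camera.
R = rotation_about_z(np.pi / 2)
t = np.array([0.1, 0.0, 0.5])

# Hypothetical grasp point, 2 cm along the object's own X axis.
grasp_obj = np.array([[0.02, 0.0, 0.0]])
grasp_cam = object_to_camera(R, t, grasp_obj)
print(grasp_cam)
```

A further fixed transform from the camera frame to the robot base frame would be needed before the arm can act on this point.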

Pose Estimation

Open-Source Datasets

Retail-specific open-source datasets for 3D object pose estimation are not widely available.

We use datasets such as LINEMOD and YCB-Video throughout our experiments. These datasets cover only a few retail objects. We have therefore also captured an in-house dataset with three retail objects: a cereal box, a coffee mug, and a soap box. The dataset was captured with an Intel RealSense D435i camera and consists of images of individual objects as well as scenes containing multiple objects.

Dataset Collection – In-house dataset generation

Pose estimation for every 3D object requires datasets comprising both RGB and RGB-D images of the objects, with the corresponding ground truth values and transforms (rotation and translation). The common approach is to use multiple RGB-D sensors and high-resolution DSLR cameras. However, such a setup requires a lot of resources and time.

To generate effective ground truth values with a low-cost camera setup, we used ArUco marker tags. The tags are attached to the retail objects, and the corresponding rotation and translation matrices are calculated using the OpenCV methods for ArUco markers.
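
The sketch below shows one way a marker pose could be turned into an object ground-truth pose. OpenCV pose routines such as `cv2.solvePnP` return a Rodrigues rotation vector (`rvec`) and a translation vector (`tvec`); here the conversion to a rotation matrix is written out in NumPy so the example stays self-contained. The marker pose values and the marker-to-object offset are hypothetical, and the exact procedure used for the in-house dataset is not described in this post.

```python
import numpy as np

def rodrigues_to_matrix(rvec):
    """Convert an axis-angle (Rodrigues) vector to a 3x3 rotation matrix."""
    rvec = np.asarray(rvec, dtype=float).reshape(3)
    theta = np.linalg.norm(rvec)
    if theta < 1e-12:
        return np.eye(3)
    k = rvec / theta
    K = np.array([[0.0, -k[2], k[1]],
                  [k[2], 0.0, -k[0]],
                  [-k[1], k[0], 0.0]])
    return np.eye(3) + np.sin(theta) * K + (1.0 - np.cos(theta)) * (K @ K)

def to_homogeneous(R, t):
    """Pack a rotation and translation into a 4x4 transform."""
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T

# Assumed marker pose in the camera frame (stand-in for detector output).
rvec = np.array([0.0, 0.0, np.pi / 2])
tvec = np.array([0.0, 0.0, 0.6])
T_cam_marker = to_homogeneous(rodrigues_to_matrix(rvec), tvec)

# Hypothetical fixed offset from the marker to the object centre (metres).
T_marker_obj = to_homogeneous(np.eye(3), np.array([0.03, 0.0, -0.05]))

# Chain the transforms to get the object pose in the camera frame.
T_cam_obj = T_cam_marker @ T_marker_obj
R_gt, t_gt = T_cam_obj[:3, :3], T_cam_obj[:3, 3]
print(t_gt)
```

The marker-to-object offset only has to be measured once per object, after which every frame in which the marker is detected gives a ground-truth pose.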

Deep Learning Approach – Workflow and Results

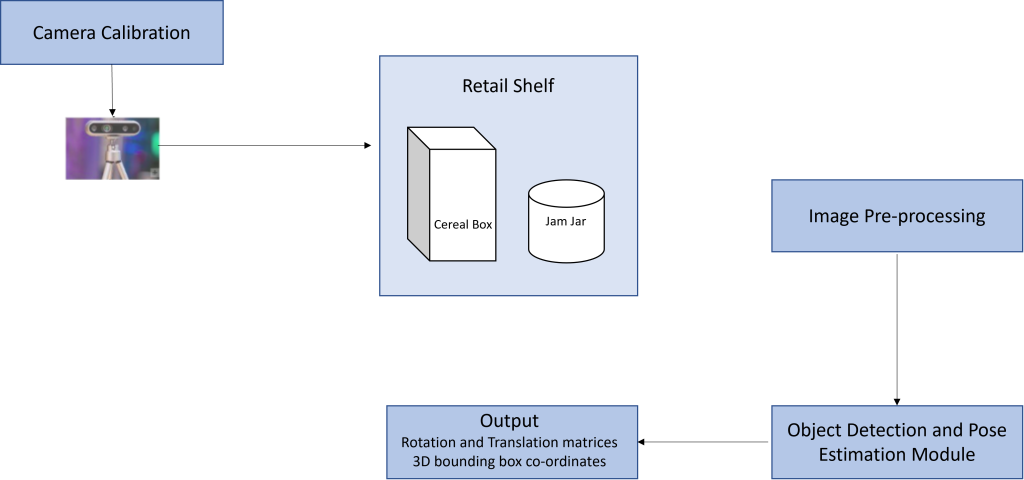

Pose estimation neural networks are broadly categorized as pose regressor networks and 2D-3D correspondence networks. We evaluate state-of-the-art pose estimation networks on different datasets. The basic workflow of our approach is shown in Fig 2.

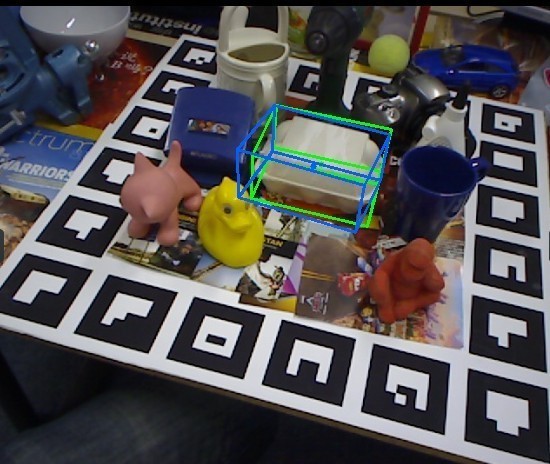

On the LINEMOD dataset, for a symmetric object (Egg box) and an asymmetric object (Driller), the measured Average Distance Difference (ADD) and translation errors are 0.6235 and 26.01 mm for the former, and 0.75 and 17.04 mm for the latter. Below are a few visualization results from our implemented model on the LINEMOD dataset.

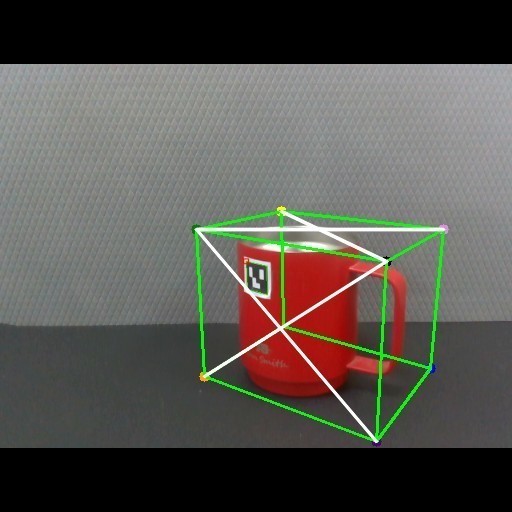

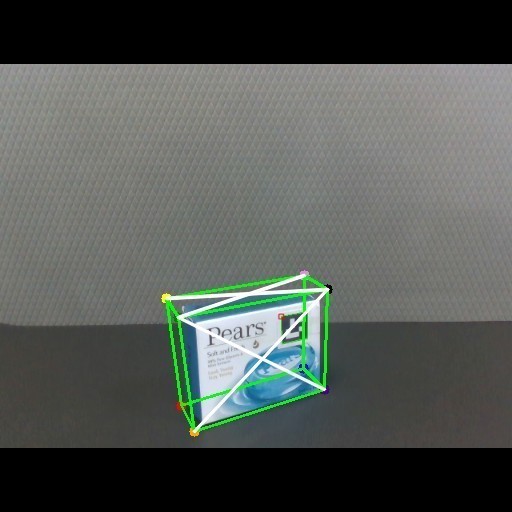

Fig 3: Predicted 3D bounding box (blue) and ground truth (green) for Egg box (left) and Driller (right) on the LINEMOD dataset
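
For readers unfamiliar with the metrics, the sketch below follows the commonly used definitions: ADD is the mean distance between model points transformed by the ground-truth pose and by the predicted pose, and the symmetric variant (often called ADD-S) uses the closest predicted point instead, which suits objects such as the Egg box. This post does not state how its reported values were normalized or thresholded, so the code is an illustration under those standard definitions rather than the exact evaluation used here. The cube model and the poses are made-up values.

```python
import numpy as np

def transform(points, R, t):
    """Apply a rigid transform to Nx3 points."""
    return points @ R.T + t

def add_metric(model_points, R_gt, t_gt, R_pred, t_pred):
    """Mean distance between corresponding transformed model points."""
    gt = transform(model_points, R_gt, t_gt)
    pred = transform(model_points, R_pred, t_pred)
    return float(np.linalg.norm(gt - pred, axis=1).mean())

def add_s_metric(model_points, R_gt, t_gt, R_pred, t_pred):
    """Symmetric variant: distance to the closest predicted point."""
    gt = transform(model_points, R_gt, t_gt)
    pred = transform(model_points, R_pred, t_pred)
    dists = np.linalg.norm(gt[:, None, :] - pred[None, :, :], axis=2)
    return float(dists.min(axis=1).mean())

def translation_error(t_gt, t_pred):
    """Euclidean distance between the two translation vectors."""
    return float(np.linalg.norm(np.asarray(t_gt) - np.asarray(t_pred)))

# Toy model: the 8 corners of a 10 cm cube (stand-in for a real mesh).
model = np.array([[x, y, z] for x in (-0.05, 0.05)
                  for y in (-0.05, 0.05) for z in (-0.05, 0.05)])

R_true, t_true = np.eye(3), np.array([0.0, 0.0, 0.5])
R_est, t_est = np.eye(3), np.array([0.003, 0.004, 0.5])

add_value = add_metric(model, R_true, t_true, R_est, t_est)
add_s_value = add_s_metric(model, R_true, t_true, R_est, t_est)
t_err = translation_error(t_true, t_est)
print(add_value, add_s_value, t_err)
```

A pose is often counted as correct when its ADD falls below a fraction of the object diameter, which turns the distance into an accuracy score.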

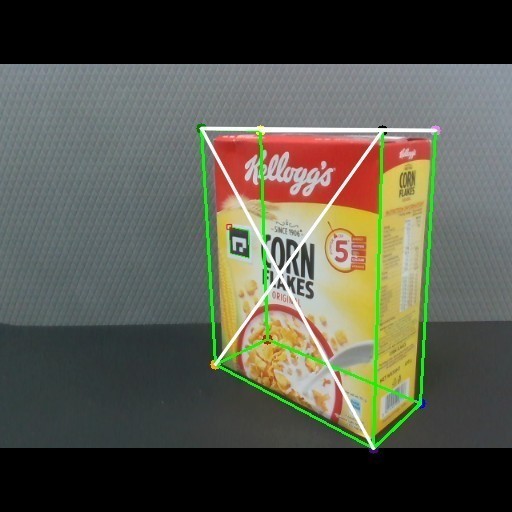

As proof of the generalizability of our deep learning models, we also present the visualization results on our in-house dataset.

Fig 4: Predicted 3D bounding box (in green) for the objects in our in-house dataset
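
Overlays like the ones in these figures can be produced by projecting the eight corners of an object's 3D bounding box into the image using the estimated pose. The sketch below shows that projection with a pinhole camera matrix; the box size, pose, and intrinsics are assumed values, and the drawing step itself is omitted.

```python
import numpy as np

def box_corners(size_xyz):
    """Return the 8 corners of an axis-aligned box centred on the origin."""
    half = np.asarray(size_xyz, dtype=float) / 2.0
    signs = np.array([[x, y, z] for x in (-1, 1)
                      for y in (-1, 1) for z in (-1, 1)], dtype=float)
    return signs * half

def project_points(points_obj, R, t, K):
    """Project Nx3 object-frame points to Nx2 pixel coordinates."""
    cam = points_obj @ R.T + t          # object frame -> camera frame
    uvw = cam @ K.T                     # apply the intrinsic matrix
    return uvw[:, :2] / uvw[:, 2:3]     # perspective divide

# Hypothetical intrinsics and pose (not taken from the in-house dataset).
K = np.array([[600.0, 0.0, 320.0],
              [0.0, 600.0, 240.0],
              [0.0, 0.0, 1.0]])
R_pred = np.eye(3)
t_pred = np.array([0.0, 0.0, 1.0])

corners_2d = project_points(box_corners([0.2, 0.1, 0.1]), R_pred, t_pred, K)
centre_2d = project_points(np.zeros((1, 3)), R_pred, t_pred, K)
print(corners_2d.round(1))
```

Connecting the projected corners with line segments along the box edges gives the wireframe overlay.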

With the ever-increasing range of SKUs (stock keeping units), the real-time dataset collection procedure has become extremely complicated.

With a data-centric AI approach, high-quality data can be generated and labelled artificially. This synthetic data can be used for further training and testing of the model, leading to better overall performance.

Accelerated mobile cobot deployment using digital twin and synthetic data

Any kind of simulation, whether of data or of a physical entity (the fulfillment center floor, in our case), is an inexpensive alternative to real-world procedures. Synthetic data is a simulation of real-world data for AI models, while a digital twin is a cost-effective simulation of physical space, people, and processes. Used together, synthetic data and a digital twin accelerate the validation of robotic systems and eventually enable predictive maintenance.

Below, we briefly describe some current market-disrupting platforms that help resolve cross-domain (synthetic and real-time) problems.

AWS (Amazon Web Services) Platform and 3D Excite

Dassault Systèmes 3D Excite renders 3D images of objects, and Amazon SageMaker annotates the data. The newly generated dataset is then stored in Amazon Simple Storage Service (Amazon S3). Finally, Amazon Rekognition Custom Labels imports the training dataset from storage and facilitates testing of the model with real-time images [1].

Unity3D

Effective synthetic dataset generation involves automatic scene generation with variations in lighting, object hue, blur, and noise. The Unity Perception package helps handle object placement and orientation, and generates the desired scenes with these variations and different background environments [2].
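
The idea of varying lighting, blur, and noise can be illustrated outside Unity with a few lines of NumPy. The sketch below is not the Unity Perception API; it is a simplified stand-in that samples random parameters and applies them to an image array. The function names and parameter ranges are assumptions, and hue variation is left out for brevity.

```python
import numpy as np

def box_blur(image, radius):
    """Blur an HxWx3 float image with a (2*radius+1) square mean filter."""
    if radius == 0:
        return image
    padded = np.pad(image, ((radius, radius), (radius, radius), (0, 0)),
                    mode="edge")
    size = 2 * radius + 1
    h, w = image.shape[:2]
    out = np.zeros_like(image, dtype=float)
    for dy in range(size):
        for dx in range(size):
            out += padded[dy:dy + h, dx:dx + w]
    return out / (size * size)

def randomize(image, rng):
    """Apply randomly sampled lighting gain, blur, and sensor noise."""
    params = {
        "gain": rng.uniform(0.6, 1.4),          # lighting variation
        "blur_radius": int(rng.integers(0, 3)), # 0, 1 or 2 pixels
        "noise_std": rng.uniform(0.0, 10.0),    # Gaussian noise level
    }
    img = image.astype(float) * params["gain"]
    img = box_blur(img, params["blur_radius"])
    img = img + rng.normal(0.0, params["noise_std"], img.shape)
    return np.clip(img, 0, 255).astype(np.uint8), params

rng = np.random.default_rng(0)
base = np.full((32, 32, 3), 128, dtype=np.uint8)  # placeholder render
variants = [randomize(base, rng) for _ in range(4)]
for _, p in variants:
    print(p)
```

Recording the sampled parameters alongside each image makes it possible to check later which variations the model struggles with.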

Conclusion

As cobots are touted as the next wave in warehouse automation, computer vision is becoming a necessary technology in mobile cobots for automating tasks such as pick and stow, packaging, and many others in warehouses that would otherwise not have been possible.

With the advent of mobile cobots in retail fulfillment centers, demand is growing for expertise that combines robotics and AI (Artificial Intelligence).

With our expertise in 3D computer vision, mobile cobot navigation and manipulation, and synthetic dataset generation, we continue to work alongside robotics companies to adapt cobots for different use cases.

References

[4] Manipulator Image source: https://www.mobile-industrial-robots.com/mirgo/robot-arms/

[7] https://standard.ai/blog/standard-sim-a-synthetic-dataset-for-retail-environments/