- Swathi G

- December 7, 2022

Deep Learning-Based Wall Crack Detection

1. Introduction

Cracks are a cause for concern for the safety, durability and structural integrity of buildings. As cracks develop and propagate, they reduce the effective load-bearing area. This increases the stress on the remaining material and can eventually lead to the failure of concrete structures. Cracks can also act as conduits through which harmful and corrosive agents penetrate the structure, further damaging its physical integrity as well as its appearance.

Crack detection on buildings is currently a largely manual process. Because large surface areas have to be visually inspected, it is highly inefficient in terms of time and cost. In addition, the accuracy of defect detection depends heavily on the subjective judgement of the human inspector.

Ignitarium’s TYQ-i® platform enables automated analysis of anomalies in a wide range of infrastructure assets using image processing techniques and deep learning algorithms. In this article, we describe one of the platform's library components, which is used to detect cracks on the surfaces of walls and other building structures.

The approach taken for the wall crack detection use case is described in the following sections:

- Dataset preparation

- Labeling

- Image processing and AI model selection

2. Dataset Preparation

The first step in the development process is to collect data relevant to the problem at hand. A large corpus of images was collected, covering the following categories:

- Negative images (wall surfaces without cracks)

- Positive images (wall surfaces with cracks)

- Images from various angles

- Images with different lighting conditions

- Partially occluded wall surfaces

- Discontinuous / irregular wall surfaces

- Varying crack types (length, width and shape of cracks)

The images were organized into structured data folders in line with the TYQ-i development flow.

3. Labeling

Any AI-based solution requires accurate labeling. We used our custom-modified version of the LabelMe tool, together with our internal annotation pipeline, to generate a set of cleanly segregated label folders, each containing high-quality label crops for the various categories of canvas (background) and artefact (cracks).
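Training a segmentation network requires per-pixel mask images. As a rough illustration of how polygon annotations can become such a mask, the sketch below reads the standard LabelMe JSON fields (`imageHeight`, `imageWidth`, `shapes`, and each shape's `label`, `points` and `shape_type`) and rasterises each polygon with an even-odd point-in-polygon test on pixel centres. It is not the TYQ-i annotation pipeline: the label names `wall` and `crack`, their class indices and the helper names are hypothetical, and Ignitarium's modified LabelMe tool may store annotations differently.

```python
import json

import numpy as np

# Hypothetical label names and class indices; 0 is the canvas (background).
CLASS_IDS = {"wall": 1, "crack": 2}


def polygon_to_mask(points, height, width):
    """Rasterise one polygon ([[x, y], ...]) using the even-odd rule on pixel centres."""
    ys, xs = np.mgrid[0:height, 0:width]
    px, py = xs + 0.5, ys + 0.5  # pixel centres
    inside = np.zeros((height, width), dtype=bool)
    pts = np.asarray(points, dtype=float)
    for i in range(len(pts)):
        (x1, y1), (x2, y2) = pts[i], pts[(i + 1) % len(pts)]
        if y1 == y2:
            continue  # horizontal edges never cross a horizontal ray
        # Pixels whose rightward ray crosses this edge flip their inside/outside state.
        crosses = (y1 > py) != (y2 > py)
        x_at_y = x1 + (py - y1) * (x2 - x1) / (y2 - y1)
        inside ^= crosses & (px < x_at_y)
    return inside


def labelme_to_mask(annotation):
    """Convert a parsed LabelMe annotation dict into an (H, W) uint8 class mask."""
    height, width = annotation["imageHeight"], annotation["imageWidth"]
    mask = np.zeros((height, width), dtype=np.uint8)
    # Paint walls before cracks so that a crack overwrites the wall it lies on.
    for name in ("wall", "crack"):
        for shape in annotation["shapes"]:
            # Treat a missing shape_type as a polygon.
            if shape["label"] == name and shape.get("shape_type", "polygon") == "polygon":
                mask[polygon_to_mask(shape["points"], height, width)] = CLASS_IDS[name]
    return mask


def load_labelme_mask(path):
    """Read a LabelMe JSON file from disk and return its class mask."""
    with open(path, encoding="utf-8") as f:
        return labelme_to_mask(json.load(f))
```

For a 4 x 6 image whose wall polygon covers the whole frame and whose crack polygon is the rectangle from (2, 1) to (3, 3), the resulting mask holds class 2 in rows 1 and 2 of column 2 and class 1 everywhere else.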

4. Image Processing and AI Model Application

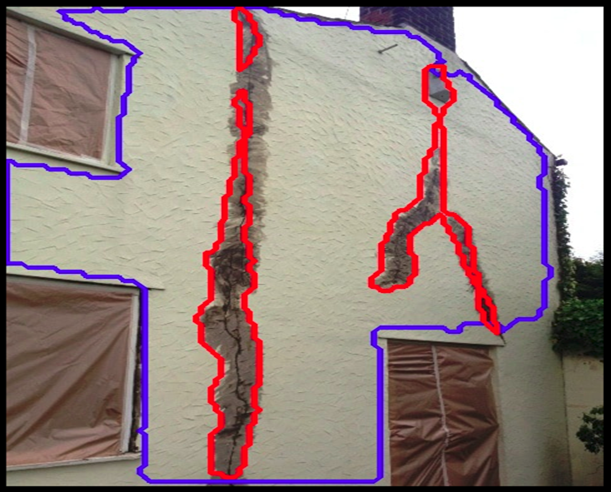

The front end of the software is an image processing pipeline that transforms the input image into a format the AI model can ingest. Crack detection was framed as a segmentation problem, and the segmentation network was trained on the mask images produced by the in-house labeling application. Both the wall detector and the crack detector were trained using a modified MobileNet-UNET architecture. Concrete walls in the frame are detected first, and cracks are then detected within those wall regions. In our AI pipeline, this is expressed by treating each wall detection as a parent contour and each crack as a child contour.

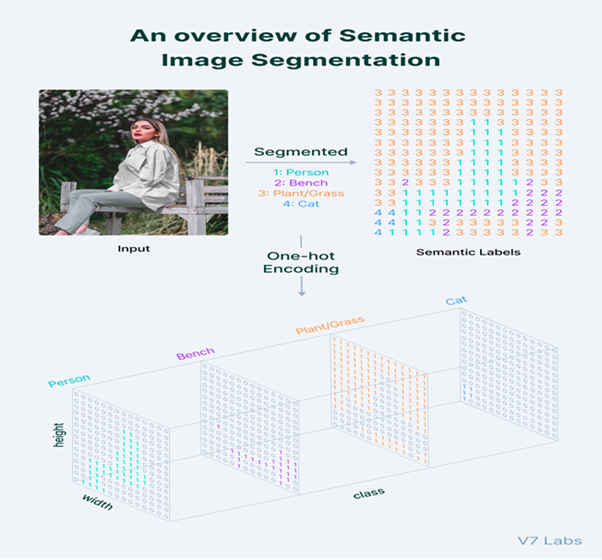
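The parent/child relationship between wall and crack detections can be illustrated with two binary masks, one per detector. The sketch below is a hypothetical simplification, not the TYQ-i implementation: instead of contour trees, it labels 4-connected regions in each mask with a breadth-first flood fill, assigns each crack region to the wall region it overlaps most, and drops cracks that lie outside every wall. The function names are illustrative; in practice a library routine such as `scipy.ndimage.label` or OpenCV's `cv2.connectedComponents` would normally replace the hand-written labelling.

```python
from collections import deque

import numpy as np


def label_components(mask):
    """Label 4-connected foreground regions (1, 2, ...) in raster-scan order; 0 is background."""
    mask = np.asarray(mask, dtype=bool)
    labels = np.zeros(mask.shape, dtype=np.int32)
    height, width = mask.shape
    count = 0
    for r in range(height):
        for c in range(width):
            if not mask[r, c] or labels[r, c]:
                continue
            count += 1
            labels[r, c] = count
            queue = deque([(r, c)])
            while queue:  # breadth-first flood fill of one region
                y, x = queue.popleft()
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if 0 <= ny < height and 0 <= nx < width and mask[ny, nx] and not labels[ny, nx]:
                        labels[ny, nx] = count
                        queue.append((ny, nx))
    return labels, count


def build_hierarchy(wall_mask, crack_mask):
    """Map each wall region id (parent) to the list of crack region ids (children) it contains.

    A crack is assigned to the wall region it overlaps most; cracks that overlap
    no wall are dropped.
    """
    wall_labels, n_walls = label_components(wall_mask)
    crack_labels, n_cracks = label_components(crack_mask)
    hierarchy = {wall_id: [] for wall_id in range(1, n_walls + 1)}
    for crack_id in range(1, n_cracks + 1):
        overlap = wall_labels[crack_labels == crack_id]
        overlap = overlap[overlap > 0]
        if overlap.size == 0:
            continue  # no parent wall, so this crack is dropped
        parent = int(np.bincount(overlap).argmax())
        hierarchy[parent].append(crack_id)
    return hierarchy
```

For example, with two wall strips (columns 0 to 3 and 6 to 9 of a 6 x 10 mask), one crack inside each strip and a third crack pixel in the gap at column 5, `build_hierarchy` returns `{1: [1], 2: [2]}`: each wall keeps its own crack, and the stray detection is discarded.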

4.1 Semantic Segmentation

Semantic segmentation can be understood as the last of three progressively finer image-understanding tasks:

- Classification: identifying which object is present in the image.

- Localization: finding the object and drawing a bounding box around it.

- Segmentation: grouping the pixels that belong to the object into a segmentation mask.

Semantic segmentation assigns a class label to every pixel in an image, so that the regions belonging to one class (for example, cracks) are separated from the other classes and marked with a segmentation mask. In other words, it is classification at the pixel level.
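Pixel-level classification can be made concrete with a segmentation network's raw output, which is typically a score per class for every pixel. The minimal sketch below turns an (H, W, C) array of such scores into a class map by taking the most probable class at each pixel, plus a per-pixel confidence. The class order `background`, `wall`, `crack` is a hypothetical choice for illustration, not necessarily the order used in the TYQ-i models.

```python
import numpy as np

# Hypothetical class order for a wall/crack segmentation model.
CLASS_NAMES = ("background", "wall", "crack")


def softmax(logits):
    """Numerically stable softmax over the last (class) axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)


def logits_to_mask(logits):
    """Turn (H, W, C) per-pixel class scores into an (H, W) class map and a confidence map."""
    probs = softmax(logits)
    return probs.argmax(axis=-1), probs.max(axis=-1)


logits = np.array([[[2.0, 0.1, 0.3], [0.0, 1.0, 3.0]],
                   [[0.2, 2.5, 0.1], [1.0, 0.5, 0.2]]])
mask, confidence = logits_to_mask(logits)
```

Here `mask` is `[[0, 2], [1, 0]]`, i.e. background and crack in the first row, wall and background in the second. Taking the argmax of the raw logits gives the same class map, because softmax preserves ordering; the softmax step is only needed when a calibrated confidence per pixel is wanted.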

4.2 MobileNet-UNET

We used the MobileNet-UNET meta-architecture in our pipeline. It is built from depth-wise separable convolutions, which factorise a standard convolution into a per-channel spatial (depth-wise) convolution followed by a 1 x 1 (point-wise) convolution that mixes the channels; this allows a lightweight Convolutional Neural Network to be built. Depth-wise separable convolution is widely used in real-time tasks for two reasons: it has fewer parameters to learn than a classical convolution layer, which reduces the risk of over-fitting, and it is computationally less intensive, which makes it well suited to high-speed vision applications.
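The parameter saving follows directly from the factorisation. The sketch below counts the weights of a standard k x k convolution and of its depth-wise separable counterpart (biases ignored), and implements a naive NumPy forward pass of a depth-wise separable layer with stride 1 and no padding. It illustrates the technique in general and is not code from the MobileNet-UNET model used in our pipeline.

```python
import numpy as np


def standard_conv_params(k, c_in, c_out):
    """Weights in a standard k x k convolution: one k x k x c_in filter per output channel."""
    return k * k * c_in * c_out


def separable_conv_params(k, c_in, c_out):
    """Weights in a depth-wise (k x k per input channel) plus point-wise (1 x 1) convolution."""
    return k * k * c_in + c_in * c_out


def depthwise_separable_conv(x, depthwise, pointwise):
    """x: (H, W, C_in); depthwise: (k, k, C_in); pointwise: (C_in, C_out).

    Stride 1, 'valid' padding, no bias; returns (H - k + 1, W - k + 1, C_out).
    """
    k = depthwise.shape[0]
    height, width, c_in = x.shape
    out_h, out_w = height - k + 1, width - k + 1
    # Depth-wise step: each input channel is filtered by its own k x k kernel.
    dw = np.zeros((out_h, out_w, c_in))
    for i in range(k):
        for j in range(k):
            dw += x[i:i + out_h, j:j + out_w, :] * depthwise[i, j, :]
    # Point-wise step: a 1 x 1 convolution is a matrix product over the channel axis.
    return dw @ pointwise


print(standard_conv_params(3, 32, 64))   # 18432
print(separable_conv_params(3, 32, 64))  # 2336
```

For a 3 x 3 layer mapping 32 to 64 channels, that is 18,432 versus 2,336 weights, roughly 7.9 times fewer. Because both layer types apply their weights once per output pixel, the number of multiply-accumulate operations shrinks by the same ratio, which is why the factorisation suits high-speed vision applications.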

5. Accuracy

The overall software pipeline achieved an accuracy of 97.5%.
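The accuracy metric behind this figure is not specified here. For reference only, the sketch below shows two common ways to score a predicted class mask against a ground-truth mask: pixel accuracy and per-class intersection over union (IoU). Because cracks usually cover a small fraction of an image, IoU on the crack class is a stricter check than pixel accuracy; for instance, predicting background everywhere on an image in which 2% of the pixels are cracks still scores 98% pixel accuracy. The function names are illustrative.

```python
import numpy as np


def pixel_accuracy(pred, target):
    """Fraction of pixels whose predicted class matches the ground truth."""
    return float(np.mean(pred == target))


def class_iou(pred, target, class_id):
    """Intersection over union for one class; NaN if the class is absent from both masks."""
    p, t = pred == class_id, target == class_id
    union = np.logical_or(p, t).sum()
    if union == 0:
        return float("nan")
    return float(np.logical_and(p, t).sum() / union)


target = np.array([[0, 0, 2, 2], [0, 1, 1, 2]])
pred = np.array([[0, 0, 2, 0], [0, 1, 1, 2]])
```

With these masks, pixel accuracy is 7/8 = 0.875, while the IoU of the crack class (class 2) is 2/3, because one of the three crack pixels was missed.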

6. Results

The images below show the output of the combined image processing and AI pipeline after inference. The blue outline marks the wall (the parent contour), while the red outlines mark the detected cracks. Note that the solution handles plain walls as well as walls with various kinds of discontinuities (e.g., windows and doors).
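Outlines like these can be drawn directly from the wall and crack masks. The sketch below marks the boundary pixels of each mask (foreground pixels with at least one 4-neighbour outside the mask) and paints them blue for walls and red for cracks on a copy of the image. It assumes RGB channel order (OpenCV images are BGR, so the colour tuples would need swapping) and one-pixel-thick outlines; it is an illustration, not the TYQ-i rendering code.

```python
import numpy as np

BLUE = (0, 0, 255)  # RGB
RED = (255, 0, 0)   # RGB


def mask_boundary(mask):
    """Foreground pixels that have at least one 4-neighbour outside the mask."""
    mask = np.asarray(mask, dtype=bool)
    padded = np.pad(mask, 1, constant_values=False)
    # A pixel is interior if its up, down, left and right neighbours are all foreground.
    interior = (padded[:-2, 1:-1] & padded[2:, 1:-1]
                & padded[1:-1, :-2] & padded[1:-1, 2:])
    return mask & ~interior


def draw_outlines(image, wall_mask, crack_mask):
    """Return a copy of an (H, W, 3) image with wall and crack outlines painted on."""
    out = image.copy()
    out[mask_boundary(wall_mask)] = BLUE
    out[mask_boundary(crack_mask)] = RED  # drawn last so cracks stay visible on the wall edge
    return out
```

For a 5 x 5 wall mask that fills the frame, the outline is the 16-pixel outer ring; a single-pixel crack is its own outline.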

7. Conclusion

The task of wall crack detection was solved using customized detectors that form part of the TYQ-i defect detection platform. The same software component can also be used to address numerous related use cases, such as defect detection on bridges and tunnels.
