- Nirmal K A

- May 11, 2023

Perceiving the World in-’Depth’ with 3D LiDAR

1. Introduction

Simultaneous Localization and Mapping (SLAM) is a technology used in robotics and autonomous systems to build a map of an unknown environment while simultaneously tracking the robot’s location within it. SLAM has revolutionized robotics, enabling robots to operate autonomously in complex and dynamic environments where accurate mapping and positioning are critical. With advances in sensor technology and machine learning algorithms, the capabilities of SLAM have grown significantly in recent years, leading to new applications and use cases.

One of the latest and most exciting developments in SLAM technology is the use of 3D LiDAR sensors. Unlike 2D LiDAR sensors, which only provide information about the distance and angle of objects in a plane, 3D LiDAR sensors can capture the full three-dimensional structure of an environment. This offers significant advantages in SLAM applications, allowing for more accurate and detailed maps of the environment, as well as improved localization and tracking capabilities. Additionally, 3D LiDAR SLAM can better handle dynamic environments, where changes in the environment occur frequently, and traditional 2D LiDAR SLAM may struggle to keep up. The use of 3D LiDAR sensors has opened new possibilities for robotics and autonomous systems, enabling them to operate in more complex and dynamic environments with greater accuracy and efficiency.

While 3D LiDAR SLAM can solve many issues in mapping and localization, it comes at a cost: the computation needed to run it in real time. The added accuracy and performance of 3D SLAM algorithms demand much more computation, which is a very important factor in designing a robotic system. Considerable research has been, and is still being, carried out to make LiDAR SLAM more effective and computationally efficient.

This blog gives a general overview of 3D LiDAR SLAM, compares the performance of two popular LiDAR SLAM algorithms, and looks at trends in SLAM driven by advances in modern technology and solutions.

2. 3D LiDAR SLAM: Basic Steps

The basic pipeline for 3D LiDAR SLAM involves the following steps:

Data Acquisition: In this step, the 3D LiDAR sensor is used to obtain a 3D point cloud representation of the environment.

Preprocessing: The raw point cloud data obtained from the LiDAR sensor is preprocessed to remove noise and outliers. The preprocessing step involves filtering, segmentation, and registration.

Feature Extraction: The preprocessed point cloud data is then used to extract features that can be used to estimate the robot’s pose and map the environment. The features can be extracted using various techniques such as key point detection, edge detection, and surface detection. This is an optional step since there are algorithms that utilize the whole point cloud information to create better maps at the expense of high computational costs.

SLAM Algorithm: The extracted features are then used by the SLAM algorithm to estimate the robot’s trajectory and map the environment. The SLAM algorithm uses various techniques such as pose graph optimization, loop closure detection, and bundle adjustment.

Map Optimization: The estimated map is then optimized to improve its accuracy and consistency.

Localization: The optimized map is then used to localize the robot in the environment.

The steps above outline the overall process of 3D LiDAR SLAM rather than a complete pipeline. Each 3D LiDAR SLAM algorithm implements these steps in its own way and may contribute to all or only some of the blocks of this generic pipeline.
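As an illustration of the preprocessing step, the sketch below applies two common filters to a raw point cloud: a range filter that drops returns too close to or too far from the sensor, and a voxel-grid downsample that replaces the points in each voxel with their centroid. The function names, the range limits, and the voxel size are assumptions chosen for this example, not part of any particular SLAM package.

```python
import numpy as np


def range_filter(points: np.ndarray, min_range: float, max_range: float) -> np.ndarray:
    """Keep only points whose distance from the sensor origin is within limits."""
    r = np.linalg.norm(points, axis=1)
    return points[(r >= min_range) & (r <= max_range)]


def voxel_downsample(points: np.ndarray, voxel_size: float) -> np.ndarray:
    """Replace all points that fall in the same voxel with their centroid."""
    # Integer voxel index of every point.
    voxel_idx = np.floor(points / voxel_size).astype(np.int64)
    # Group points that share a voxel index.
    _, inverse, counts = np.unique(
        voxel_idx, axis=0, return_inverse=True, return_counts=True
    )
    inverse = inverse.reshape(-1)  # flat group index per point
    sums = np.zeros((counts.size, 3))
    np.add.at(sums, inverse, points)  # accumulate point coordinates per voxel
    return sums / counts[:, None]


# Hypothetical four-point cloud (metres): two nearby points, one further
# away, and one spurious far return.
cloud = np.array([
    [0.1, 0.1, 0.1],
    [0.2, 0.2, 0.2],
    [1.5, 0.0, 0.0],
    [100.0, 0.0, 0.0],
])
filtered = voxel_downsample(range_filter(cloud, 0.1, 50.0), voxel_size=1.0)
print(filtered)
```

Here the far return is removed by the range filter and the two nearby points collapse into a single centroid, so fewer points reach the later, more expensive stages.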

3. 3D LiDAR SLAM: Growing Trends and Applications

Although SLAM primarily targets robotics and autonomous vehicles, the trend is changing, and application areas such as 3D mapping and digital twin creation are now coming into the spotlight. The algorithms are also improving over time.

It is therefore best to select an algorithm for a particular application by understanding both the algorithm and the target environment.

Almost all graph-based 3D LiDAR SLAM algorithms include a process called scan matching, or point cloud registration. Laser scan matching is how laser SLAM associates data between scans; without it, neither odometry estimation nor mapping can work. It is defined as finding the set of translation and rotation parameters that aligns two scan frames with maximum overlap. Laser scan matching is divided into three categories:

- Point-based scan matching

- Feature-based scan matching

- Scan matching based on mathematical characteristics
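The definition of scan matching given above (a rotation and translation that bring two scans into maximum overlap) is often written as a least-squares problem. The notation below is an assumption introduced for illustration: $p_i$ are the points of the new scan, $q_{c(i)}$ is the point (or feature, or distribution) in the reference scan that $p_i$ is matched to, and $R$ and $t$ are the rotation and translation being estimated.

$$
(R^{*}, t^{*}) = \arg\min_{R \in SO(3),\; t \in \mathbb{R}^{3}} \sum_{i=1}^{N} \left\lVert R\,p_i + t - q_{c(i)} \right\rVert^{2}
$$

The three categories differ mainly in what is matched, that is, how the correspondence $c(i)$ and the residual are defined, rather than in this overall goal.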

Point-Based Scan Matching

Point-based scan matching, also known as scan-to-scan matching, is an important technique in 3D LiDAR SLAM because it lets the algorithm estimate the robot’s pose and map the environment in real time using the raw point cloud data from the LiDAR sensor. However, it is computationally expensive and can be sensitive to noise and measurement errors in the point cloud data, which makes it challenging to implement in real-world applications.
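A classic example of point-based scan matching is the Iterative Closest Point (ICP) algorithm. The sketch below is a minimal point-to-point ICP, assuming a small initial misalignment and using a brute-force nearest-neighbour search; it is not the method used by any specific SLAM package, and the function names and test data are made up for this example. The all-pairs distance computation also hints at why point-based matching is computationally expensive.

```python
import numpy as np


def best_rigid_transform(src: np.ndarray, dst: np.ndarray):
    """Least-squares R, t such that R @ src_i + t ~ dst_i (SVD / Kabsch)."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)  # 3x3 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so that R is a rotation, not a reflection.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = dst_c - R @ src_c
    return R, t


def icp(source: np.ndarray, target: np.ndarray, iterations: int = 30, tol: float = 1e-9):
    """Estimate R, t aligning `source` to `target` by point-to-point ICP."""
    R_total, t_total = np.eye(3), np.zeros(3)
    current = source.copy()
    prev_err = np.inf
    for _ in range(iterations):
        # Brute-force nearest neighbour: squared distance between all pairs.
        d2 = ((current[:, None, :] - target[None, :, :]) ** 2).sum(axis=2)
        nn = d2.argmin(axis=1)
        err = d2[np.arange(len(current)), nn].mean()
        # Best rigid motion for the current correspondences, then apply it.
        R, t = best_rigid_transform(current, target[nn])
        current = current @ R.T + t
        R_total, t_total = R @ R_total, R @ t_total + t
        if abs(prev_err - err) < tol:
            break
        prev_err = err
    return R_total, t_total


# Synthetic test: the target is the source moved by a small known motion.
rng = np.random.default_rng(0)
source = rng.uniform(-0.5, 0.5, size=(80, 3))
angle = np.deg2rad(2.0)
R_true = np.array([
    [np.cos(angle), -np.sin(angle), 0.0],
    [np.sin(angle), np.cos(angle), 0.0],
    [0.0, 0.0, 1.0],
])
t_true = np.array([0.02, -0.01, 0.015])
target = source @ R_true.T + t_true

R_est, t_est = icp(source, target)
print(np.round(R_est, 4), np.round(t_est, 4))
```

Practical implementations usually replace the brute-force search with a k-d tree or a similar spatial index and reject outlier correspondences.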

Feature-Based Scan Matching

Feature-based scan matching methods are typically based on features such as normals and curvature, as well as custom feature descriptors. One example is the HSM (Hough Scan Matching) method, which uses the Hough transform to extract line segment features and matches them in the Hough domain. Another is the LOAM (LiDAR Odometry and Mapping) algorithm and its improved variants, which realize LiDAR odometry by matching feature points to edge segments and planes and have achieved accurate results in various scenarios.

These algorithms are generally faster than point-based scan matching algorithms, since they work on the extracted features (feature-to-feature correspondence) rather than on the full point cloud.
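To make the idea of edge and plane features concrete, the sketch below computes a LOAM-style smoothness value for each point along one ordered scan line and picks the sharpest points as edge candidates and the flattest as planar candidates. It is a simplified illustration: the window size, the number of features, and the synthetic corner scene are assumptions, and real implementations add further checks (for example for occluded or nearly parallel surfaces).

```python
import numpy as np


def smoothness(scan_line: np.ndarray, half_window: int = 5) -> np.ndarray:
    """Per-point smoothness c; large c suggests an edge, small c a flat surface."""
    n = len(scan_line)
    c = np.full(n, np.nan)  # points too close to the ends stay undefined
    for i in range(half_window, n - half_window):
        window = scan_line[i - half_window : i + half_window + 1]
        # Sum over the neighbours j of (X_i - X_j); the centre term is zero.
        diff = len(window) * scan_line[i] - window.sum(axis=0)
        c[i] = np.linalg.norm(diff) / (
            (len(window) - 1) * np.linalg.norm(scan_line[i])
        )
    return c


def select_features(scan_line: np.ndarray, n_edges: int = 2, n_planes: int = 4,
                    half_window: int = 5):
    """Return indices of edge candidates and planar candidates."""
    c = smoothness(scan_line, half_window)
    valid = np.flatnonzero(~np.isnan(c))
    order = valid[np.argsort(c[valid])]  # ascending smoothness
    planes = order[:n_planes]
    edges = order[-n_edges:][::-1]  # sharpest first
    return edges, planes


# Synthetic scan line: two walls meeting at a corner at (1, 2, 0), sensor at origin.
wall_a = np.column_stack([np.linspace(-2.0, 1.0, 21), np.full(21, 2.0), np.zeros(21)])
wall_b = np.column_stack([np.full(20, 1.0), np.linspace(1.85, -1.0, 20), np.zeros(20)])
scan = np.vstack([wall_a, wall_b])  # the corner is the point at index 20

edges, planes = select_features(scan)
print("edge candidates:", edges, "planar candidates:", planes)
```

Only these few selected points per scan line then take part in matching, which is where the speed advantage over full point cloud matching comes from.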

Scan Matching Based on Mathematical Characteristics

In addition to point-based and feature-based scan matching, there is a large class of scan matching methods that use mathematical properties to characterize the scan data and the pose change between frames. The best known of these are based on the Normal Distributions Transform (NDT) and its improved versions.

The main differences between point-based and feature-based algorithms are the following:

- Point-based algorithms can produce much denser maps than feature-based methods, since feature-based SLAM discards all points other than the feature points (lines, curves, normals, surfaces/planes, etc.).

- Point-based algorithms function better on sparse point clouds, which makes them a better choice for large-scale mapping, such as in outdoor environments. Feature-based algorithms fail or perform worse here because fewer continuous, long-term visible features are available in the scans.

- Feature-based algorithms are fast and mostly run in real time. Their computational cost is also lower than that of point-based methods.

- Feature-based methods can work nearly as well as point-based methods in well-defined environments such as indoors. Combined with their low computational requirements and real-time capability, this makes them a better candidate than point-based methods for such applications.
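The core idea of NDT can be sketched in a few lines: the reference scan is divided into cells, each cell is summarized by the mean and covariance of its points, and a candidate pose is scored by how well the transformed points of the new scan fit those Gaussians. The example below only evaluates this score for two candidate poses; a full NDT implementation would also optimize the pose (typically with Newton's method). The function names, cell size, regularization term, and synthetic floor data are assumptions for this illustration.

```python
import numpy as np


def build_ndt_cells(target: np.ndarray, cell_size: float, min_points: int = 5) -> dict:
    """Summarize each occupied cell by (mean, inverse covariance)."""
    keys = np.floor(target / cell_size).astype(int)
    cells = {}
    for key in set(map(tuple, keys)):
        pts = target[(keys == key).all(axis=1)]
        if len(pts) >= min_points:
            mean = pts.mean(axis=0)
            # Small diagonal term keeps the covariance invertible for flat cells.
            cov = np.cov(pts.T) + 1e-3 * np.eye(3)
            cells[key] = (mean, np.linalg.inv(cov))
    return cells


def ndt_score(source: np.ndarray, cells: dict, cell_size: float,
              R: np.ndarray, t: np.ndarray) -> float:
    """Sum of Gaussian likelihood terms of the transformed source points."""
    moved = source @ R.T + t
    score = 0.0
    for p in moved:
        key = tuple(np.floor(p / cell_size).astype(int))
        if key in cells:
            mean, cov_inv = cells[key]
            d = p - mean
            score += float(np.exp(-0.5 * d @ cov_inv @ d))
    return score


# Synthetic reference scan: a slightly noisy floor patch at z = 0.5 m.
rng = np.random.default_rng(1)
floor = np.column_stack([
    rng.uniform(0.0, 4.0, 400),
    rng.uniform(0.0, 4.0, 400),
    0.5 + 0.01 * rng.standard_normal(400),
])
cells = build_ndt_cells(floor, cell_size=1.0)

score_aligned = ndt_score(floor, cells, 1.0, np.eye(3), np.zeros(3))
score_shifted = ndt_score(floor, cells, 1.0, np.eye(3), np.array([0.0, 0.0, 0.3]))
print(score_aligned, score_shifted)
```

The correctly aligned pose scores far higher than the pose shifted 0.3 m off the floor, and that difference is the signal an NDT optimizer follows.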

The following section compares the performance of two popular LiDAR SLAM algorithms: FAST-LIO2, a point-based mapping algorithm, and A-LOAM, a feature-based mapping algorithm.

3. SLAM Algorithm Comparison

A-LOAM

A-LOAM (an advanced implementation of LOAM) is a LiDAR-based SLAM algorithm that uses 3D point cloud data from a LiDAR sensor to estimate the pose (position and orientation) of a robot in an environment and simultaneously build a map of it. A-LOAM builds upon LOAM (LiDAR Odometry and Mapping), a widely used LiDAR-based SLAM algorithm.

A-LOAM uses a feature-based approach for point cloud registration and feature extraction. It extracts features such as planes, edges, and corners from the point cloud data, and uses them to estimate the motion of the robot and the relative pose between consecutive LiDAR scans.

FAST-LIO2

FAST-LIO (Fast LiDAR-Inertial Odometry) is a computationally efficient and robust LiDAR-inertial odometry package. It fuses LiDAR feature points with IMU data using a tightly coupled iterated extended Kalman filter to allow robust navigation in fast-motion, noisy, or cluttered environments where degeneration occurs. FAST-LIO2 computes odometry directly by registering raw LiDAR points to the map (scan-to-map matching), achieving better accuracy. FAST-LIO2 also supports many types of LiDAR, including spinning (Velodyne, Ouster) and solid-state (Livox Avia, Horizon, MID-70) sensors, and can easily be extended to support more, since it does not require feature extraction.

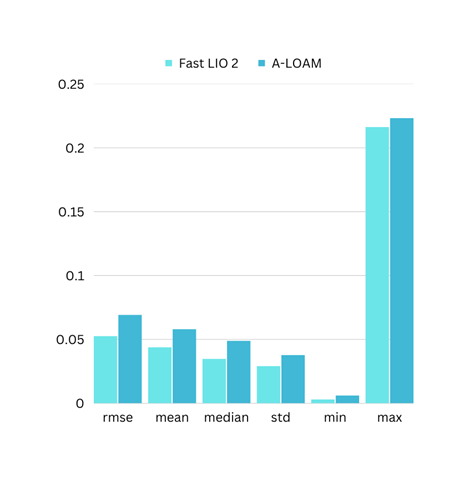

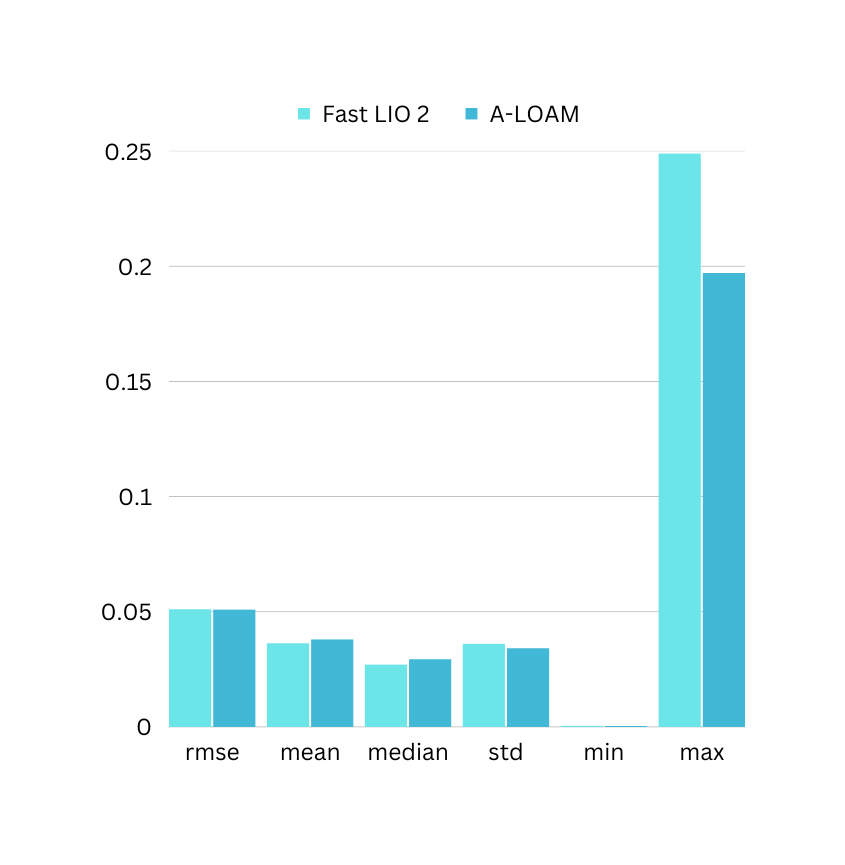

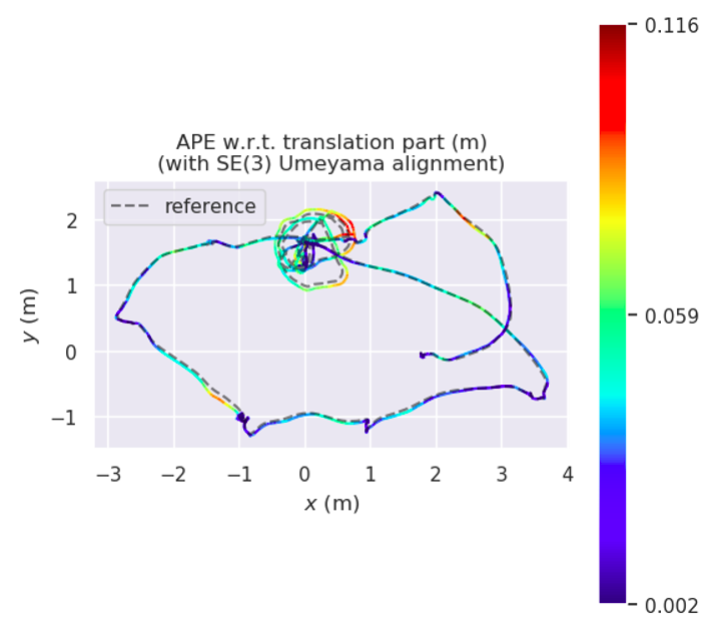

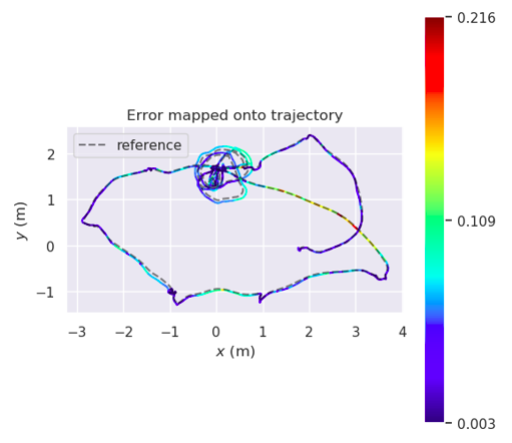

The following are the comparison results of the two algorithms on the Hilti public dataset.

Based on the above comparisons and data, each algorithm shows its own strengths. Note that neither algorithm has a loop closure module to correct accumulated error. While FAST-LIO2 works on LiDAR and IMU data, A-LOAM works purely on LiDAR data. Selecting a clear winner is therefore not possible, nor was it the point of this comparison.

The final takeaway is that feature-based algorithms are good for navigation in structured environments with plenty of features to latch onto, while point-based algorithms are suitable for precise mapping. Speed of operation is also a parameter to consider, and it is promising that point-based algorithms are approaching the speed of feature-based ones.
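One common way to put a number on trajectory comparisons like this is the absolute trajectory error (ATE), expressed as a root-mean-square error against ground truth. The sketch below shows the calculation only; it is not necessarily the metric behind the results above, and it assumes the estimated and ground-truth positions are already time-synchronized and expressed in the same frame. The trajectories are made-up values.

```python
import numpy as np


def ate_rmse(estimated: np.ndarray, ground_truth: np.ndarray) -> float:
    """RMSE of position error between matching poses of two trajectories."""
    errors = np.linalg.norm(estimated - ground_truth, axis=1)
    return float(np.sqrt(np.mean(errors ** 2)))


# Hypothetical ground truth (metres) and an estimate with a constant offset.
ground_truth = np.array([
    [0.0, 0.0, 0.0],
    [1.0, 0.0, 0.0],
    [2.0, 0.0, 0.0],
    [3.0, 0.0, 0.0],
])
estimated = ground_truth + np.array([0.3, 0.4, 0.0])

rmse = ate_rmse(estimated, ground_truth)
print(rmse)
```

Without loop closure, drift accumulates along the trajectory, so this error typically grows with the distance travelled.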

4. The Future of Autonomous Navigation (LiDAR SLAM)

Technology is advancing rapidly, and more and more algorithms are emerging that make use of it. From sensor fusion to hardware-level acceleration, SLAM algorithms are benefiting as well.

SLAM systems have evolved from using a single LiDAR to using multiple sensors, of the same or different kinds, while running much faster, at near-real-time speeds. The use of cameras, inertial measurement units (IMUs), and other sensors such as radar in SLAM has been gaining popularity, and solutions requiring high accuracy can rely on sensor fusion techniques that make use of them.

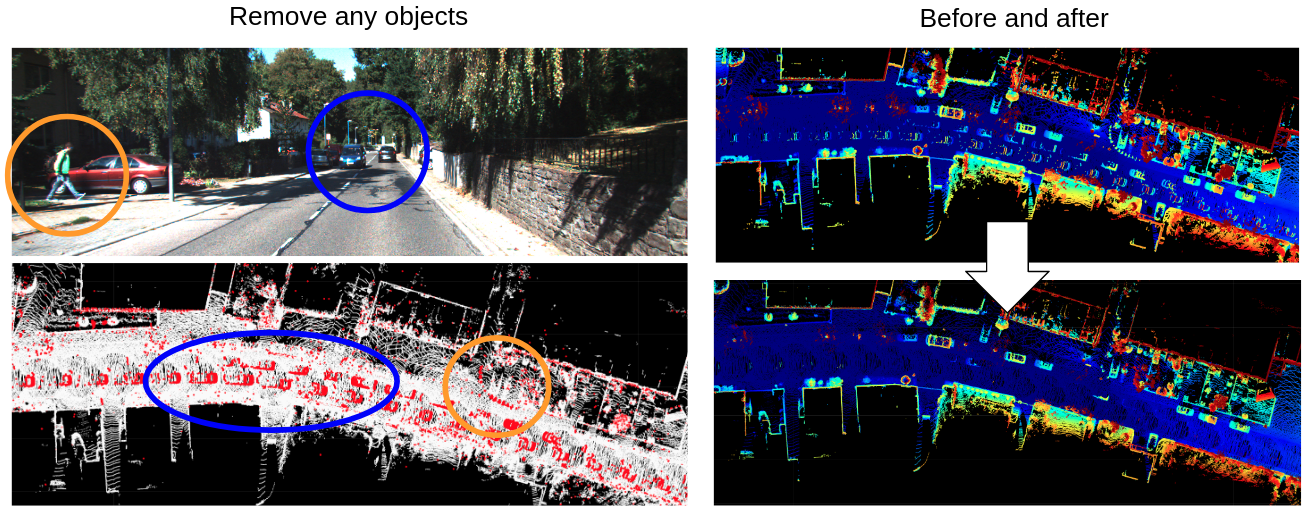

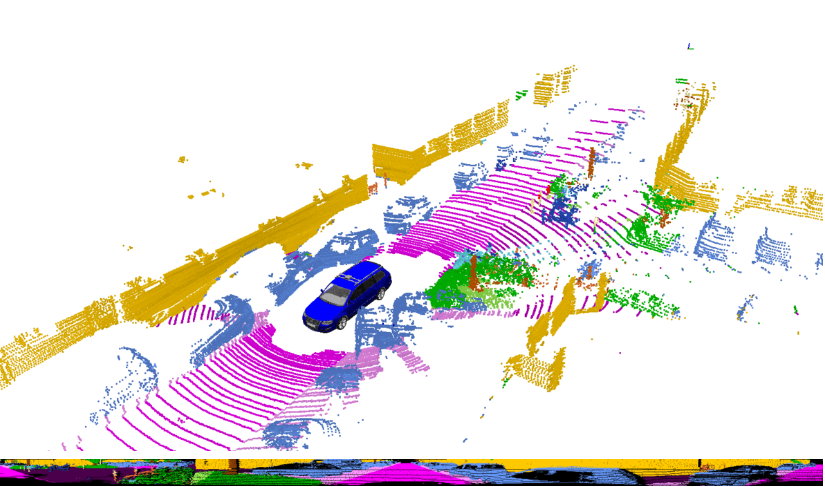

Advances in deep learning are also enhancing the way maps are built using 3D LiDAR. From classifying objects in maps and point clouds to removing dynamic obstacles and finding loop closures, deep learning is finding its place in the SLAM domain.

The world of 3D LiDAR SLAM is getting more exciting as new sensors, applications, and possibilities emerge every day. 3D perception is the way forward for most outdoor robotics applications, and with the growing complexity of indoor scenarios, 3D LiDARs would replace 2D sensors to add more efficiency and quality to robots’ perception capabilities. The hardware acceleration capabilities of new-age compute platforms would also help the algorithms perform better and faster.

5. Conclusion

It’s interesting to see how 3D SLAM algorithms are playing a crucial role in providing safer navigation solutions for autonomous robots. They are also proving useful in a wide range of other applications. While there are several ways to achieve 3D SLAM, the ones involving 3D LiDARs are found to be much more efficient than the rest. Of course, there are limitations and drawbacks to using this technology, but there are workarounds that can be employed to overcome them. With the technology currently available, there are many possibilities to explore, but it’s important to keep in mind that the target application is the ultimate parameter when designing a localization/mapping solution using SLAM. It’s exciting to see how advancements in 3D SLAM will continue to shape and enhance the field of robotics and other industries that use this technology.

Ignitarium is a leading player in the field of 3D LiDAR technology, and we have spent the past four years perfecting our skills in 3D mapping, localization, and perception. Our expertise in using various types of 3D LiDARs sets us apart from the competition, and we have successfully and seamlessly integrated different sensors such as LiDAR, camera, IMU, radar, and more to create a comprehensive picture of the environment. We are also experts in navigating and mapping even the most complex scenarios, including GPS-denied environments.

One of our unique strengths is our deep learning-enabled perception capabilities, which enable us to adapt to various challenging requirements in autonomous navigation for both indoor and outdoor applications. By combining 3D LiDAR and other sensors, we can deliver exceptional results for our clients.