- Benoy Antony

- October 4, 2022

Real-Time 60 FPS Ultra HD Video Recording Solution Using the Xilinx Zynq UltraScale+ MPSoC

Advances in technology have made 4K Ultra High Definition (Ultra HD) imaging the mainstream standard for today’s multimedia products. It is now used in live sports streaming, high-resolution video conferencing and even in the medical field. The high resolution provides a more immersive viewing experience and reduces visible pixelation artefacts when viewed on a bigger screen. However, 4K Ultra HD systems must overcome several technical challenges, such as high memory utilization, high data throughput and computational complexity. For example, a 4K frame of 3840 x 2160 pixels in NV12 format (8-bit YUV 4:2:0, 1.5 bytes per pixel) at 60 Hz requires a raw data rate of approximately 746 MB/s (about 712 MiB/s), so a high-performance system is needed to process 4K frames in real time. Another bottleneck is power consumption, particularly for embedded devices. Offering high performance at low power, FPGAs have shown strong potential to tackle these challenges. This blog explains a real-time Ultra HD video recording solution at 60 FPS built on the Zynq UltraScale+ MPSoC.
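The data-rate figure above follows from simple arithmetic. The sketch below assumes 8-bit NV12 (a full-resolution Y plane plus an interleaved CbCr plane at half width and half height) and no blanking or padding, so it gives the raw active-video payload only.

```python
# Raw bandwidth of an uncompressed 4K NV12 stream (8-bit YUV 4:2:0).
# Assumes even width/height and no line padding or blanking.

def nv12_frame_bytes(width: int, height: int) -> int:
    """Size in bytes of one 8-bit NV12 frame."""
    luma = width * height                       # one byte per Y sample
    chroma = (width // 2) * (height // 2) * 2   # Cb and Cr, one byte each
    return luma + chroma


def stream_bytes_per_second(width: int, height: int, fps: int) -> int:
    """Raw data rate of an NV12 stream at the given frame rate."""
    return nv12_frame_bytes(width, height) * fps


frame = nv12_frame_bytes(3840, 2160)
rate = stream_bytes_per_second(3840, 2160, 60)
print(f"frame: {frame / 1e6:.1f} MB")                               # frame: 12.4 MB
print(f"rate:  {rate / 1e6:.0f} MB/s = {rate / 2**20:.0f} MiB/s")   # rate:  746 MB/s = 712 MiB/s
```

The 1.5 bytes per pixel comes from the chroma plane being a quarter of the luma resolution but carrying two components (Cb and Cr).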

Design Details

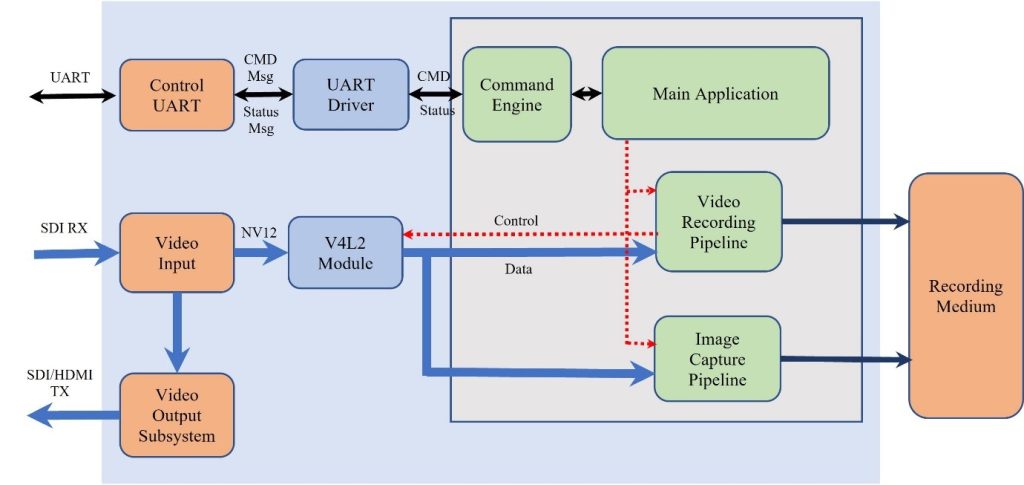

The Ultra HD video processing is split between the Programmable Logic (PL) and the Processing System (PS) of the Zynq UltraScale+ MPSoC. The computationally intensive modules are offloaded to the PL to achieve real-time processing. In the use case below, a real-time video display is incorporated alongside the video recording to provide lossless (uncompressed) visual feedback of the video being recorded.

The use case has two video paths inside the PL: one for video recording and one for video display, called the recording path and the pass-through path respectively. The SDI RX subsystem is common to both paths, but the pass-through path works with YUV 4:2:2 to preserve more colour information, whereas the recording path works with YUV 4:2:0 to reduce the data throughput.
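To make the trade-off between the two paths concrete, the sketch below counts samples and bytes per 3840 x 2160 frame for each subsampling scheme. It assumes 8-bit samples (one byte each) and planar chroma; the actual pixel formats and bit depths used inside the PL are not stated in the design description.

```python
# Per-frame sample counts for 4:2:2 (pass-through) vs 4:2:0 (recording).
# Assumes 8-bit samples; divisors give the chroma plane size relative to luma.

SUBSAMPLING = {
    # scheme: (horizontal chroma divisor, vertical chroma divisor)
    "4:2:2": (2, 1),  # chroma halved horizontally only
    "4:2:0": (2, 2),  # chroma halved horizontally and vertically
}


def frame_layout(width: int, height: int, scheme: str) -> dict:
    """Return luma samples, samples per chroma plane and total bytes."""
    h_div, v_div = SUBSAMPLING[scheme]
    luma = width * height
    chroma_per_plane = (width // h_div) * (height // v_div)  # Cb or Cr
    total = luma + 2 * chroma_per_plane
    return {"luma": luma, "chroma_per_plane": chroma_per_plane, "bytes": total}


for scheme in ("4:2:2", "4:2:0"):
    info = frame_layout(3840, 2160, scheme)
    print(f"{scheme}: {info['bytes']:,} bytes/frame, "
          f"{info['bytes'] * 60 / 1e6:.0f} MB/s at 60 FPS")
```

Under these assumptions 4:2:2 keeps twice as many chroma samples (about 16.6 MB per frame, roughly 995 MB/s at 60 FPS), while 4:2:0 needs about 12.4 MB per frame (roughly 746 MB/s), a 25% reduction in throughput for the recording path.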

Passthrough (Display)

The passthrough (display) path is implemented entirely in the PL. The video stream flows through the SDI RX subsystem, the SDI to video bridge and the AXI4 video bridge, and is written into the PL DDR by a DMA block. The SDI TX or HDMI TX subsystem is fed the same data by DMA with a sub-frame delay. A custom finite state machine keeps the RX and TX subsystems in sync: it continuously monitors the relative speed of the two sides and adjusts the speed of the offending party. These submodules are programmed and configured by software running on the PS of the Zynq UltraScale+ MPSoC. This software runs as part of the boot process, so the display comes up as soon as boot completes.
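The synchronisation idea can be illustrated with a toy software model; the real block is custom PL logic whose details are not described here. This sketch assumes the monitor sees running counts of lines written by the RX DMA and read by the TX DMA, and that TX should trail RX by between `min_gap` and `max_gap` lines. It also assumes only the TX side is adjusted; the thresholds, the hysteresis target and the action names are all hypothetical.

```python
from enum import Enum


class SyncState(Enum):
    IN_SYNC = "in_sync"
    TX_TOO_FAST = "tx_too_fast"   # reader closing in on the writer
    TX_TOO_SLOW = "tx_too_slow"   # reader falling behind the writer


# Hypothetical actions the monitor would request from the TX side.
ACTIONS = {
    SyncState.IN_SYNC: "hold",
    SyncState.TX_TOO_FAST: "slow_down_tx",
    SyncState.TX_TOO_SLOW: "speed_up_tx",
}


class SyncMonitor:
    """Toy RX/TX sync FSM with hysteresis around a sub-frame delay."""

    def __init__(self, min_gap: int, max_gap: int):
        if not 0 < min_gap < max_gap:
            raise ValueError("need 0 < min_gap < max_gap")
        self.min_gap = min_gap
        self.max_gap = max_gap
        self.target = (min_gap + max_gap) // 2  # correct until back at midpoint
        self.state = SyncState.IN_SYNC

    def update(self, rx_lines: int, tx_lines: int) -> str:
        gap = rx_lines - tx_lines  # how many lines TX trails RX
        if self.state is SyncState.IN_SYNC:
            if gap < self.min_gap:
                self.state = SyncState.TX_TOO_FAST
            elif gap > self.max_gap:
                self.state = SyncState.TX_TOO_SLOW
        elif self.state is SyncState.TX_TOO_FAST and gap >= self.target:
            self.state = SyncState.IN_SYNC
        elif self.state is SyncState.TX_TOO_SLOW and gap <= self.target:
            self.state = SyncState.IN_SYNC
        return ACTIONS[self.state]
```

With `SyncMonitor(100, 500)`, a gap of 50 lines triggers `"slow_down_tx"`, and the monitor keeps requesting that until the gap recovers to the 300-line midpoint; the hysteresis stops the controller from toggling on every update when the gap hovers near a threshold.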

Recording

The two main bottlenecks in high-resolution, high-frame-rate video recording are the computation needed for H.264/H.265 compression and frame buffer management. This design offloads the computationally intensive video compression to the Video Codec Unit (VCU) integrated in the programmable logic of the Zynq UltraScale+. The recording application, running on the PS, is responsible for configuring the VCU, servicing interrupts and coordinating the data flow. The SDI video stream coming in via the SDI RX subsystem is moved to the PS DDR by a DMA block dedicated to this purpose. This DMA is separate from the one used in the passthrough path, which decouples the live display from the recording and avoids any break or glitch in the display if the recording path ever becomes slower than the input video rate.

Software modules complete the recording path: they coordinate the transfer of image frames from PS DDR to the VCU, receive the encoded bitstream from the VCU and save it to a recording medium or file. To manage the input image frames, a very thin V4L2 driver suffices, built on the Linux videobuf2 framework, which does most of the heavy lifting for data buffer management. The custom V4L2 driver only needs to configure and start the DMA on STREAMON and stop it on STREAMOFF. The driver also exposes the standard Linux V4L2 interface, through which any user-space application can manage the video frame buffers.
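The division of labour between videobuf2 and the thin driver can be modelled in a few lines. This is a Python simulation, not kernel code: the method names echo the `struct vb2_ops` callbacks (`buf_queue`, `start_streaming`, `stop_streaming`), and the `"DONE"`/`"ERROR"` tags stand in for `vb2_buffer_done()` with `VB2_BUF_STATE_DONE` / `VB2_BUF_STATE_ERROR`. The `dma_frame_complete` hook representing the DMA completion interrupt is hypothetical.

```python
from collections import deque


class ThinCaptureDriver:
    """Toy model of a thin videobuf2-based capture driver.

    The framework owns the buffer queue; the driver starts DMA on STREAMON,
    hands each filled buffer back, and stops DMA on STREAMOFF.
    """

    def __init__(self):
        self.pending = deque()   # empty buffers queued by user space (QBUF)
        self.done = deque()      # (state, buffer) pairs ready for DQBUF
        self.streaming = False

    def buf_queue(self, buf: dict) -> None:
        """vb2 hands the driver an empty buffer to fill."""
        self.pending.append(buf)

    def start_streaming(self) -> None:
        """STREAMON: a real driver would program and start the DMA here."""
        self.streaming = True

    def dma_frame_complete(self, frame_index: int) -> bool:
        """Hypothetical DMA-complete interrupt: return the filled buffer."""
        if not self.streaming or not self.pending:
            return False  # no buffer available, so this frame is dropped
        buf = self.pending.popleft()
        buf["frame"] = frame_index
        self.done.append(("DONE", buf))
        return True

    def stop_streaming(self) -> None:
        """STREAMOFF: stop DMA and give every unfilled buffer back as ERROR."""
        self.streaming = False
        while self.pending:
            self.done.append(("ERROR", self.pending.popleft()))
```

Queuing three buffers, completing two frames and then stopping leaves two `DONE` buffers and one `ERROR` buffer; vb2 requires a driver to return all of its outstanding buffers in `stop_streaming`, which is why the unfilled one is not silently lost.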

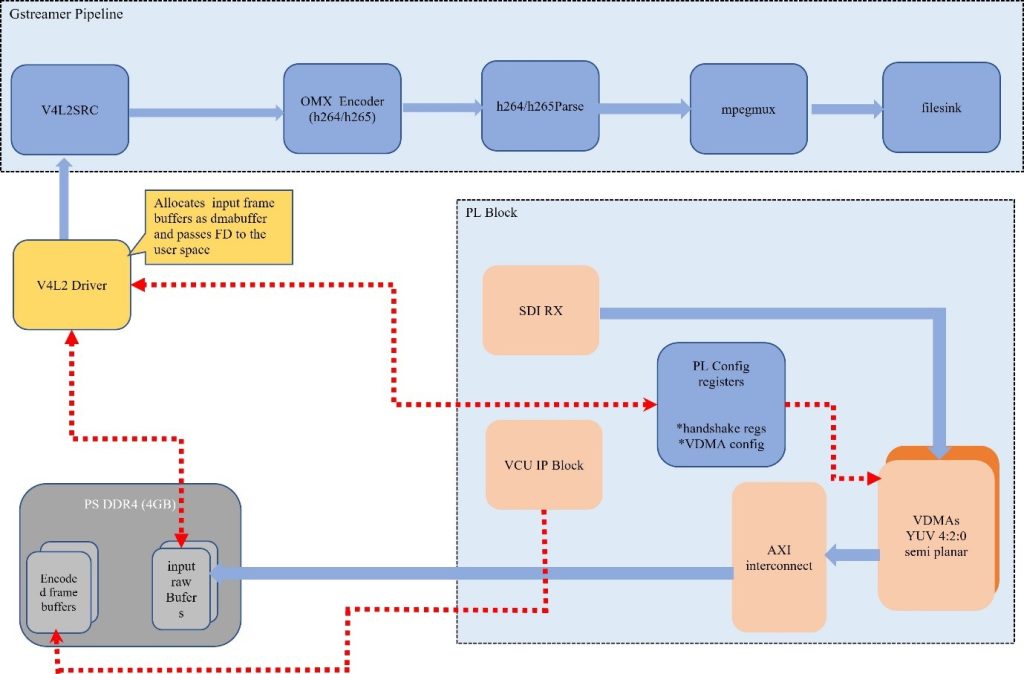

Xilinx provides the VCU control software, the OMX codec and the GStreamer plugins for the VCU. The application can choose which layer to build the recording pipeline on. For this design, the application is built on the GStreamer library, because GStreamer provides ready-made buffer and control flow: the application developer only needs to link the different blocks (plugins) and start the pipeline, and the GStreamer library manages the actual data and control flow. The design uses the standard v4l2src, h264parse/h265parse and mp4mux plugins together with the OMX encoder plugins provided by Xilinx as part of their BSP. The figure below explains the GStreamer recording pipeline.
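A minimal sketch of such a recording pipeline, assuming PyGObject with GStreamer 1.x and the Xilinx `omxh264enc`/`omxh265enc` elements are available on the target. The device path, output file name and caps are assumptions, and a given BSP version may need extra encoder properties (bitrate, rate control) not shown here. `io-mode=dmabuf` selects the DMA_BUF buffer interface on v4l2src.

```python
import time

# codec -> (Xilinx OMX encoder element, matching parser element)
ENCODERS = {"h264": ("omxh264enc", "h264parse"), "h265": ("omxh265enc", "h265parse")}


def recording_pipeline(device="/dev/video0", location="record.mp4", codec="h264",
                       width=3840, height=2160, fps=60) -> str:
    """Build a gst-launch style description of the recording pipeline."""
    encoder, parser = ENCODERS[codec]
    return (
        f"v4l2src device={device} io-mode=dmabuf ! "
        f"video/x-raw,format=NV12,width={width},height={height},framerate={fps}/1 ! "
        f"{encoder} ! {parser} ! mp4mux ! filesink location={location}"
    )


def record(seconds: float, **kwargs) -> bool:
    """Record for `seconds`, then send EOS so mp4mux can finalise the file."""
    import gi
    gi.require_version("Gst", "1.0")
    from gi.repository import Gst

    Gst.init(None)
    pipeline = Gst.parse_launch(recording_pipeline(**kwargs))
    pipeline.set_state(Gst.State.PLAYING)
    time.sleep(seconds)
    pipeline.send_event(Gst.Event.new_eos())  # flush encoder and write MP4 index
    bus = pipeline.get_bus()
    msg = bus.timed_pop_filtered(Gst.CLOCK_TIME_NONE,
                                 Gst.MessageType.EOS | Gst.MessageType.ERROR)
    pipeline.set_state(Gst.State.NULL)
    return msg is not None and msg.type == Gst.MessageType.EOS
```

Sending EOS before tearing the pipeline down matters for MP4: mp4mux writes the file index when it receives EOS, so a recording stopped without it is typically unplayable.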

The other factor that degrades high-frame-rate video recording is frame buffer management. In most video recording pipelines, buffers are copied at different processing modules, where the input buffer is copied into a local buffer for processing. Ordinarily this is not a serious issue, but for high-resolution, high-frame-rate recording a single buffer is on the order of 10 MB or more. For example, a raw 8-bit YUV 4:2:0 4K frame takes about 12.4 MB, so at 60 FPS about 746 MB of frame data must be moved every second. If buffers are copied at each stage of the pipeline, this wastes a tremendous amount of CPU time and memory bandwidth. Fortunately, Linux already provides a mechanism to avoid such copies, called “dma_buf”, and the Xilinx encoder plugin also supports the “dma_buf” interface. This design uses both facilities to implement truly zero-copy buffer management in the video recording pipeline. videobuf2 lets the driver developer choose among different memory operations, and uses standard Linux DMA buffer allocation schemes to allocate the video frame buffers in the video queue. The v4l2src plugin has an option (io-mode) to select the buffer interface, such as MMAP or DMA_BUF. The design sets the buffer interface of v4l2src to DMA_BUF, which means that instead of a pointer to the buffer, a file descriptor referring to the underlying physical memory is passed around in the user application. When this “dma_buf” handle reaches the VCU encoder plugin, the plugin resolves the file descriptor to the actual physical memory address and processes the frame. This makes the video recording pipeline truly zero-copy: the CPU never needs to access the contents of a video frame during its entire lifetime.
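A rough estimate shows why per-stage copies hurt at this frame size. The sketch assumes each CPU copy reads the whole frame once and writes it once, and ignores cache effects, so it is a lower bound on the extra DRAM traffic rather than a measured figure.

```python
def copy_traffic_bytes_per_s(frame_bytes: int, fps: int, copy_stages: int) -> int:
    """Extra memory traffic caused by CPU buffer copies.

    Each copy stage reads the frame once and writes it once, adding
    2 * frame_bytes of DRAM traffic per frame.
    """
    return 2 * frame_bytes * fps * copy_stages


FRAME = 3840 * 2160 * 3 // 2   # one 8-bit NV12 (YUV 4:2:0) 4K frame, in bytes

for stages in (0, 1, 3):
    gbps = copy_traffic_bytes_per_s(FRAME, 60, stages) / 1e9
    print(f"{stages} copy stage(s): {gbps:.2f} GB/s of extra memory traffic")
```

A single copy stage already adds about 1.49 GB/s of memory traffic, and three stages about 4.48 GB/s, all of which competes with the DMA engines and the VCU for DDR bandwidth. Passing dma_buf file descriptors keeps that figure at zero.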

Efficient, targeted offloading of the computationally intensive and timing-critical functions to the programmable logic of the Zynq UltraScale+ MPSoC makes it possible to achieve live display and real-time recording of 4K Ultra HD video at 60 FPS without degrading video quality or dropping frames. The Processing System acts as a control block that oversees the critical modules in the programmable logic. Live streaming can be added easily with an RTSP streaming pipeline: the only change required to the current application is a “tee” element after the h264parse/h265parse element in the recording pipeline, splitting it into a recording path and a streaming path. Overall, the Zynq UltraScale+ MPSoC is a terrific platform for high-quality video streaming, recording and capture applications.
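The tee split can be sketched as a pipeline description. For brevity this sketch substitutes a plain RTP/UDP branch (`rtph264pay` into `udpsink`) for a full RTSP server, which would normally be built with gst-rtsp-server; the receiver host and port are hypothetical placeholders. Each tee branch starts with a `queue` so that a slow consumer on one branch does not stall the other.

```python
def record_and_stream_pipeline(device="/dev/video0", location="record.mp4",
                               host="192.168.1.10", port=5000) -> str:
    """Recording pipeline with a tee after h264parse feeding two branches.

    host/port are hypothetical; an RTSP deployment would serve the stream
    through gst-rtsp-server instead of pushing RTP to a fixed UDP address.
    """
    return (
        f"v4l2src device={device} io-mode=dmabuf ! "
        "video/x-raw,format=NV12,width=3840,height=2160,framerate=60/1 ! "
        "omxh264enc ! h264parse ! tee name=t "
        # Branch 1: recording to an MP4 file.
        f"t. ! queue ! mp4mux ! filesink location={location} "
        # Branch 2: RTP packetisation for live streaming over UDP.
        f"t. ! queue ! rtph264pay config-interval=1 ! udpsink host={host} port={port}"
    )
```

The string can be passed to `Gst.parse_launch` in the same way as the recording-only pipeline; the encoder runs once and both branches share its output.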

Ignitarium has been working with customers to realize next-gen video-based solutions in domains such as the automotive, consumer and medical sectors. Over the years, we have built expertise in the GStreamer, Codec2 and V4L2 frameworks, which are the backbone of video solutions. These solutions can easily be extended to post-process the video data using AI techniques and extract meaningful information.