- Pavan Kumar

- March 13, 2023

Solar Panel Defect Detection Using AI Techniques

1. Introduction

Solar energy is a clean source of energy. Solar panels emit no greenhouse gases into the atmosphere while they generate electricity, and because the sun is, for practical purposes, an unlimited source of energy, solar-powered electricity can be considered nearly inexhaustible. Solar panels are a great way to offset energy costs and reduce the environmental impact of your home, and they provide a host of other benefits, such as supporting local businesses and contributing to energy independence.

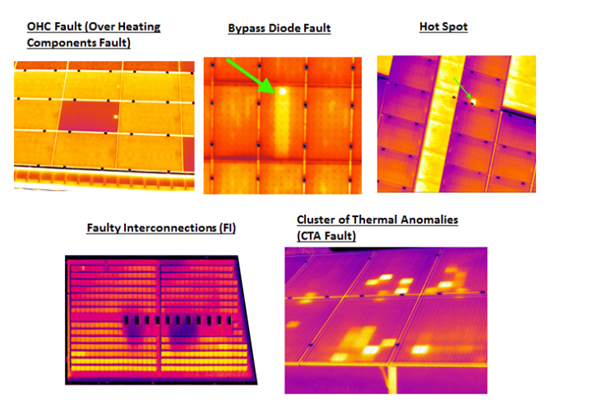

For all the benefits that solar panels provide, they are not without their own problem areas. Solar modules are susceptible to various kinds of defect mechanisms: some are observable during manufacturing, while others develop over time as the panels are deployed in harsh environments. In addition to defects such as micro-cracks and cross-cracks, solar panels are prone to material deterioration, diode failures and hotspot formation. Fig 1 shows a few example anomaly types. These defects result in either reduced conversion efficiency or outright failure of a panel, in which case it no longer converts sunlight into electricity.

2. Problem Statement

To guarantee efficient electricity generation, solar farm operators have to inspect individual panels at regular intervals. This is a time-consuming and labour-intensive process, since solar farms are often spread across tens of square miles of territory. Historically, these inspections were conducted entirely by hand, with human inspectors climbing on top of each panel via rope-and-crane methods. This gave way to drone-based image capture using camera systems; however, the captured footage was still analysed by human inspectors. Because of the vast amount of data that each human operator had to process, the results were prone to error and anomalies were often not detected in time. Recent advances in image processing and compute capability have made it possible to find and localize defects automatically. Ignitarium’s TYQ-i™ platform performs automated defect detection using sensor data as input (RGB or thermal camera data in the solar inspection use case) and a suite of complex computer-vision (CV) enhanced Deep-Learning (DL) algorithms. In the subsequent sections, we describe the workflow for the AI component of the solar panel anomaly detection software pipeline.

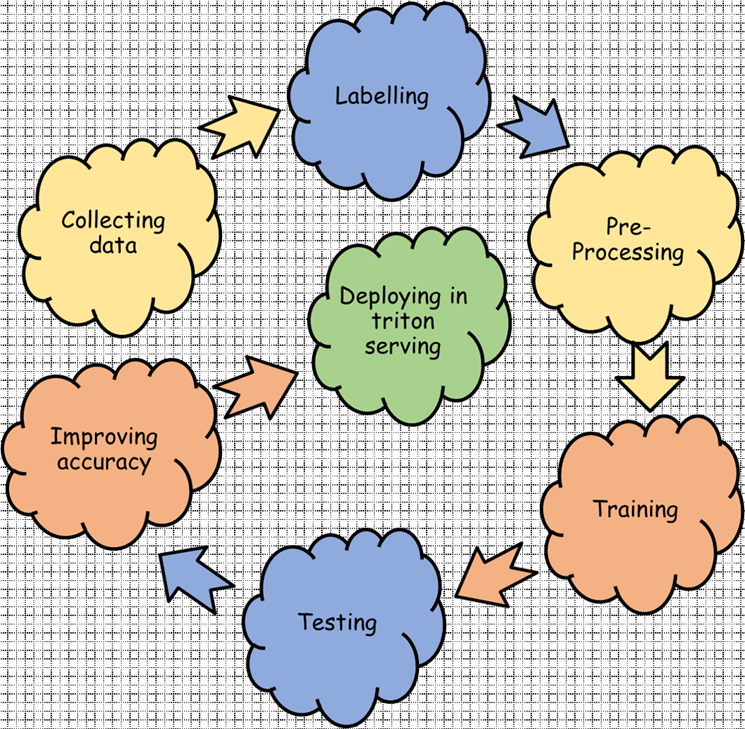

3. Defect detection development flow

3.1 Data Collection

At the proof-of-concept (PoC) stage of the project, a small set of a few hundred images, representative of the type of solar panels under consideration, was acquired using a drone carrying a thermal camera payload. These images were used to experiment with the various pre- and post-processors and AI models. Completing the PoC phase made it possible to fine-tune the data acquisition process, adjusting parameters such as input resolution, drone angle-of-attack, drone flight path and image overlap percentage. After this fine-tuning, the data collection phase proceeded to acquire a much larger data set that could be used for comprehensive training.
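The way these acquisition parameters interact can be illustrated with simple geometry. The sketch below is not taken from the project: it assumes a camera pointing straight down over flat ground, and the altitude, field of view and overlap values are hypothetical. It only shows how the chosen overlap percentage determines the distance between consecutive captures along the flight path.

```python
import math


def ground_footprint(altitude_m: float, fov_deg: float) -> float:
    """Ground length covered along one image axis by a downward-pointing camera."""
    return 2.0 * altitude_m * math.tan(math.radians(fov_deg) / 2.0)


def capture_spacing(footprint_m: float, overlap_pct: float) -> float:
    """Distance between consecutive captures for a given overlap percentage."""
    if not 0.0 <= overlap_pct < 100.0:
        raise ValueError("overlap_pct must be in [0, 100)")
    return footprint_m * (1.0 - overlap_pct / 100.0)


# Hypothetical values, chosen only for illustration.
footprint = ground_footprint(altitude_m=20.0, fov_deg=90.0)
spacing = capture_spacing(footprint, overlap_pct=75.0)
print(f"footprint = {footprint:.1f} m, spacing = {spacing:.1f} m")
```

A higher overlap percentage shrinks the spacing and therefore increases the number of frames per flight, which is one reason such parameters are worth settling before large-scale collection.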

3.2 Data Annotation

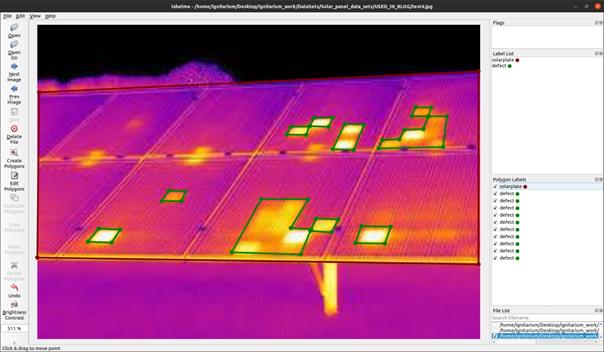

The training set was labelled semantically to cover all defect classes of interest. Fig 3 shows an example labelled frame capturing panel defects via our customized LabelMe annotation tool.

At this stage, labels are created and stored in JSON format. In this sample, two labels are shown in the Label List panel on the right.

- solarplate

- defect

solarplate: The solarplate (labelled in red) is the parent of the defect node.

defect: The defect (labelled in green) is the child of the solarplate.
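As a rough illustration of the parent/child relationship, the sketch below reads an annotation laid out like stock LabelMe output (a `shapes` list whose entries carry a `label` and polygon `points`) and attaches each defect to the solarplate that contains it. The customized tool's JSON may differ; the file name, coordinates and the centroid-containment rule are assumptions made for this example.

```python
import json

# Tiny annotation in the layout of stock LabelMe output. The file name and
# coordinates are hypothetical; the customized tool's JSON may differ.
SAMPLE = """
{
  "imagePath": "frame_0001.png",
  "imageHeight": 100,
  "imageWidth": 100,
  "shapes": [
    {"label": "solarplate", "shape_type": "polygon",
     "points": [[10, 10], [90, 10], [90, 90], [10, 90]]},
    {"label": "defect", "shape_type": "polygon",
     "points": [[40, 40], [50, 40], [50, 50], [40, 50]]}
  ]
}
"""


def centroid(points):
    """Mean of the polygon vertices (adequate for small, compact contours)."""
    xs, ys = zip(*points)
    return sum(xs) / len(xs), sum(ys) / len(ys)


def contains(polygon, point):
    """Ray-casting point-in-polygon test."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def group_defects(annotation):
    """Attach each defect to the solarplate whose polygon contains its centroid."""
    shapes = annotation["shapes"]
    plates = [s for s in shapes if s["label"] == "solarplate"]
    defects = [s for s in shapes if s["label"] == "defect"]
    grouped = [{"plate": p["points"], "defects": []} for p in plates]
    for d in defects:
        c = centroid(d["points"])
        for g in grouped:
            if contains(g["plate"], c):
                g["defects"].append(d["points"])
                break
    return grouped


groups = group_defects(json.loads(SAMPLE))
print(len(groups), "panel(s);", len(groups[0]["defects"]), "defect(s) on the first")
```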

Annotation is done using tight contours. Before moving to the training stage, however, the data must be pre-processed, which is an important step in the development of Deep Learning models.

3.3 Pre-Processing

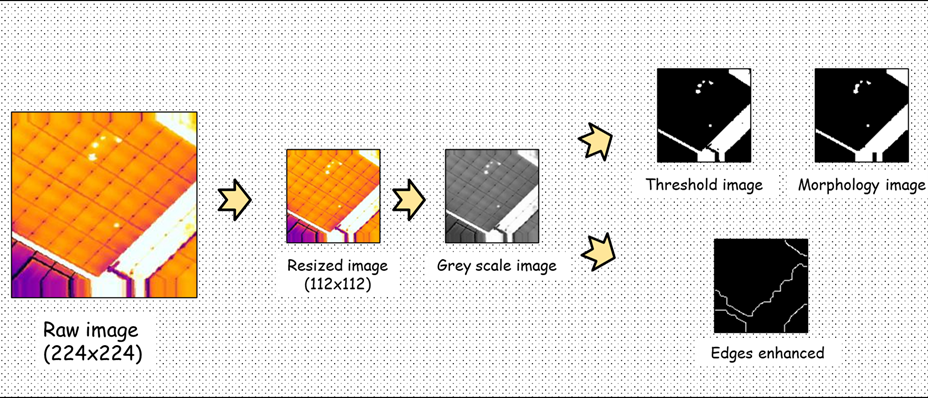

Image pre-processing transforms raw image data into clean image data, since most raw images contain noise or missing or inconsistent information. Missing information means that certain attributes of interest are absent; inconsistent information means that there are discrepancies within the image. The purpose of pre-processing is to enhance the image so as to reduce the chance of false feature identification and to improve the image characteristics that are vital for downstream processing.
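The article does not list the specific pre-processing operations used. As a minimal sketch of the general idea, the example below applies two common steps to a toy grayscale frame: a 3x3 median filter to suppress isolated noise, followed by a linear contrast stretch. Both steps and all pixel values are assumptions chosen for illustration, not the project's actual pre-processor.

```python
from statistics import median


def median_filter_3x3(img):
    """Replace each pixel by the median of its 3x3 neighbourhood (edges clamped)."""
    h, w = len(img), len(img[0])
    out = [[0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            window = [
                img[min(max(r + dr, 0), h - 1)][min(max(c + dc, 0), w - 1)]
                for dr in (-1, 0, 1)
                for dc in (-1, 0, 1)
            ]
            out[r][c] = median(window)
    return out


def stretch_contrast(img, lo=0.0, hi=1.0):
    """Linearly rescale pixel values to the range [lo, hi]."""
    flat = [v for row in img for v in row]
    vmin, vmax = min(flat), max(flat)
    if vmax == vmin:
        return [[lo for _ in row] for row in img]
    scale = (hi - lo) / (vmax - vmin)
    return [[lo + (v - vmin) * scale for v in row] for row in img]


# Toy 4x4 frame with one isolated noise pixel (255).
frame = [
    [10, 10, 12, 12],
    [10, 255, 12, 12],
    [20, 20, 30, 30],
    [20, 20, 30, 30],
]
denoised = median_filter_3x3(frame)
clean = stretch_contrast(denoised)
```

Removing the outlier before rescaling matters here: if the stretch were applied first, the single noise pixel would set the maximum and compress the rest of the image into a narrow band.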

3.4 Model architecture

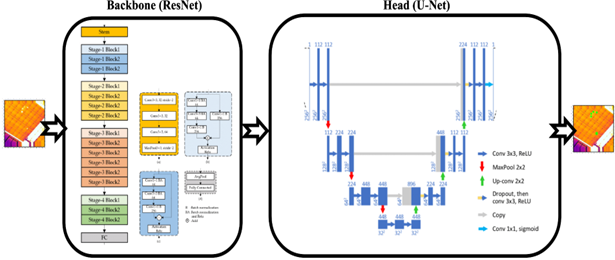

A variant of ResNet-UNet is used as the model architecture. In this architecture, ResNet serves as the backbone of the model and UNet as the head. The input image is passed first to the ResNet backbone, which extracts features; these features are then passed to the UNet head, which performs the segmentation task.
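To make the backbone-to-head data flow concrete, the sketch below traces feature-map shapes through a generic ResNet-UNet. It does not build or train a network. The article does not state which ResNet depth, input size or number of classes was used, so the ResNet-34-style channel counts, the 256x256 input, the two output classes and the rule that each decoder step reduces to the skip connection's channel count are all assumptions.

```python
# Hypothetical configuration: a ResNet-34-style encoder. The article does
# not say which ResNet depth or input size was used.
ENCODER_STAGES = [
    # (name, output channels, cumulative downsampling factor)
    ("stem", 64, 2),
    ("layer1", 64, 4),
    ("layer2", 128, 8),
    ("layer3", 256, 16),
    ("layer4", 512, 32),
]


def encoder_shapes(height, width):
    """Feature-map shapes (name, channels, H, W) produced by each encoder stage."""
    return [(name, ch, height // s, width // s) for name, ch, s in ENCODER_STAGES]


def decoder_shapes(features, num_classes):
    """Walk back up: upsample 2x, concatenate the matching skip, then convolve."""
    _, ch, h, w = features[-1]  # deepest feature map
    trace = []
    for name, skip_ch, skip_h, skip_w in reversed(features[:-1]):
        h, w = h * 2, w * 2  # 2x upsampling
        if (h, w) != (skip_h, skip_w):
            raise ValueError("input size must be divisible by 32")
        concat_ch = ch + skip_ch  # channel-wise concatenation with the skip
        ch = skip_ch  # assumed: convolution reduces to the skip's channel count
        trace.append((f"up_{name}", concat_ch, ch, h, w))
    # Final 2x upsampling to input resolution, then per-pixel class scores.
    trace.append(("logits", ch, num_classes, h * 2, w * 2))
    return trace


features = encoder_shapes(256, 256)
trace = decoder_shapes(features, num_classes=2)
for row in trace:
    print(row)  # (step, channels in, channels out, height, width)
```

The trace shows why the architecture suits segmentation: the backbone shrinks the image to a coarse, feature-rich map, and the head restores full resolution while reusing the finer encoder features through skip connections.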

3.5 Training

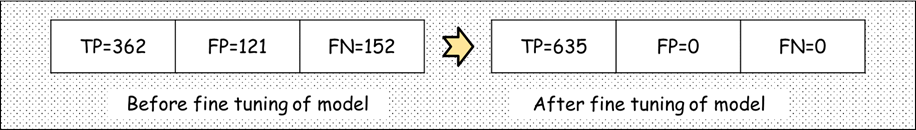

Using the selected model architecture, we trained the model on the dataset. Initially, before fine-tuning, the model missed many true defects and also produced many false detections.

| Stage | Figure |
| --- | --- |
| Before fine-tuning the model | Fig 6a: Model detections on dataset before fine-tuning the model |
| After fine-tuning the model | Fig 6b: Model detections on dataset after fine-tuning the model |

3.6 Testing

After training, the model was evaluated by computing the confusion matrix on multiple previously unseen datasets.
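For readers unfamiliar with the metric, the sketch below builds a confusion matrix and derives precision and recall for one class. The two-class label set and the eight toy labels are invented purely to show the calculation; they are not results from the project.

```python
def confusion_matrix(y_true, y_pred, labels):
    """Rows are ground-truth classes, columns are predicted classes."""
    index = {label: i for i, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for t, p in zip(y_true, y_pred):
        matrix[index[t]][index[p]] += 1
    return matrix


def precision_recall(matrix, class_idx):
    """Precision and recall for one class, read off the confusion matrix."""
    tp = matrix[class_idx][class_idx]
    predicted = sum(row[class_idx] for row in matrix)  # column sum
    actual = sum(matrix[class_idx])                    # row sum
    precision = tp / predicted if predicted else 0.0
    recall = tp / actual if actual else 0.0
    return precision, recall


# Toy data for illustration only; not measurements from the article.
labels = ["background", "defect"]
y_true = ["defect", "defect", "defect", "background",
          "background", "background", "background", "defect"]
y_pred = ["defect", "defect", "background", "background",
          "defect", "background", "background", "defect"]

cm = confusion_matrix(y_true, y_pred, labels)
precision, recall = precision_recall(cm, labels.index("defect"))
print(cm, precision, recall)
```

Off-diagonal entries separate the two failure modes discussed in the training section: the "defect" row counts missed defects, while the "defect" column counts false detections.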

3.7 Improving accuracy

As mentioned earlier, the model's results on the test dataset before the fine-tuning step were not satisfactory. Many approaches were tried to improve the detection accuracy. Among them, two that provided good results were negative image addition and active augmentation. While these methods increased the dataset size significantly, the accuracy improvements were commensurately significant as well.
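The article does not detail how these two techniques were implemented. One plausible reading is sketched below: negative image addition is taken to mean adding defect-free images paired with empty masks, and augmentation is illustrated with simple flips applied identically to an image and its mask. Both interpretations, and the toy 2x2 data, are assumptions.

```python
def hflip(grid):
    """Mirror a 2-D grid left to right."""
    return [row[::-1] for row in grid]


def vflip(grid):
    """Mirror a 2-D grid top to bottom."""
    return grid[::-1]


def augment(sample):
    """Return the original plus flipped copies; image and mask get the same transform."""
    image, mask = sample
    return [
        (image, mask),
        (hflip(image), hflip(mask)),
        (vflip(image), vflip(mask)),
    ]


def make_negative(image):
    """Pair a defect-free image with an all-zero mask (no defect pixels)."""
    return image, [[0] * len(row) for row in image]


# Toy 2x2 samples for illustration only.
positive = ([[1, 2], [3, 4]], [[0, 1], [0, 0]])  # (image, defect mask)
defect_free = [[5, 5], [5, 5]]

dataset = augment(positive) + augment(make_negative(defect_free))
print(len(dataset), "training samples from 2 source images")
```

Applying the same transform to image and mask keeps the labels aligned with the pixels, and the empty-mask samples give the model explicit examples of what should not be flagged.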

3.8 Deploying via Triton serving

Once a model is developed, it is deployed using “serving” methods. Usually, TensorFlow Serving is used for TensorFlow models and TorchServe is used for PyTorch models. We used the Triton Inference Server from NVIDIA, an open-source inference serving framework that helps to standardize model deployment in a fast and scalable manner. We observed that our TensorFlow model ran almost 40 times faster on the Triton Inference Server than the same model on native TensorFlow Serving. Hence, the model was deployed using Triton, with quantisation techniques suited to the specific NVIDIA GPUs used for inference.
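Triton loads each model from a model repository in which a `config.pbtxt` file describes the model's platform, inputs and outputs. The sketch below renders a minimal configuration of that kind for a TensorFlow SavedModel. The model name, tensor names, shapes and batch size are hypothetical placeholders, not the project's actual settings, and the quantisation options mentioned above are not shown.

```python
def tensor_block(name, data_type, dims):
    """Render one input or output tensor entry."""
    dims_text = ", ".join(str(d) for d in dims)
    return (
        "  {\n"
        f'    name: "{name}"\n'
        f"    data_type: {data_type}\n"
        f"    dims: [ {dims_text} ]\n"
        "  }"
    )


def render_config(model_name, max_batch_size, inputs, outputs):
    """Render a minimal Triton config.pbtxt for a TensorFlow SavedModel."""
    lines = [
        f'name: "{model_name}"',
        'platform: "tensorflow_savedmodel"',
        f"max_batch_size: {max_batch_size}",
        "input [",
        ",\n".join(tensor_block(*t) for t in inputs),
        "]",
        "output [",
        ",\n".join(tensor_block(*t) for t in outputs),
        "]",
    ]
    return "\n".join(lines) + "\n"


# All names and shapes below are hypothetical placeholders.
config = render_config(
    model_name="solar_defect_seg",
    max_batch_size=8,
    inputs=[("input_1", "TYPE_FP32", (512, 512, 3))],
    outputs=[("output_1", "TYPE_FP32", (512, 512, 2))],
)
print(config)
```

In a typical repository layout, the rendered text would be saved as `config.pbtxt` in the model's directory, alongside a numbered version folder containing the exported SavedModel.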

4. Conclusion

With a judicious mix of controlled data capture methods, precise data labelling, purpose-built pre- and post-processing components, advanced Deep Learning models and a high-performance model serving pipeline, we achieved accurate detection of the various classes of defects that plague solar panels deployed in vast clean-energy farms.
