- Srichitra S

- June 22, 2023

3D LiDAR SLAM – Localization Explained

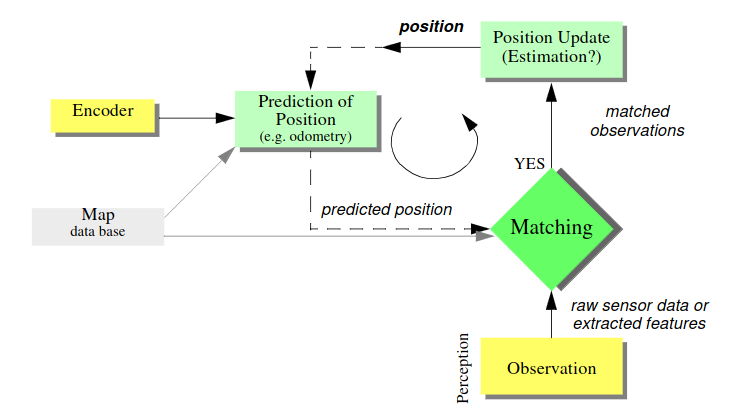

Localization is the process of determining a mobile robot’s location in relation to its surroundings. Let’s imagine that the area has been mapped out and that the robot has sensors to both monitor its surroundings and gauge its own mobility. Using sensor data, the localization problem determines the robot’s orientation and position inside the map.

Localization is a critical aspect of mobile robot navigation, enabling the robot to determine its position and orientation within its environment. While localization techniques have advanced significantly, several challenges still exist that need to be addressed for accurate and robust localization. Here are some key challenges in the localization of a mobile robot:

-

- Sensor Limitations: Sensors can be subject to noise, uncertainty, and limited range. Inaccurate or unreliable sensor measurements can lead to errors in localization. Dealing with sensor noise and selecting appropriate sensors for the environment are crucial challenges.

-

- Ambiguity and Uncertainty: Ambiguity arises when multiple poses in the environment produce similar sensor measurements, making it challenging to determine the correct robot pose.

-

- Non-Linearity and Non-Gaussian: Many real-world scenarios involve non-linearities in the sensor measurements and the robot’s motion. Linear techniques like the Kalman Filter may struggle with non-linearities, requiring more advanced approaches such as the Extended Kalman Filter, Unscented Kalman Filter, or Particle Filters. Handling non-linearities and non-Gaussian distributions is a significant challenge in localization.

-

- Computational Complexity: Localization algorithms often require significant computational resources, especially when dealing with large-scale maps or high-frequency sensor updates. Real-time performance is crucial for mobile robots operating in dynamic environments. Balancing computational complexity and accuracy is an ongoing challenge in localization.

-

- Map Representation: Efficiently representing the environment is essential for accurate localization. Different environments may require different map representations, such as occupancy grids, feature-based maps, or topological maps. Selecting an appropriate map representation and updating it dynamically pose challenges in localization.

-

- Multi-Robot Localization: When multiple robots operate in the same environment, they may influence each other’s localization due to shared sensor data or inter-robot communication. Coordinating and integrating the localization information from multiple robots while maintaining consistency is a complex challenge.

-

- Environmental Changes: Mobile robots often operate in dynamic environments where changes can occur, such as moving objects, varying lighting conditions, or changes in the environment’s structure. Adapting to environmental changes and maintaining accurate localization despite these dynamic factors is a challenging task.

-

- Initial Pose Estimation: Localization algorithms typically require an initial estimate of the robot’s pose. Obtaining an accurate initial pose estimate, especially in unknown or unstructured environments, can be challenging. Inaccurate initialization can lead to localization errors, requiring robust techniques for initial pose estimation.

Addressing these challenges requires a combination of robust sensor fusion techniques, advanced localization algorithms, efficient map representations, and adaptive strategies that can handle uncertainties and dynamic environments.

Various techniques are used to achieve localization, enabling a robot to estimate its position and orientation within an unknown environment while simultaneously building a map. Here are some common types of localization techniques used in SLAM:

-

- Feature-based Localization:

-

- Point Feature-based: This technique involves detecting and tracking distinctive points or features in the environment, such as corners or edges. The robot matches these features with the corresponding ones in the map, allowing it to estimate its pose.

-

- Line Feature-based: Instead of individual points, this technique focuses on detecting and tracking lines in the environment. The robot uses the correspondence between lines in the map and the observed lines to estimate its pose.

-

- Grid-based Localization:

-

- Occupancy Grid Mapping: This technique represents the environment as a grid, where each cell can be classified as occupied, free, or unknown. The robot uses sensor measurements to update the occupancy grid and estimate its position within it.

-

- Grid-based Particle Filter: It combines a grid-based map representation with a particle filter. Particles represent potential robot poses, and their weights are updated based on the sensor measurements and map matching.

-

- Visual-based Localization:

-

- Visual Odometry: This technique estimates the robot’s motion by analyzing consecutive camera images. By tracking visual features and comparing their positions over time, the robot can estimate its trajectory.

-

- Visual SLAM: In addition to estimating motion, visual SLAM simultaneously constructs a map of the environment. It uses visual features, such as key points or landmarks, and their correspondence with the map to perform localization, as explained in our Visual Slam: Possibilities, Challenges and the Future blog.

-

- Range-based Localization:

-

- LiDAR-based Localization: LiDAR sensors provide range measurements which can be used to estimate its position based on the observed distances to objects in the environment. Techniques like Iterative Closest Point (ICP) and Normal Distribution Transform (NDT) can be used to align the measured point cloud with the map (explained in our previous 3D LiDAR SLAM – Scan Matching Explained blog).

-

- Sonar-based Localization: Sonar sensors emitting sound waves can be used to estimate the robot position relative to objects or walls in the environment.

-

- Sensor Fusion Localization:

-

- Kalman Filter and Extended Kalman Filter: These filters combine sensor measurements with a motion model to estimate the robot’s pose. They can handle both linear and nonlinear systems, incorporating sensor noise and motion uncertainty.

-

- Particle Filters: Also known as Monte Carlo Localization (MCL) or Sequential Monte Carlo Methods (SMC), particle filters maintain a set of particles representing possible robot poses. The particles are resampled and updated based on the sensor measurements and motion model.

The most popular among these is the Kalman Filter and the Extended Kalman Filter. The blog further discusses in detail these two techniques.

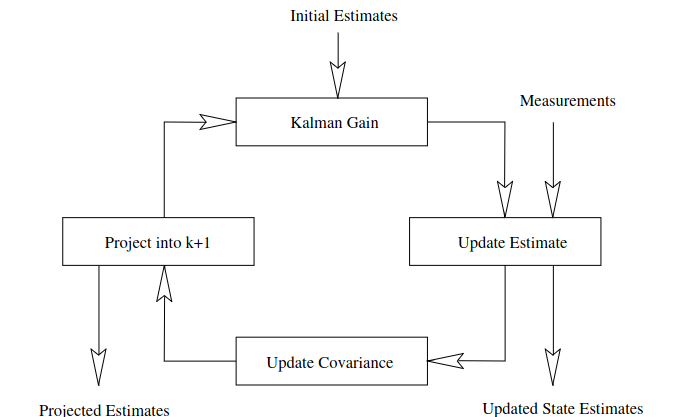

Kalman Filter

The Kalman Filter is a recursive algorithm that estimates the state of a dynamic system based on sensor measurements inclusive of noisy elements. It combines two main steps: the prediction step and the update step. In the context of SLAM, the Kalman Filter predicts the robot’s pose based on its motion model and updates the pose estimate using sensor measurements.

The Kalman Filter relies on two sets of equations: the prediction equations and the update equations. Let’s break them down:

Prediction Equations: In the prediction step, the Kalman Filter predicts the current state based on the previous estimate and the motion model.

State Prediction: x̂ₙ = Fₙx̂ₙ₋₁ + Bₙuₙ

Covariance Prediction: Pₙ = FₙPₙ₋₁Fₙᵀ + Qₙ

Here, x̂ₙ represents the predicted state at time n, Fₙ is the state transition matrix that represents the robot’s motion model, Bₙ is the control input matrix, uₙ is the control input, Pₙ is the predicted covariance matrix representing the uncertainty of the state estimate, and Qₙ is the process noise covariance matrix.

Update Equations: In the update step, the Kalman Filter incorporates sensor measurements to correct the predicted state.

Kalman Gain Calculation: Kₙ = PₙHₙᵀ(HPₙHₙᵀ + Rₙ)⁻¹

State Update: x̂ₙ = x̂ₙ + Kₙ(yₙ – Hₙx̂ₙ)

Covariance Update: Pₙ = (I – KₙHₙ)Pₙ

Here, Kₙ is the Kalman Gain that determines the weight of the measurements in the state update, Hₙ is the measurement matrix that relates the measurements to the state, yₙ is the sensor measurement, Rₙ is the measurement noise covariance matrix, and I is the identity matrix.

In SLAM, the Kalman Filter is used for state estimation and localization by integrating sensor measurements (e.g., from cameras, LiDAR, or GPS) with the robot’s motion model. The motion model predicts the robot’s pose, while the measurement model relates the sensor readings to the pose estimate. By iteratively applying the prediction and update steps, the Kalman Filter refines the pose estimate and reduces uncertainty.

Extended Kalman Filter

The Extended Kalman Filter, an extension of the Kalman Filter linearizes the nonlinear system models. It approximates the nonlinearities using first-order Taylor series expansions and updates the state estimate based on these linear models.

The Extended Kalman Filter involves two primary steps same as Kalman Filter: the prediction step and the update step.

Prediction Step: The prediction step estimates the state at the current time based on the previous estimate and the nonlinear motion model.

State Prediction: x̂ₙ = f(x̂ₙ₋₁, uₙ)

Covariance Prediction: Pₙ = FₙPₙ₋₁Fₙᵀ + Qₙ

Here, x̂ₙ represents the predicted state at time n, f( ) represents the nonlinear motion model that relates the current state estimate and the control input uₙ. Fₙ is the Jacobian matrix of f( ) evaluated at the previous state estimate, and Qₙ is the process noise covariance matrix.

Update Step: The update step incorporates sensor measurements to refine the state estimate obtained from the prediction step.

Kalman Gain Calculation: Kₙ = PₙHₙᵀ(HPₙHₙᵀ + Rₙ)⁻¹

State Update: x̂ₙ = x̂ₙ + Kₙ(yₙ – h(x̂ₙ))

Covariance Update: Pₙ = (I – KₙHₙ)Pₙ

In the above equations, Kₙ is the Kalman Gain, determines the weight of the measurements in the state update, Hₙ is the Jacobian matrix of the measurement model h( ) evaluated at the predicted state, yₙ represents the sensor measurement, and Rₙ is the measurement noise covariance matrix.

The most popular localization algorithm would be the Extended Kalman Filter (EKF) which is widely used in SLAM to improve state estimation in the presence of nonlinearities. It enables accurate localization and mapping by combining sensor measurements (for example, from cameras, LiDAR, or range finders) with a nonlinear motion model.

By linearizing the motion and measurement models around the current state estimate, the EKF can handle the nonlinearity and update the state estimate accordingly, hence allowing the robot to navigate through complex environments and build accurate maps while simultaneously estimating its pose.

Ignitarium’s Robotics and Perception AI Software team brings expertise on enabling the software stack for Autonomous Navigation, SLAM, Localization, Path Planning and Perception, making reliable implementations a reality, hence driving up ROI for adopters in the long run.

References:

To delve deeper into the mathematical foundations of the Kalman Filter in SLAM and get more insights, Probabilistic Robotics by Sebastian Thrun, Wolfram Burgard, and Dieter Fox covers the Kalman Filter and its applications in SLAM comprehensively and provides detailed explanations, examples, and derivations.