- Vishnu Dev

- October 13, 2021

AI-enabled Social Distancing Detection

Social distancing has been found to be an effective measure for curbing the spread of COVID-19. According to the Centers for Disease Control and Prevention, individuals should stay at least 6 ft apart to limit transmission. As parts of the world limp back to normal and people slowly return to their workplaces and schools, the need for automatic social distance monitoring is higher than ever. To address this need, our AI-enabled platform detects social distancing violations by analysing live video streams from cameras installed in public places or on work premises.

This blog post describes how we implemented this social distancing detection system.

AI for Intelligent Social Distancing Monitoring

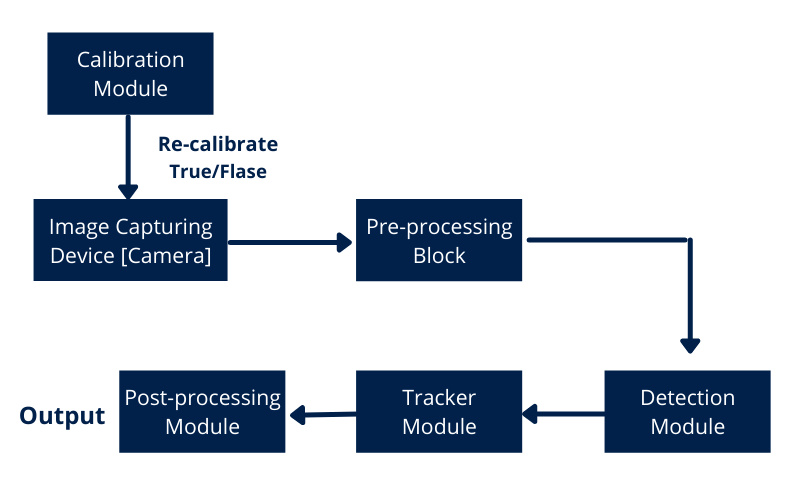

A high-level flow diagram of the AI-based Social Distancing Monitor that we implemented is illustrated below:

Data Preparation/ Annotation

One of the datasets used for training the system is the publicly available VIRAT dataset.

The VIRAT dataset consists of real-life video samples that are relevant to video surveillance applications. The videos were collected from multiple sites, at a variety of viewpoints and resolutions, with actions performed by different people. It has become a benchmark dataset for the computer vision community.

The images were labelled using Labelme, an open-source graphical image annotation tool that is widely used by the AI community to label images for detection, segmentation, and classification. It also supports annotating videos.

Calibration Module

Because the video stream can be captured from any angle, a calibration module runs a one-time calibration to compute the homography that transforms the camera view to a top-down view. Once the homography is computed, the transformation matrix is retained and reused until the camera angle changes.

Calibration Step:

Fig. 2: Selecting 4 points for calibration

Collect four points from the perspective view (Fig. 2). These four points are then mapped to the four corners of a rectangle in the top view. The calibration assumes that every person is standing on the same flat plane.
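The calibration step can be sketched as follows. This is a minimal illustration rather than our production code: it solves for the 3×3 homography directly from four point pairs using NumPy, and the pixel coordinates and rectangle size are hypothetical values chosen for the example. OpenCV's `cv2.getPerspectiveTransform` computes the same kind of matrix from four point pairs.

```python
import numpy as np

def homography_from_4_points(src, dst):
    """Solve for the 3x3 homography H that maps each src point to its dst point."""
    rows, rhs = [], []
    for (x, y), (u, v) in zip(src, dst):
        # From u = (h11*x + h12*y + h13) / (h31*x + h32*y + 1), and likewise for v
        rows.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        rows.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        rhs.extend([u, v])
    h = np.linalg.solve(np.array(rows, dtype=float), np.array(rhs, dtype=float))
    return np.append(h, 1.0).reshape(3, 3)  # h33 is fixed to 1

def to_top_view(H, point):
    """Apply H to an image point and return its top-view (x, y) coordinates."""
    u, v, w = H @ np.array([point[0], point[1], 1.0])
    return u / w, v / w

# Hypothetical calibration clicks in the camera image (pixels) ...
image_points = [(420, 310), (860, 300), (1180, 690), (140, 700)]
# ... and the rectangle they are mapped to in the top view (assumed size)
top_view_points = [(0, 0), (400, 0), (400, 600), (0, 600)]

H = homography_from_4_points(image_points, top_view_points)
```

Because `H` depends only on the camera pose, it can be computed once and stored, then applied to every subsequent frame with `to_top_view`.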

Pre-Processing Module

Since OpenCV reads frames in BGR format, the pre-processing block first converts each frame from BGR to RGB. Next, a custom resizing algorithm resizes the frame while maintaining its aspect ratio. Finally, the image array is converted to a float data type and the pixel values are normalized.
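The three pre-processing steps could look roughly like the sketch below. The details are assumptions made for illustration: a 416×416 network input, nearest-neighbour resampling, grey padding to keep the aspect ratio, and normalization to the range [0, 1]. The custom resizing algorithm used in our system is not reproduced here.

```python
import numpy as np

def preprocess(frame_bgr, target=416):
    """BGR -> RGB, aspect-preserving resize with padding, float conversion, scaling."""
    rgb = frame_bgr[..., ::-1]  # reverse the channel axis: BGR -> RGB
    h, w = rgb.shape[:2]
    scale = target / max(h, w)  # the longer side becomes `target` pixels
    new_h, new_w = max(1, round(h * scale)), max(1, round(w * scale))

    # Nearest-neighbour resize by index sampling (assumed; any resampler would do)
    ys = np.minimum((np.arange(new_h) / scale).astype(int), h - 1)
    xs = np.minimum((np.arange(new_w) / scale).astype(int), w - 1)
    resized = rgb[ys][:, xs]

    # Centre the resized frame on a grey square canvas (assumed padding value 128)
    canvas = np.full((target, target, 3), 128, dtype=np.uint8)
    top, left = (target - new_h) // 2, (target - new_w) // 2
    canvas[top:top + new_h, left:left + new_w] = resized

    # Convert to float and normalize the pixel values to [0, 1]
    return canvas.astype(np.float32) / 255.0
```

Padding rather than stretching keeps people at their original proportions, which matters for a detector trained on undistorted pedestrians.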

Detection Module

We used YOLOv3 for detection. YOLOv3 makes predictions at three different scales: detection is performed by applying 1×1 kernels to feature maps of three different sizes at three different places in the network. Detecting at three scales makes it better at detecting smaller objects. Details are in the original YOLOv3 paper.
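To make the three scales concrete, the short calculation below lists the grid size at each detection layer. It assumes a 416×416 input (an assumption, since the input size is not stated here) together with the standard YOLOv3 strides of 32, 16 and 8 and three anchor boxes per grid cell.

```python
def yolo_v3_grid_sizes(input_size=416, strides=(32, 16, 8)):
    """Return the side length of the prediction grid at each detection scale."""
    if any(input_size % s for s in strides):
        raise ValueError("input size must be divisible by every stride")
    return [input_size // s for s in strides]

def total_predictions(input_size=416, anchors_per_scale=3):
    """Count the candidate boxes produced across all three scales."""
    return sum(anchors_per_scale * g * g for g in yolo_v3_grid_sizes(input_size))

grids = yolo_v3_grid_sizes()   # [13, 26, 52]
boxes = total_predictions()    # 10647
```

The finest grid (52×52) has cells of only 8×8 pixels, which is why the third scale helps with small or distant pedestrians.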

A pedestrian detector trained on the VIRAT dataset is used to find a bounding box around every person in the scene.

Fig. 3: YOLOv3 architecture [Source: https://www.researchgate.net/figure/Network-architecture-of-YOLOv3_fig1_339763978]

Tracker Module

To track objects, we start with all detections in a frame and assign each one an ID. In subsequent frames, this ID is carried forward, and it is dropped when the object disappears from the frame. Here, the Deep SORT algorithm is used to track the pedestrians. Deep SORT uses appearance features of every bounding box, along with distance and velocity, to track the objects of interest.
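The ID lifecycle described above (assign, carry forward, drop) can be illustrated with a much simpler stand-in. The sketch below is not Deep SORT: it has no appearance features or motion model and simply matches boxes greedily by overlap (IoU). The class name and the 0.3 threshold are hypothetical choices for the example.

```python
from itertools import count

def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union else 0.0

class GreedyIouTracker:
    """Toy tracker: greedy IoU matching between consecutive frames."""

    def __init__(self, min_iou=0.3):
        self.min_iou = min_iou
        self.tracks = {}        # track ID -> box from the previous frame
        self._ids = count(1)    # source of fresh IDs

    def update(self, boxes):
        # Score every (track, detection) pair, best overlap first
        candidates = sorted(
            ((iou(tb, b), tid, i)
             for tid, tb in self.tracks.items()
             for i, b in enumerate(boxes)),
            reverse=True,
        )
        assigned, used_tracks = {}, set()
        for score, tid, i in candidates:
            if score < self.min_iou:
                break
            if tid in used_tracks or i in assigned:
                continue
            assigned[i] = tid            # carry the existing ID forward
            used_tracks.add(tid)
        for i in range(len(boxes)):
            if i not in assigned:
                assigned[i] = next(self._ids)   # new person: new ID
        # Tracks that matched nothing are dropped here
        self.tracks = {assigned[i]: boxes[i] for i in range(len(boxes))}
        return self.tracks

tracker = GreedyIouTracker()
frame1 = tracker.update([(10, 10, 50, 100), (200, 20, 240, 110)])
frame2 = tracker.update([(14, 12, 54, 102)])
frame3 = tracker.update([(18, 14, 58, 104), (300, 30, 340, 120)])
```

In this run the first person keeps ID 1 across all three frames, ID 2 is dropped when that person leaves in the second frame, and the newcomer in the third frame receives ID 3. Deep SORT makes the same kind of decision far more robustly, for example when people cross paths or are briefly occluded.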

Post-Processing

From the YOLO output, we take the bounding box of every pedestrian and estimate their (X, Y) location in the top-down view. To estimate the location, we apply the transformation to the bottom centre point of each person's bounding box, then measure the distance between every pair of people. During calibration, a scale factor is also measured to determine how many pixels correspond to 6 ft in real life. The measured distance is scaled using this factor.

Finally, people who violate social distancing are marked in red, and the distance in meters is shown on a 2D map on the right side for better understanding.
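The distance check can be sketched as below. The function takes points that are already in top-view coordinates, and the scale factor of 120 pixels per 6 ft is a made-up value for illustration; in practice it comes from calibration. Function and variable names are hypothetical.

```python
from itertools import combinations
from math import dist

FEET_TO_METRES = 0.3048

def bottom_centre(box):
    """Ground contact point of a bounding box given as (x1, y1, x2, y2)."""
    x1, _, x2, y2 = box
    return ((x1 + x2) / 2, y2)

def find_violations(top_view_points, pixels_per_6ft, min_feet=6.0):
    """Return the indices of people closer than min_feet, plus all pairwise distances in metres."""
    feet_per_pixel = 6.0 / pixels_per_6ft
    violators, distances = set(), {}
    for (i, p), (j, q) in combinations(enumerate(top_view_points), 2):
        d_feet = dist(p, q) * feet_per_pixel
        distances[(i, j)] = d_feet * FEET_TO_METRES
        if d_feet < min_feet:
            violators.update((i, j))   # both people in the pair get flagged
    return violators, distances

# Hypothetical top-view positions of three people, in pixels
points = [(100, 100), (160, 180), (400, 120)]
violators, distances = find_violations(points, pixels_per_6ft=120)
```

Here the first two people are 100 pixels apart, which is 5 ft (about 1.52 m) at the assumed scale, so both are flagged, while the third person is well clear of the others.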

Fig. 4: Demo video based on samples from the public “VIRAT video dataset”

Conclusion

AI-enabled social distancing detection systems will see wide adoption as social distancing and masking continue to be promoted as necessary protocols by the WHO, despite large sections of the population being vaccinated. As more people across the world return to their regular commutes, public places, offices and schools, all of these premises will need such systems to help keep people safe.
