- Ashwin Joseph and Christina Kuriakose

- October 27, 2021

Light-weight People Detection on Microcontrollers using Custom Neural Networks

AI-driven computer vision systems are increasingly part of our day-to-day life across industrial and consumer applications. Deploying Machine Learning (ML) models on Edge devices is also gaining traction because it improves performance and data security: data is not sent to the cloud for processing.

While most classical People Detection implementations use Application Processors or GPU devices for compute, there are highly cost-sensitive applications that need similar functionality on low-cost microcontroller (MCU) devices. One such use case is an office appliance (e.g. a printer, scanner or copier) that wakes up from power-saving mode and prepares itself for operation as people approach it.

In this blog, we describe Ignitarium’s Light-weight People Detection application that is part of our FLK-i Video Analytics platform. The ML-based application analyzes video footage captured by a low-resolution RGB camera attached to the appliance and identifies the presence and approach of humans within a 3-meter radius.

There are numerous methods for People Detection, ranging from classical Computer Vision techniques such as the Haar Cascades available in the OpenCV library to Deep Learning based approaches such as SSD Mobilenet, Posenet, Openpose and Openpifpaf. A few good resources for learning more about these methods are included at the end of this blog. However, given the RAM, Flash and compute constraints of our target MCU, we developed our own Custom Neural Network that was light enough to fit within them. The subsequent sections describe our development approach.

Dataset Preparation

A large corpus of humans-in-frame data was collected from various sources – open source databases as well as via data collection exercises using the specific camera that was to be used in the target electronic appliance. Care was taken to collect data from all possible approach angles within the targeted camera Field-of-View (FoV). The collected data was carefully segregated using a combination of image processing-based and manual binning.

Labelling

The cleaned dataset was labelled by our data labelling team using standard annotation frameworks.

Fine Tuning the model

We built a custom lightweight neural network that could accurately detect whether a person in the frame is near or far away.

The most challenging task was to build a Custom Neural Network that would operate within our stringent CPU frequency and RAM footprint budget. The following are some of the CNN configuration choices we explored before we froze the final model:

- Determining the ideal input image dimension – this required getting the tradeoff right so as to not lose spatial information and at the same time reduce the RAM consumed by the layers

- Exploring different color scales

- Determining the kernel size and fine-tuning the number of filters used in the convolutional layers

- Improving the model by visualizing the intermediary layer outputs

- Determining the number of repetitive blocks to re-use
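The trade-off between input dimension, color scale and RAM described in the list above can be illustrated with some simple arithmetic. The sketch below is only an illustration: the candidate resolutions, the filter count and the stride are hypothetical values chosen for the example and are not the configurations used in the actual model. It assumes uint8 tensors (1 byte per value) and that a layer's input and output buffers are resident in RAM at the same time.

```python
def tensor_bytes(height, width, channels, bytes_per_value=1):
    """Size in bytes of a dense H x W x C tensor (1 byte per value for uint8)."""
    return height * width * channels * bytes_per_value


def conv_output_shape(height, width, filters, stride=1):
    """Output shape of a 'same'-padded convolution with the given stride."""
    out_h = -(-height // stride)  # ceiling division
    out_w = -(-width // stride)
    return out_h, out_w, filters


# Hypothetical candidates (height, width, channels); not taken from the article.
# channels=1 stands for grayscale, channels=3 for RGB.
candidates = [(96, 96, 1), (96, 96, 3), (128, 128, 1), (160, 160, 3)]

for h, w, c in candidates:
    input_bytes = tensor_bytes(h, w, c)
    # Hypothetical first layer: 8 filters, stride 2.
    out_h, out_w, out_c = conv_output_shape(h, w, filters=8, stride=2)
    output_bytes = tensor_bytes(out_h, out_w, out_c)
    # Assumption: input and output buffers of the layer coexist in RAM.
    peak_bytes = input_bytes + output_bytes
    print(f"{h}x{w}x{c}: input {input_bytes / 1024:.1f} KB, "
          f"first conv output {output_bytes / 1024:.1f} KB, "
          f"peak {peak_bytes / 1024:.1f} KB")
```

Even this rough estimate shows why input size and color scale matter on a device with about 512 KB of SRAM: moving from grayscale to RGB triples the input buffer, and the filter count of the first layer directly scales its output buffer.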

Deployment on to hardware device

Once the model was ready and validated for accuracy, the next step was to deploy it on the target hardware: a Renesas RX64M class MCU operating at a maximum frequency of 120 MHz with 512 KB of SRAM. The TensorFlow model was translated into C using a combination of the vendor-supplied toolkit and manual scripts that we developed to implement custom layers and operators.

The output from the camera is transferred to the MCU using a parallel data capture (PDC) protocol and the YUV input pixels are converted to RGB format. The RGB image is then provided to our Person Detection Neural Network algorithm.
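To make the YUV to RGB step concrete, here is a minimal reference sketch in Python. It is not the code running on the MCU. It assumes a packed YUYV (4:2:2) byte order and the full-range BT.601 coefficients in 8-bit fixed point; the actual pixel layout and coefficients depend on the camera configuration, which the article does not specify. The function names are hypothetical.

```python
def clamp_u8(value):
    """Clamp an integer to the 0..255 range of a uint8 channel."""
    return max(0, min(255, value))


def yuv_to_rgb(y, u, v):
    """Convert one YUV sample to RGB using integer-only arithmetic.

    Assumption: full-range BT.601, coefficients scaled by 256
    (1.402 -> 359, 0.344 -> 88, 0.714 -> 183, 1.772 -> 454).
    """
    d = u - 128
    e = v - 128
    r = y + ((359 * e) >> 8)
    g = y - ((88 * d + 183 * e) >> 8)
    b = y + ((454 * d) >> 8)
    return clamp_u8(r), clamp_u8(g), clamp_u8(b)


def yuyv_to_rgb(frame):
    """Convert packed YUYV bytes (Y0 U Y1 V ...) to packed RGB bytes.

    Assumption: each 4-byte group carries two pixels that share U and V.
    """
    if len(frame) % 4 != 0:
        raise ValueError("YUYV data length must be a multiple of 4 bytes")
    rgb = bytearray()
    for i in range(0, len(frame), 4):
        y0, u, y1, v = frame[i:i + 4]
        rgb.extend(yuv_to_rgb(y0, u, v))
        rgb.extend(yuv_to_rgb(y1, u, v))
    return bytes(rgb)


# Two neutral pixels (U = V = 128): mid gray followed by white.
print(list(yuyv_to_rgb(bytes([128, 128, 255, 128]))))
```

Integer-only arithmetic with a right shift is used here because it maps directly onto what a C implementation on a microcontroller would typically do, avoiding floating-point operations per pixel.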

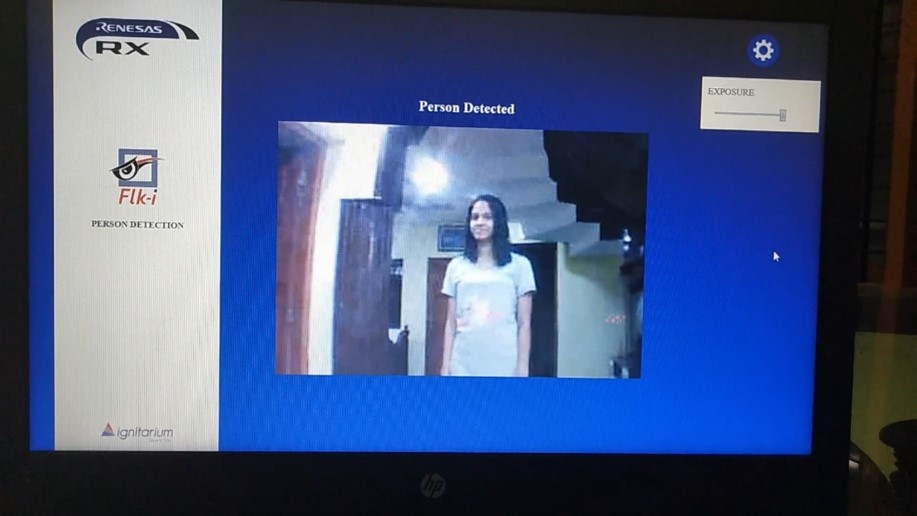

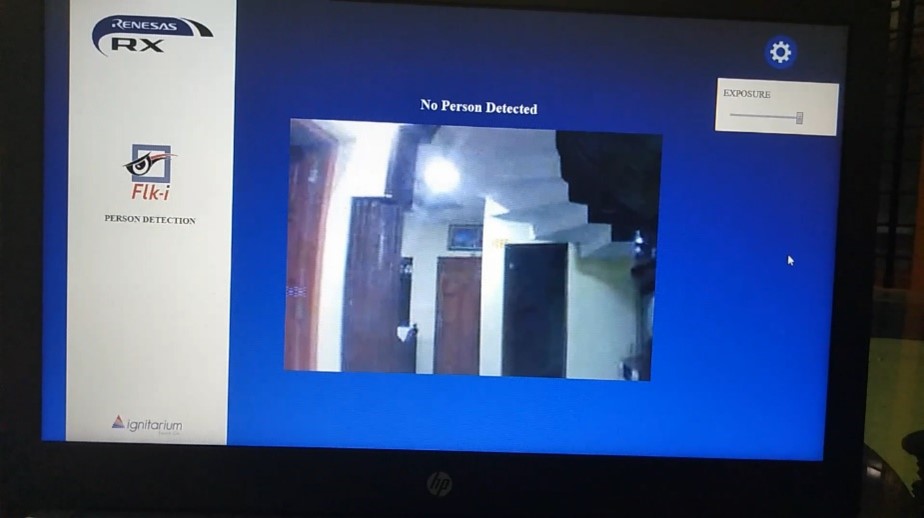

The camera output and the result of the people detection are visualized via a web page. In actual application scenarios, the detection results will trigger wake-up signals over GPIO.

Application Snapshots

Result

We were able to generate a custom machine learning model with a memory footprint of less than 200 KB (uint8) that could accurately classify whether or not a person was present in the vicinity.
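The effect of using uint8 on the footprint can be shown with a short sketch. It assumes that the footprint is dominated by weights stored at one byte each, and it uses the affine (scale and zero point) quantization scheme that is common for 8-bit models. The article does not state which quantization scheme was used, so the functions and the scale and zero-point values below are illustrative assumptions only.

```python
def weights_bytes(num_values, bytes_per_value):
    """Storage needed for num_values weights at the given width."""
    return num_values * bytes_per_value


def quantize(value, scale, zero_point):
    """Map a real value to uint8 with affine quantization (assumed scheme)."""
    q = round(value / scale) + zero_point
    return max(0, min(255, q))


def dequantize(q, scale, zero_point):
    """Recover the approximate real value from its uint8 code."""
    return scale * (q - zero_point)


# Upper bound on uint8 values that fit in 200 KB, assuming weights only.
max_values = 200 * 1024
print(max_values)                                   # 204800 one-byte values
print(weights_bytes(max_values, 4) / 1024, "KB")    # same count as float32

# Hypothetical quantization parameters, chosen only for the example.
scale, zero_point = 0.5, 128
code = quantize(1.0, scale, zero_point)
print(code, dequantize(code, scale, zero_point))
```

Under these assumptions, the same number of weights stored as float32 would need four times the space, which would not fit comfortably alongside the image buffers on the target device.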

Metrics

The uint8 model took about 513 milliseconds (~2 fps) to process an image.
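To put this latency in context, the sketch below converts it to a frame rate and estimates how many frames can be processed while a person crosses the 3-meter detection radius. The walking speed of 1.4 m/s is an assumption made for illustration and is not a figure from our measurements; the function name is hypothetical.

```python
LATENCY_MS = 513          # per-image inference time reported above
DETECTION_RADIUS_M = 3.0  # detection radius of the application


def frames_during_approach(distance_m, speed_m_per_s, latency_ms):
    """Whole frames that can be processed while covering distance_m."""
    approach_time_ms = distance_m / speed_m_per_s * 1000
    return int(approach_time_ms // latency_ms)


fps = 1000 / LATENCY_MS
print(f"{fps:.2f} fps")  # about 1.95 fps

# Assumption: a typical walking speed of 1.4 m/s.
print(frames_during_approach(DETECTION_RADIUS_M, 1.4, LATENCY_MS))
```

Under that assumption, a handful of frames are available during an approach, which is why a frame rate of roughly 2 fps can still be adequate for a wake-up use case.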

The entire application including the image buffer, pre-processing and GUI component consumed a footprint of ~800KB. Since the device had only around 500KB of usable SRAM, the code was modularized carefully into persistent and non-persistent segments with the non-persistent sections of code dynamically loaded from ROM.

Conclusion

Lightweight Neural Network-based models can be effectively deployed on low-cost micro-controller devices to address a host of consumer appliances and industrial applications that call for the detection of people approaching them.

Resources

The following links are helpful for learning more about some of the People Detection methods mentioned above:

- GitHub – CMU-Perceptual-Computing-Lab/openpose: OpenPose: Real-time multi-person keypoint detection library for body, face, hands, and foot estimation

- Pose estimation | TensorFlow Lite

- GitHub – openpifpaf/openpifpaf: Official implementation of “OpenPifPaf: Composite Fields for Semantic Keypoint Detection and Spatio-Temporal Association” in PyTorch.

- OpenCV: Cascade Classifier

- Medium – mayank singhal: Object Detection using SSD Mobilenet and Tensorflow Object Detection API: Can detect any single class from coco dataset.
