OpenGL for Video Processing in Android

Introduction

OpenGL is a graphics library specification for rendering 2D and 3D graphics. It achieves the required performance by using the GPU for hardware acceleration. Because OpenGL is a specification that only defines the APIs, the implementation is the responsibility of the GPU vendor. For the same reason, OpenGL APIs can behave differently on different GPUs.

Video pre- and post-processing is commonly used in multimedia. By integrating video processing algorithms into the video pipeline, the viewing experience of the end user can be enhanced. It is the developer’s choice how to implement an algorithm, whether as a pure software implementation or a hardware-accelerated one.

In real-time applications, the main challenge is the extra processing delay these algorithms introduce, which affects the overall performance of video playback or recording. Software-based algorithms tend to be expensive: their processing time adds a larger delay to the pipeline, which can lead to frame drops. To overcome this, we need to implement the algorithm on hardware, and this is where OpenGL comes in. OpenGL lets us run the computation on the GPU, which makes the algorithm suitable for real-time applications.

Alternatives and Applications of OpenGL

Popular alternatives to OpenGL include Vulkan, DirectX, Metal, and WebGL. Compared with OpenGL, Vulkan gives the application more explicit control over the GPU, which also makes it more complex to use. Unlike OpenGL, DirectX and Metal are platform-dependent: DirectX targets Microsoft platforms and Metal targets Apple platforms such as iOS. WebGL is a variant based on OpenGL ES that brings hardware acceleration to web browsers.

OpenGL has a wide variety of use cases. The major ones are:

- Video processing and rendering applications on platforms and frameworks such as Windows, Linux, Android, iOS, the web, and GStreamer

- Game development

- Most visual applications that need hardware acceleration

What is OpenGL?

As already mentioned, OpenGL is a graphics library specification used to perform computations on the GPU. It follows a client-server model:

- The client side is the application, which issues OpenGL commands

- The server side is the OpenGL implementation supplied by the GPU vendor, which executes those commands on the GPU

Basic OpenGL Keywords

- OpenGL Context:

OpenGL works as a state machine. Its behaviour is controlled by state variables, each of which holds a current value. For example, to draw a red line, we first select the colour state variable and set it to red. From then on, everything we draw is red until the state is changed again.

The collection of all these states is commonly known as the context. An application can have multiple contexts.
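
The state-machine idea can be illustrated with a toy model in plain Python. This is not OpenGL code: the `Context` class, its `set_colour` and `draw_line` methods, and the colour names are all hypothetical, chosen only to mirror the red-line example above.

```python
class Context:
    """Toy stand-in for an OpenGL context: a bag of state plus draw calls."""

    def __init__(self):
        # Hypothetical state variable; a real context holds many more.
        self.colour = "white"
        self.drawn = []  # record of (shape, colour) pairs, for inspection

    def set_colour(self, colour):
        # Changing state affects every draw call issued afterwards.
        self.colour = colour

    def draw_line(self):
        # The draw call takes no colour argument; it reads the current state.
        self.drawn.append(("line", self.colour))


ctx_a = Context()
ctx_a.set_colour("red")
ctx_a.draw_line()
ctx_a.draw_line()

# A second context has its own, independent state.
ctx_b = Context()
ctx_b.draw_line()
```

Both lines drawn through `ctx_a` pick up the red state without being told to, while `ctx_b` is unaffected, which is the sense in which each context is a separate collection of states.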

- Textures:

A texture is a GPU buffer, accessible from shaders, that can store 2D image data.

- Framebuffer:

A framebuffer is the OpenGL object to which OpenGL finally renders its output. The default framebuffer is the display or window created by the application. For off-screen rendering (i.e. rendering to a buffer), we create a texture and attach it to a framebuffer object as the render target.

- Shaders:

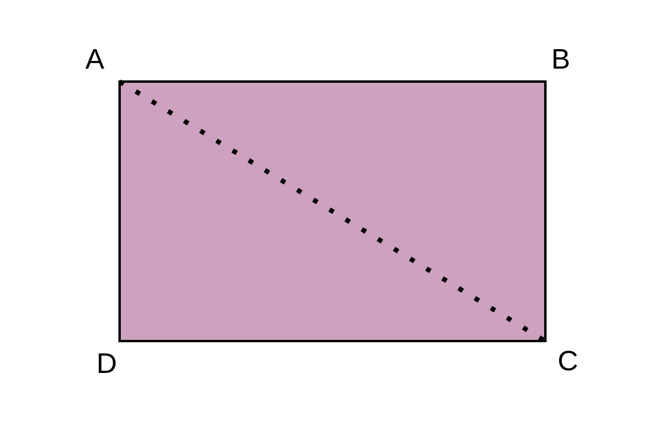

A shader is a piece of code that runs on the GPU. A rendering pipeline has several stages; the two discussed here, the vertex shader and the fragment shader, are programmable, while stages such as rasterization are fixed-function. In the pipeline, the vertex shader runs first, followed by the fragment shader. To explain these two shaders, let’s take the example of rendering a 2D image to a framebuffer, using the rectangle ABCD described next.

Vertex Shader

Before rendering the image, we need to define the area of the framebuffer to which it will be rendered. Here, the area is the rectangle ABCD. In OpenGL, every area is defined using triangles, because any shape can be constructed by combining triangles. We therefore build the rectangle ABCD from two triangles, ABC and CDA. We pass the triangle vertices to the vertex shader in sequential order; the vertex shader outputs the position of each vertex, and the pipeline assembles these vertices into the two triangles that together form the rectangle.
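
As a rough sketch of the vertex data involved, the snippet below lists the rectangle ABCD as two triangles, ABC and CDA, in plain Python. The corner coordinates are an assumption (a full-screen quad in normalized device coordinates, where x and y run from -1 to 1), and the `triangle_area` helper is hypothetical, used only to check that the two triangles cover the whole rectangle.

```python
# Corners of the rectangle in normalized device coordinates (assumed layout).
A = (-1.0, 1.0)    # top-left
B = (-1.0, -1.0)   # bottom-left
C = (1.0, -1.0)    # bottom-right
D = (1.0, 1.0)     # top-right

# Vertices in the order they would be handed to the vertex shader:
# triangle ABC followed by triangle CDA.
vertices = [A, B, C, C, D, A]


def triangle_area(p, q, r):
    """Area of a triangle from its corner coordinates (shoelace formula)."""
    cross = (q[0] - p[0]) * (r[1] - p[1]) - (r[0] - p[0]) * (q[1] - p[1])
    return abs(cross) / 2.0


# Group the flat vertex list into triangles, three vertices at a time.
triangles = [tuple(vertices[i:i + 3]) for i in range(0, len(vertices), 3)]
total_area = sum(triangle_area(*t) for t in triangles)
```

Six vertices are submitted even though the rectangle has four corners, because A and C are shared by both triangles. The combined area of the two triangles equals the 2 x 2 area of the rectangle.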

Fragment Shader

Once the vertex shader has defined the area to be rendered, that area is rasterized and the fragment shader is called for each pixel within it. The fragment shader contains the logic that decides the colour of each pixel.
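
The per-pixel behaviour can be mimicked on the CPU. The sketch below is plain Python, not a real rasterizer: the 4 x 4 framebuffer size, the render area, the `inside_area` coverage test, and the `fragment` function are hypothetical stand-ins for the rasterization stage and a fragment shader.

```python
WIDTH, HEIGHT = 4, 4

# Render area in pixel coordinates: columns 1..2 and rows 1..2 (assumed).
X0, Y0, X1, Y1 = 1, 1, 3, 3


def inside_area(x, y):
    """Stand-in for rasterization: is this pixel covered by the render area?"""
    return X0 <= x < X1 and Y0 <= y < Y1


def fragment(x, y):
    """Stand-in for a fragment shader: returns the colour of one pixel."""
    return (255, 0, 0)  # paint every covered pixel red


# Start with a black framebuffer, then call `fragment` once per covered pixel.
framebuffer = [[(0, 0, 0) for _ in range(WIDTH)] for _ in range(HEIGHT)]
calls = 0
for y in range(HEIGHT):
    for x in range(WIDTH):
        if inside_area(x, y):
            framebuffer[y][x] = fragment(x, y)
            calls += 1
```

Only the pixels inside the area trigger a call; the rest of the framebuffer keeps its previous contents. On a GPU these invocations run in parallel rather than in a nested loop, which is where the speed-up comes from.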

Developing a Real-Time Video Processing Plugin

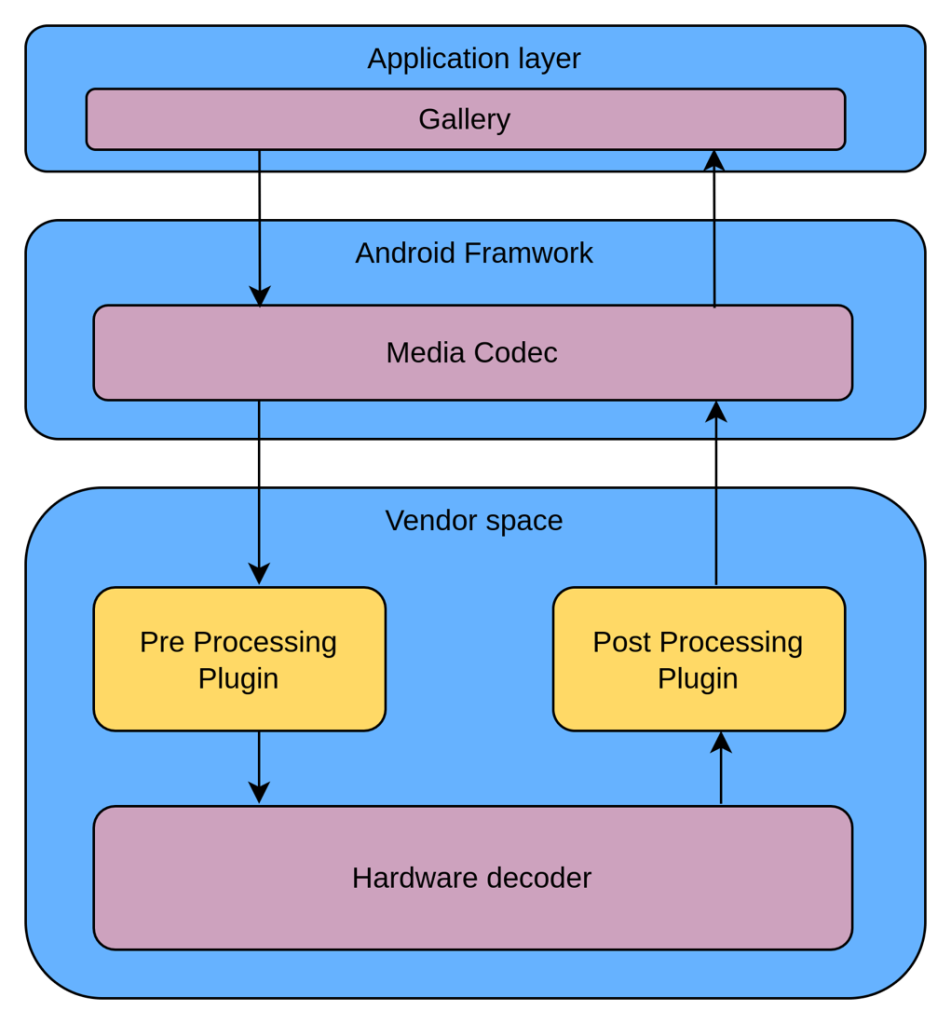

We used OpenGL to develop real-time video processing plugins for Android. Android uses the Codec2 framework for video processing, and our plugin can be integrated into the Codec2 pipeline in the vendor space.

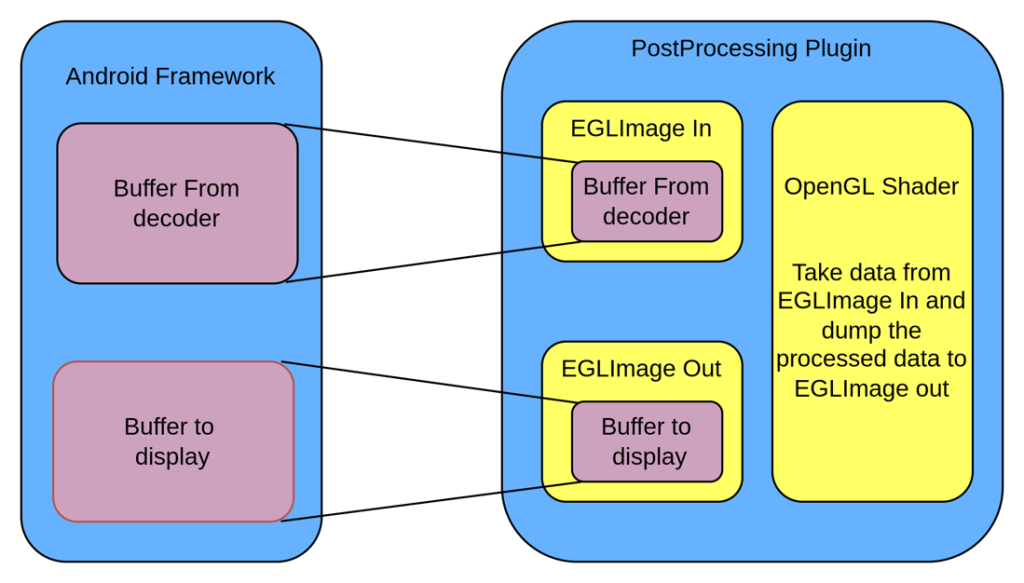

Let’s take video post-processing as an example.

We used OpenGL to integrate video post-processing algorithms into the Android framework.

The buffer received from the framework can be a CPU buffer or a GPU buffer, depending on the use case. Its data needs to be made available to the GPU for post-processing. There are various techniques to do this; some of them are discussed below.

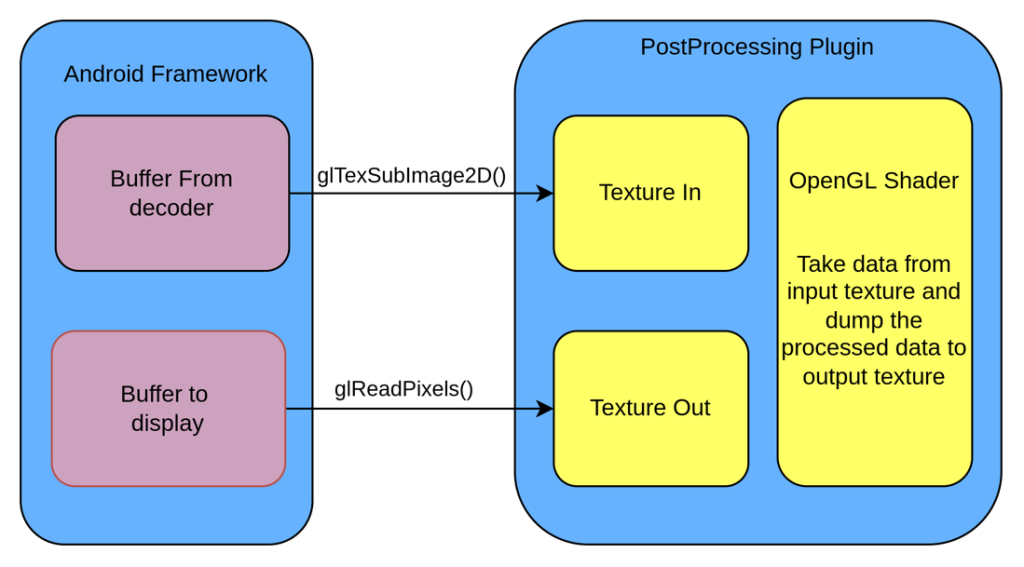

1. Conventional Approach (glTexSubImage2D() and glReadPixels())

This is the most common and simplest way to copy data from a CPU buffer to a GPU buffer. Create two textures (buffers that OpenGL shaders can access): an input texture that holds the data to be processed and an output texture that stores the processed data. Use glTexSubImage2D() to copy the raw data received from the decoder into the input texture, then run the shader to do the post-processing and write the result to the output texture. Finally, copy the processed data from the output texture to the Android buffer using glReadPixels(), and pass that buffer to the framework for display.

Drawbacks

This approach has two main drawbacks:

- Both glTexSubImage2D() and glReadPixels() are expensive in terms of performance, as each takes longer than a plain memcpy(). Processing a single frame requires two such copy operations, which leads to frame drops for FHD and UHD streams.

- In this design, the CPU needs access to the data pointers of the buffers, which is possible only for normal playback. For secure playback, as used by services such as Netflix and Prime, CPU access to the buffers is restricted, so this approach does not work.
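
To get a feel for the first drawback, the data volume of the two copies can be estimated with simple arithmetic. The figures below assume 4 bytes per pixel (RGBA) and 60 frames per second. Both are assumptions for illustration, not values from the plugin described here, and the actual cost of each API call depends on the GPU and driver.

```python
BYTES_PER_PIXEL = 4   # assumed RGBA, 8 bits per channel
FPS = 60              # assumed frame rate
COPIES_PER_FRAME = 2  # one upload (glTexSubImage2D) + one readback (glReadPixels)

RESOLUTIONS = {"FHD": (1920, 1080), "UHD": (3840, 2160)}


def copied_bytes_per_second(width, height):
    """Bytes moved per second by the two per-frame copies."""
    frame_bytes = width * height * BYTES_PER_PIXEL
    return frame_bytes * COPIES_PER_FRAME * FPS


frame_budget_ms = 1000.0 / FPS  # time available to handle one frame

for name, (w, h) in RESOLUTIONS.items():
    mb_per_s = copied_bytes_per_second(w, h) / 1e6
    print(f"{name}: {mb_per_s:.0f} MB/s copied, {frame_budget_ms:.2f} ms per frame")
```

Under these assumptions the copies alone move roughly 1 GB/s for FHD and 4 GB/s for UHD, and both copies plus the shader pass must fit in about 16.67 ms per frame.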

2. Optimized Approach (EGLImage)

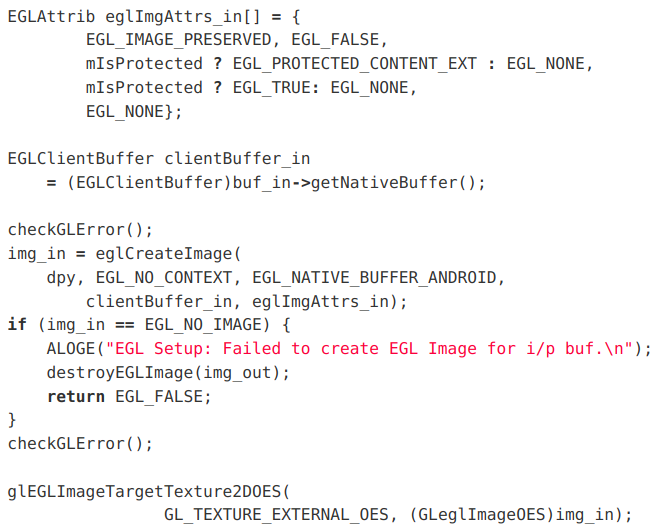

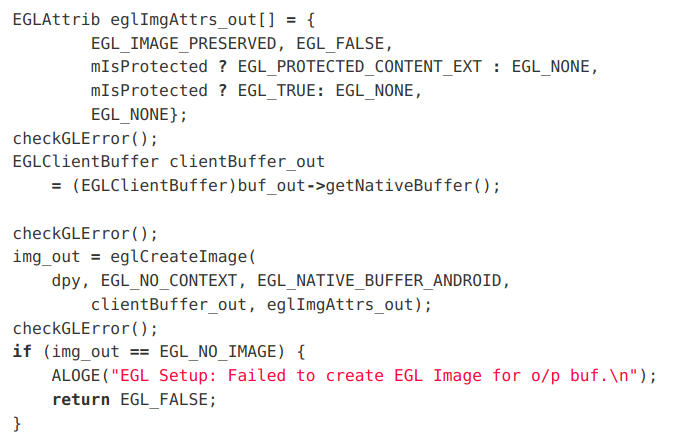

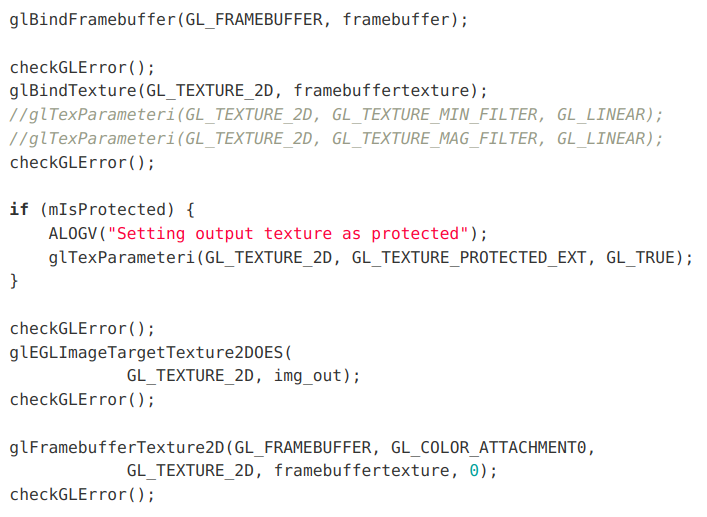

EGLImage lets us map Android buffers to OpenGL textures. The texture and the buffer then share the same data space, which eliminates the need for a separate data copy.
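
The difference between copying and sharing a data space can be shown with a loose analogy in plain Python. This is not EGL code: a `bytearray` stands in for the Android buffer, a second `bytearray` for a texture filled by a copy, and a `memoryview` for a texture that shares the buffer's storage.

```python
# Stand-in for an Android buffer holding one tiny frame.
android_buffer = bytearray([10, 20, 30, 40])

# Conventional approach: the "texture" is a separate copy of the data.
copied_texture = bytearray(android_buffer)

# EGLImage-style approach: the "texture" is a view onto the same storage.
shared_texture = memoryview(android_buffer)

# "Process" the frame by writing through each texture.
copied_texture[0] = 99
shared_texture[1] = 77

# The write to the copy did not reach the buffer, so a copy back would be needed.
# The write through the shared view landed directly in the buffer.
```

After this runs, `android_buffer` holds 10, 77, 30, 40: the change made through the shared view is visible without any copy, while the change made to the separate copy is not.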

The major steps involved are:

- Create an input EGLImage from the input buffer and bind it as the input texture

- Create an output EGLImage from the output buffer

- Bind the output EGLImage as the framebuffer target

- Render using GLSL (OpenGL Shading Language)

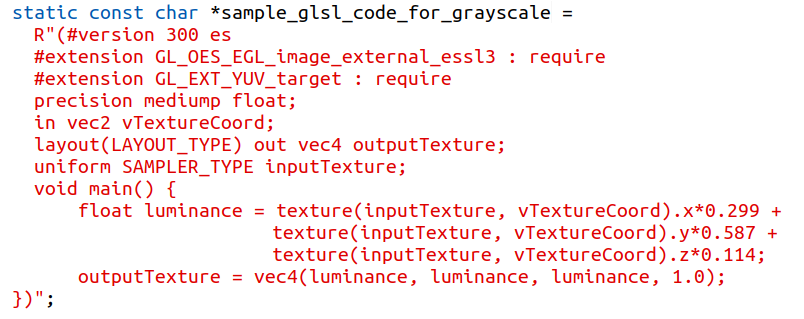

Here we have written a shader that adds a grayscale effect to the frame. It takes data from the input texture, converts it to grayscale, and writes the result to the output texture.
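
The per-pixel logic of such a shader can be sketched on the CPU. The version below is plain Python rather than GLSL, and it assumes the widely used BT.601 luma weights (0.299, 0.587, 0.114) and 8-bit RGB input. The shader in the figure may use different weights or a different pixel format, and the function names here are hypothetical.

```python
def to_gray(pixel):
    """Convert one 8-bit (r, g, b) pixel to grayscale using BT.601 luma weights."""
    r, g, b = pixel
    luma = round(0.299 * r + 0.587 * g + 0.114 * b)
    return (luma, luma, luma)


def process_frame(input_texture):
    """Apply `to_gray` to every pixel, as a fragment shader would per pixel."""
    return [[to_gray(p) for p in row] for row in input_texture]


# A 1 x 3 "frame": pure red, pure green, pure white.
frame = [[(255, 0, 0), (0, 255, 0), (255, 255, 255)]]
output_texture = process_frame(frame)
```

Green maps to a brighter gray than red because the weights reflect how strongly each channel contributes to perceived brightness, and white stays white because the weights sum to 1.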

Conclusion

By using EGLImage, the post-processing plugin achieves zero copy, which allows use cases to target higher frame rates. EGLImage also removes the plugin’s dependency on CPU access to the buffers, which enables the plugin to be used in secure playback.

For the last several years, Ignitarium has been developing multimedia solutions on Linux and Android and supporting customers with them. We work with product companies, MNCs, and startups to architect and design solutions for multimedia products. Over the years, we have built expertise in the Android framework and multimedia technologies, especially in accelerated video rendering using OpenGL and other graphics libraries.

If you are looking for expertise in the Android multimedia framework or other multimedia frameworks, and specifically in 2D and 3D graphics rendering, do reach out to us for a discussion.